AutoInit: Analytic Signal-Preserving Weight Initialization for Neural Networks


Sep 18, 2021

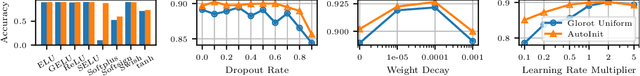

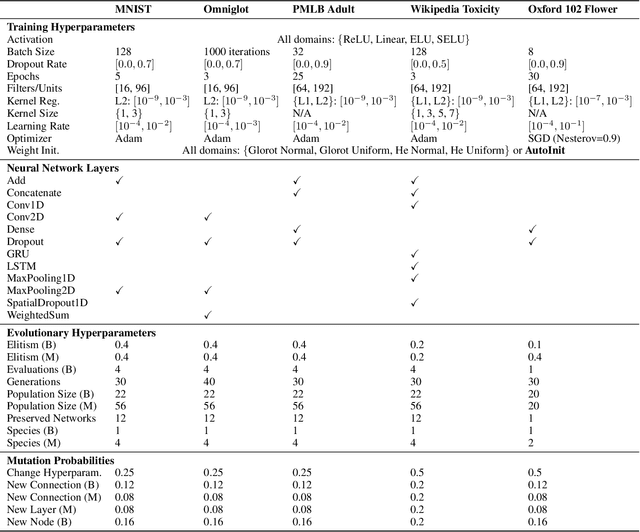

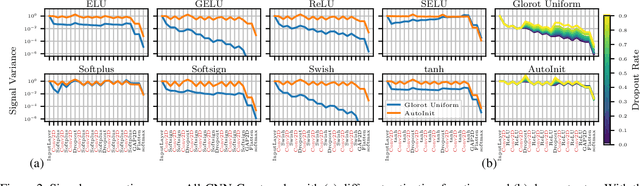

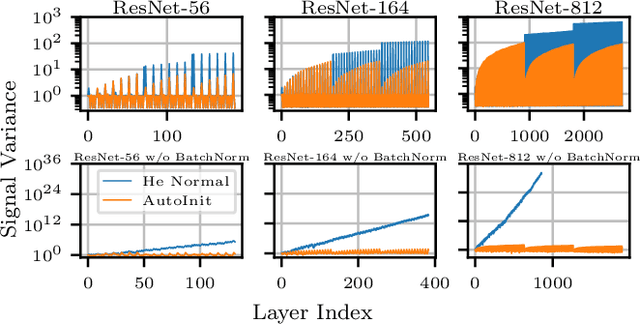

Neural networks require careful weight initialization to prevent signals from exploding or vanishing. Existing initialization schemes solve this problem in specific cases by assuming that the network has a certain activation function or topology. Because such strategies are difficult to derive, modern architectures often reuse these same schemes even when their assumptions do not hold. This paper introduces AutoInit, a weight initialization algorithm that automatically adapts to different neural network architectures. By analytically tracking the mean and variance of signals as they propagate through the network, AutoInit scales the weights at each layer appropriately to avoid exploding or vanishing signals. Experiments demonstrate that AutoInit improves the performance of various convolutional and residual networks across a range of activation function, dropout, weight decay, learning rate, and normalizer settings. Further, in neural architecture search and activation function meta-learning, AutoInit automatically calculates specialized weight initialization strategies for thousands of unique architectures and hundreds of unique activation functions, and improves performance in vision, language, tabular, multi-task, and transfer learning scenarios. AutoInit thus serves as an automatic configuration tool that makes the design of new neural network architectures more robust. The AutoInit package provides a wrapper around existing TensorFlow models and is available at https://github.com/cognizant-ai-labs/autoinit.
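The central idea of the abstract is to propagate the mean and variance of signals analytically and to pick each layer's weight scale from them. A minimal pure-Python sketch can illustrate this for a plain stack of Dense then ReLU layers. It is not the AutoInit package's API or implementation. The helper names (`relu_moments`, `dense_weight_std`, `plan_mlp`) are hypothetical, and the sketch assumes zero-mean weights independent of the inputs, zero biases, and Gaussian pre-activations.

```python
import math


def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def relu_moments(mean, var):
    """Mean and variance of ReLU(X) for X ~ N(mean, var), with var > 0."""
    std = math.sqrt(var)
    a = mean / std
    m1 = mean * normal_cdf(a) + std * normal_pdf(a)                     # E[ReLU(X)]
    m2 = (mean ** 2 + var) * normal_cdf(a) + mean * std * normal_pdf(a)  # E[ReLU(X)^2]
    return m1, m2 - m1 * m1


def dense_weight_std(fan_in, in_mean, in_var):
    """Std of zero-mean weights that gives the dense output unit variance.

    For y = sum_i w_i x_i with E[w] = 0, Var(w) = s^2 and w independent of x:
    E[y] = 0 and Var(y) = fan_in * s^2 * (in_var + in_mean^2).
    """
    second_moment = in_var + in_mean ** 2
    return math.sqrt(1.0 / (fan_in * second_moment))


def plan_mlp(fan_ins, in_mean=0.0, in_var=1.0):
    """Hypothetical helper: per-layer weight stds for a Dense -> ReLU stack."""
    stds = []
    mean, var = in_mean, in_var
    for fan_in in fan_ins:
        stds.append(dense_weight_std(fan_in, mean, var))
        # After scaling, the dense output is assumed ~N(0, 1); push it through ReLU.
        mean, var = relu_moments(0.0, 1.0)
    return stds


if __name__ == "__main__":
    for k, s in enumerate(plan_mlp([784, 256, 128])):
        print(f"layer {k}: weight std = {s:.5f}")
```

Under these assumptions, the first layer recovers LeCun-style scaling, $\sigma = 1/\sqrt{\text{fan\_in}}$, for unit-variance inputs. Every later layer recovers He-style scaling, $\sigma = \sqrt{2/\text{fan\_in}}$, because ReLU applied to a standard normal has second moment 1/2. Other activations or layer types would each need their own moment formulas. That per-layer bookkeeping is what the abstract describes AutoInit as performing automatically.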
