Attentional Policies for Cross-Context Multi-Agent Reinforcement Learning

Paper and Code

May 31, 2019

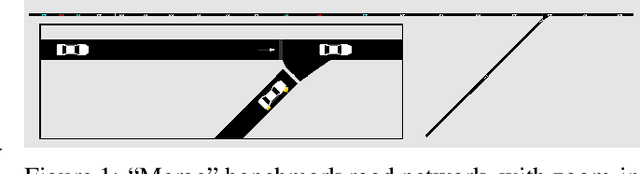

Many potential applications of reinforcement learning in the real world involve interacting with other agents whose numbers vary over time. We propose new neural policy architectures for these multi-agent problems. In contrast to other methods of training an individual, discrete policy for each agent and then enforcing cooperation through some additional inter-policy mechanism, we follow the spirit of recent work on the power of relational inductive biases in deep networks by learning multi-agent relationships at the policy level via an attentional architecture. In our method, all agents share the same policy, but independently apply it in their own context to aggregate the other agents' state information when selecting their next action. The structure of our architectures allow them to be applied on environments with varying numbers of agents. We demonstrate our architecture on a benchmark multi-agent autonomous vehicle coordination problem, obtaining superior results to a full-knowledge, fully-centralized reference solution, and significantly outperforming it when scaling to large numbers of agents.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge