Asymmetric Learning for Graph Neural Network based Link Prediction


Mar 01, 2023

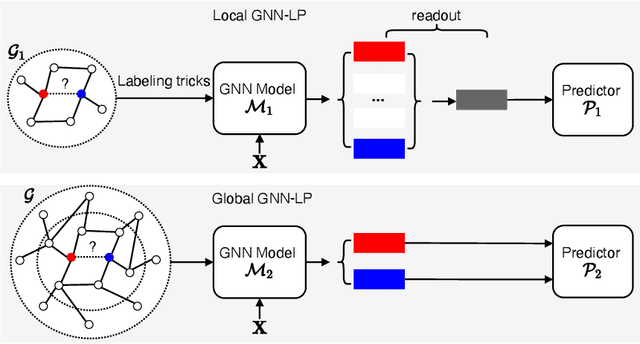

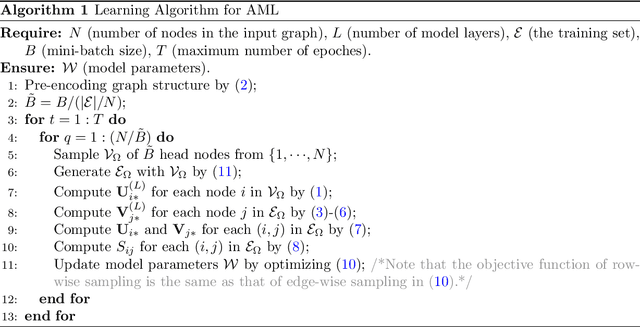

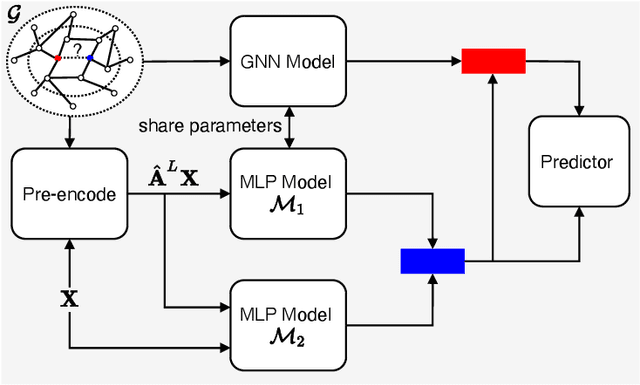

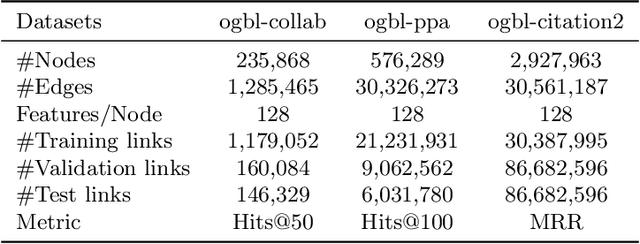

Link prediction is a fundamental problem in many graph-based applications, such as protein-protein interaction prediction. Graph neural networks (GNNs) have recently been widely used for link prediction. However, existing GNN-based link prediction (GNN-LP) methods suffer from a scalability problem when training on large-scale graphs, a problem that has received little attention from researchers. In this paper, we first give a computational complexity analysis of existing GNN-LP methods, which reveals that the scalability problem stems from their symmetric learning strategy: they adopt the same class of GNN models to learn representations for both head and tail nodes. We then propose a novel method, called asymmetric learning (AML), for GNN-LP. The main idea of AML is to adopt a GNN model for learning head node representations while using a multi-layer perceptron (MLP) model for learning tail node representations. Furthermore, AML proposes a row-wise sampling strategy to generate mini-batches for training, which is a necessary component for the asymmetric learning strategy to deliver a training speedup. To the best of our knowledge, AML is the first GNN-LP method to adopt an asymmetric learning strategy for node representation learning. Experiments on three real large-scale datasets show that AML is 1.7X to 7.3X faster in training than baselines with a symmetric learning strategy, while having almost no accuracy loss.
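The asymmetric idea described in the abstract can be illustrated with a minimal Python sketch. This is not the authors' implementation: the one-layer mean-aggregation encoder, the one-layer MLP, the dot-product scorer, the toy graph, and all function names (`gnn_encode`, `mlp_encode`, `row_wise_batch`) are assumptions chosen for illustration. In particular, the abstract does not spell out the row-wise sampling procedure; the sketch assumes it means sampling a few head nodes (rows of the adjacency matrix) and keeping all of their edges, so that the costly GNN encoder runs only once per sampled head.

```python
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def relu(v):
    return [max(0.0, a) for a in v]

def mlp_encode(x, W):
    """Tail encoder (assumed form): one-layer MLP on the node's own features."""
    return relu(matvec(W, x))

def gnn_encode(node, adj, feats, W):
    """Head encoder (assumed form): mean over the node and its neighbors, then a linear layer."""
    group = [node] + adj[node]
    dim = len(feats[node])
    mean = [sum(feats[n][d] for n in group) / len(group) for d in range(dim)]
    return relu(matvec(W, mean))

def score(h, t):
    """Dot-product link score between a head and a tail embedding."""
    return sum(a * b for a, b in zip(h, t))

def row_wise_batch(adj, num_rows, rng):
    """Assumed row-wise sampling: pick head nodes, keep every edge in their rows."""
    heads = rng.sample(sorted(adj), num_rows)
    return [(h, t) for h in heads for t in adj[h]]

rng = random.Random(0)
# Hypothetical toy graph: adjacency lists and 3-dimensional node features.
adj = {0: [1, 2], 1: [0], 2: [0, 3], 3: [2]}
feats = {n: [rng.random() for _ in range(3)] for n in adj}
W_gnn = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(2)]
W_mlp = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(2)]

batch = row_wise_batch(adj, num_rows=2, rng=rng)
# The GNN runs once per distinct head; every tail only needs the cheap MLP.
head_emb = {h: gnn_encode(h, adj, feats, W_gnn) for h in {h for h, _ in batch}}
scores = [score(head_emb[h], mlp_encode(feats[t], W_mlp)) for h, t in batch]
```

Under these assumptions, the number of neighborhood aggregations per mini-batch grows with the number of sampled rows rather than with the number of edges, which is one plausible reading of why the abstract calls row-wise sampling necessary for the speedup.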
