Assessing and Accelerating Coverage in Deep Reinforcement Learning


Dec 01, 2020

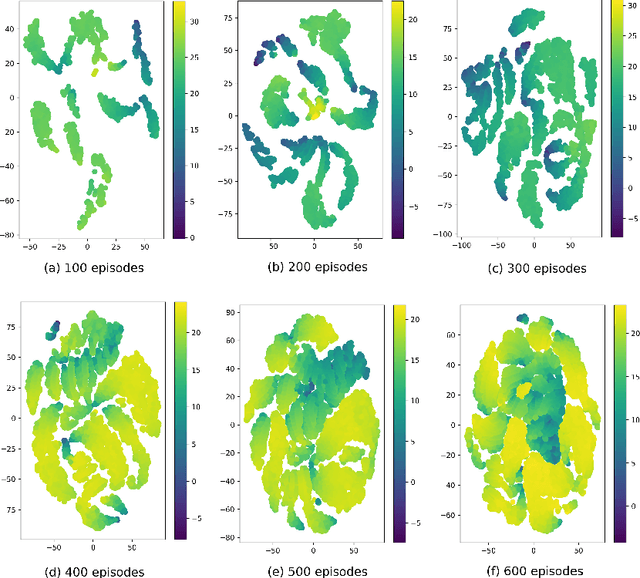

Current deep reinforcement learning (DRL) algorithms rely on randomness in simulation environments and assume that it yields complete coverage of the state space. However, particularly in high dimensions, relying on randomness may leave gaps in the coverage of the trained DRL neural network model, which in turn may lead to drastic and often fatal failures in real-world situations. To the best of our knowledge, the assessment of coverage for DRL is lacking in the current research literature. This paper therefore proposes a novel measure, Approximate Pseudo-Coverage (APC), for assessing coverage in DRL applications. We propose to calculate APC by projecting the high-dimensional state space onto a lower-dimensional manifold and quantifying the occupied space. Furthermore, we utilize an exploration-exploitation strategy for coverage maximization using Rapidly-Exploring Random Tree (RRT). The efficacy of the assessment and of the acceleration of coverage is demonstrated on standard tasks such as Cartpole and highway-env.
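
The abstract describes APC only at a high level (project the visited states to a lower dimension, then quantify the occupied space), so the following is a minimal sketch of that idea under stated assumptions, not the paper's actual method. It assumes a linear PCA projection as a stand-in for the manifold projection, and a uniform grid over the bounding box of the projected points as the way to quantify occupancy. The function name `approximate_pseudo_coverage` and the parameters `n_components` and `bins` are hypothetical.

```python
import numpy as np


def approximate_pseudo_coverage(states, n_components=2, bins=10):
    """Fraction of grid cells occupied after a linear (PCA) projection.

    Assumptions (not from the paper): PCA replaces the manifold projection,
    and occupancy is measured on a uniform grid spanning the bounding box
    of the projected points.
    """
    states = np.asarray(states, dtype=float)
    centered = states - states.mean(axis=0)

    # PCA via SVD: the rows of vt are the principal directions.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    projected = centered @ vt[:n_components].T

    # Rescale each projected axis to [0, 1] using the observed bounds.
    low = projected.min(axis=0)
    span = projected.max(axis=0) - low
    span[span == 0] = 1.0  # avoid division by zero on degenerate axes
    unit = (projected - low) / span

    # Convert to integer cell indices; clip so 1.0 falls in the last bin.
    cells = np.minimum((unit * bins).astype(int), bins - 1)
    occupied = len(np.unique(cells, axis=0))
    return occupied / bins ** n_components


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical 4-dimensional states, e.g. Cartpole-like observations.
    spread_out = rng.uniform(-1.0, 1.0, size=(5000, 4))
    clustered = np.vstack([
        rng.normal(0.0, 0.01, size=(2500, 4)),
        rng.normal(1.0, 0.01, size=(2500, 4)),
    ])
    print("spread-out states:", approximate_pseudo_coverage(spread_out))
    print("clustered states: ", approximate_pseudo_coverage(clustered))
```

Because the grid is fitted to the visited states, this sketch measures how evenly they fill their own bounding box rather than the true reachable state space. A fixed, environment-specific set of bounds would be needed to compare coverage across training runs.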
