Approximation Capabilities of Neural Networks using Morphological Perceptrons and Generalizations

Paper and Code

Jul 16, 2022

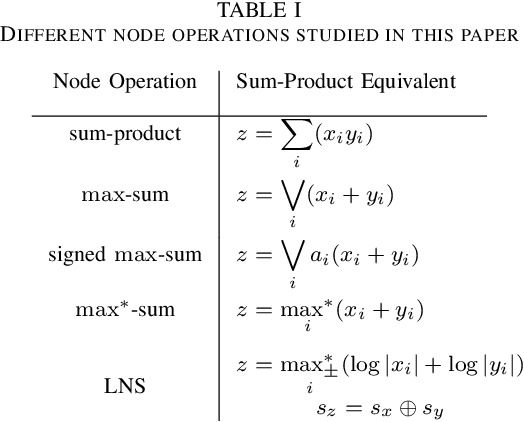

Standard artificial neural networks (ANNs) use sum-product or multiply-accumulate node operations with a memoryless nonlinear activation. These neural networks are known to have universal function approximation capabilities. Previously proposed morphological perceptrons use max-sum, in place of sum-product, node processing and have promising properties for circuit implementations. In this paper we show that these max-sum ANNs do not have universal approximation capabilities. Furthermore, we consider proposed signed-max-sum and max-star-sum generalizations of morphological ANNs and show that these variants also do not have universal approximation capabilities. We contrast these variations to log-number system (LNS) implementations which also avoid multiplications, but do exhibit universal approximation capabilities.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge