Analytic Characterization of the Hessian in Shallow ReLU Models: A Tale of Symmetry


Aug 04, 2020

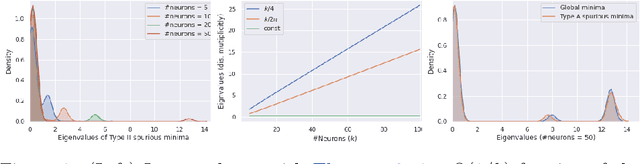

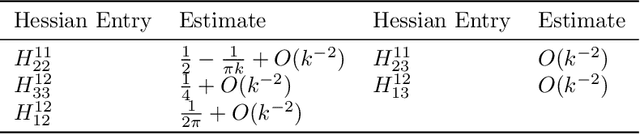

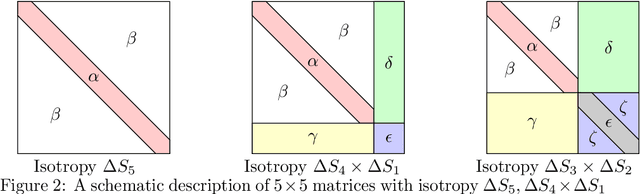

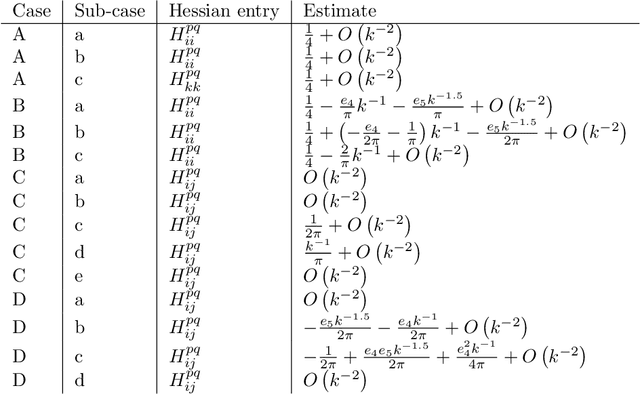

We consider the optimization problem associated with fitting two-layer ReLU networks with $k$ neurons. We leverage the rich symmetry structure of this problem to analytically characterize the Hessian and its spectral density at various families of spurious local minima. In particular, we prove that for standard $d$-dimensional Gaussian inputs with $d\ge k$: (a) of the $dk$ eigenvalues corresponding to the weights of the first layer, $dk - O(d)$ concentrate near zero, and (b) $\Omega(d)$ of the remaining eigenvalues grow linearly with $k$. Although this phenomenon of an extremely skewed spectrum has been observed many times before, to the best of our knowledge this is the first time it has been established rigorously. Our analytic approach uses techniques, new to the field, from symmetry breaking and representation theory, and carries important implications for our ability to argue about statistical generalization through local curvature.
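As a rough numerical illustration of what "the Hessian and its spectrum" refers to here, the sketch below estimates a $dk \times dk$ first-layer Hessian by Monte Carlo and computes its eigenvalues. It is not the paper's analytic method and does not reproduce its results at spurious minima. It assumes a squared loss against a target network of the same architecture, second-layer weights fixed to one, and evaluation at the global minimizer where the student weights equal the target weights (taken to be the identity). At that point the residual vanishes, so the Hessian reduces to the expected outer product of per-sample gradients. The function name `gauss_newton_hessian` and all parameter values are hypothetical choices made for this example.

```python
import numpy as np

def gauss_newton_hessian(W, X):
    """Monte Carlo estimate of E[g(x) g(x)^T], where g(x) is the gradient of
    sum_i relu(w_i . x) with respect to the flattened first-layer weights W.

    Assumption: this equals the loss Hessian only where the residual is zero
    (e.g. student weights equal to target weights)."""
    n, d = X.shape
    k = W.shape[0]
    # ReLU activation pattern: 1 where neuron i is active on sample x.
    active = (X @ W.T > 0).astype(X.dtype)                 # shape (n, k)
    # Per-sample gradient w.r.t. w_i is 1[w_i . x > 0] * x; stack over neurons.
    G = (active[:, :, None] * X[:, None, :]).reshape(n, k * d)
    return G.T @ G / n

rng = np.random.default_rng(0)
d = k = 6                                  # illustrative sizes with d >= k
W = np.eye(k, d)                           # assumed target/student weights
X = rng.standard_normal((20000, d))        # standard Gaussian inputs
H = gauss_newton_hessian(W, X)
eigvals = np.linalg.eigvalsh(H)            # ascending eigenvalues of symmetric H
print(eigvals)
```

Increasing `d` and `k` and plotting a histogram of `eigvals` gives an empirical view of how the spectrum is distributed at this particular point. Spurious minima would require the full Hessian, including the residual-dependent term that this sketch omits.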
