Alternative Function Approximation Parameterizations for Solving Games: An Analysis of $f$-Regression Counterfactual Regret Minimization


Dec 06, 2019

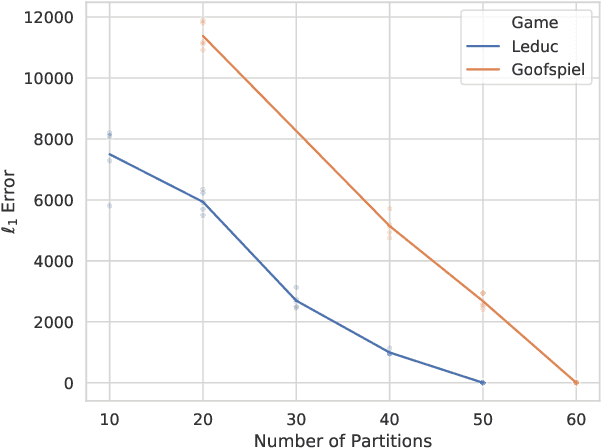

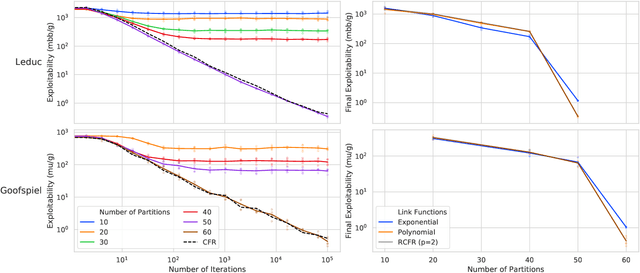

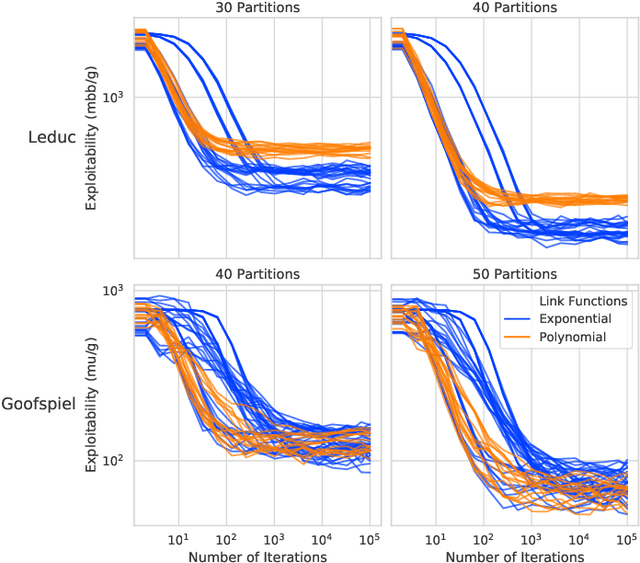

Function approximation is a powerful approach for structuring large decision problems that has facilitated great achievements in reinforcement learning and game playing. Regression counterfactual regret minimization (RCFR) is a simple and flexible algorithm for approximately solving imperfect information games with policies parameterized by a normalized rectified linear unit (ReLU). In contrast, the softmax parameterization is standard in reinforcement learning and, in the tabular case, has a regret bound with a better dependence on the number of actions. We derive approximation error-aware regret bounds for $(\Phi, f)$-regret matching, which applies to a general class of link functions and regret objectives. These bounds recover a tighter bound for RCFR and provide a theoretical justification for RCFR implementations with alternative policy parameterizations ($f$-RCFR), including softmax. We provide exploitability bounds for $f$-RCFR with the polynomial and exponential link functions in zero-sum imperfect information games, and examine empirically how the link function interacts with the severity of the approximation to determine exploitability in practice. Although a ReLU-parameterized policy is typically the best choice, a softmax parameterization can perform as well or better in settings that require aggressive approximation.
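To make the two parameterizations concrete, the following is a minimal sketch of how a policy could be read off a vector of estimated regrets through a link function $f$. It is an illustration, not the paper's implementation: the function names, the exponent `p`, the `temperature` parameter, and the example regret values are all assumptions chosen for this sketch. The polynomial link with `p = 2` is taken here to correspond to the normalized ReLU, and the exponential link to softmax.

```python
import math


def polynomial_link(x, p=2.0):
    """Hypothetical polynomial link: max(x, 0) ** (p - 1), assuming p > 1.

    With p = 2 this is the plain ReLU.
    """
    return max(x, 0.0) ** (p - 1.0)


def exponential_link(x, temperature=1.0):
    """Hypothetical exponential link: exp(x / temperature)."""
    return math.exp(x / temperature)


def link_policy(regrets, link):
    """Normalize link(regret) over actions to obtain a policy.

    Falls back to the uniform policy when every weight is zero, which
    happens with the ReLU link when no regret estimate is positive.
    """
    weights = [link(r) for r in regrets]
    total = sum(weights)
    if total <= 0.0:
        return [1.0 / len(regrets)] * len(regrets)
    return [w / total for w in weights]


def softmax_policy(regrets, temperature=1.0):
    """Softmax policy; subtracting the max only improves numerical stability."""
    shift = max(regrets)
    return link_policy(
        [r - shift for r in regrets],
        lambda x: exponential_link(x, temperature),
    )


# Made-up regret estimates for three actions. In RCFR these would be
# the outputs of a learned regressor rather than fixed numbers.
estimated_regrets = [2.0, -1.0, 1.0]
relu_policy = link_policy(estimated_regrets, polynomial_link)
soft_policy = softmax_policy(estimated_regrets)
```

In this sketch the ReLU policy assigns zero probability to the action with a negative regret estimate, while the softmax policy keeps every action's probability strictly positive. That difference is one way the choice of link function could interact with approximation error.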
