Agnostic Sample Compression for Linear Regression

Oct 03, 2018

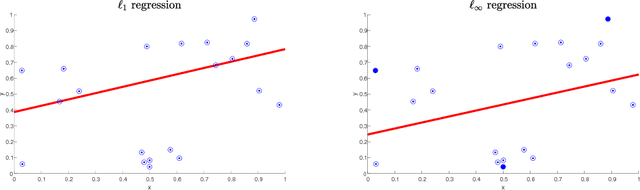

We obtain the first positive results for bounded sample compression in the agnostic regression setting. We show that for $p \in \{1, \infty\}$, agnostic linear regression with $\ell_p$ loss admits a bounded sample compression scheme. Specifically, we exhibit efficient sample compression schemes for agnostic linear regression in $\mathbb{R}^d$ of size $d+1$ under the $\ell_1$ loss and size $d+2$ under the $\ell_\infty$ loss. We further show that for every other $\ell_p$ loss ($1 < p < \infty$), there does not exist an agnostic compression scheme of bounded size. This refines and generalizes a negative result of David, Moran, and Yehudayoff (2016) for the $\ell_2$ loss. We close by posing a general open question: for agnostic regression with $\ell_1$ loss, does every function class admit a compression scheme of size equal to its pseudo-dimension? This question generalizes Warmuth's classic sample compression conjecture for realizable-case classification (Warmuth, 2003).
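
To make the size-$(d+1)$ claim for the $\ell_1$ loss concrete, here is a minimal sketch for $d = 1$, where the compression set has two points. It is not the paper's construction. It relies on the standard linear-programming fact that least-absolute-deviations regression has an optimal line passing through at least two sample points (assuming at least two distinct $x$ values), and it finds that pair by brute force rather than efficiently. The function names `compress` and `reconstruct` and the sample data are hypothetical, chosen for illustration.

```python
from itertools import combinations


def l1_loss(points, slope, intercept):
    """Sum of absolute residuals of the line y = slope * x + intercept."""
    return sum(abs(y - (slope * x + intercept)) for x, y in points)


def line_through(p, q):
    """Slope and intercept of the line through two points with distinct x."""
    (x1, y1), (x2, y2) = p, q
    slope = (y2 - y1) / (x2 - x1)
    return slope, y1 - slope * x1


def compress(points):
    """Keep the pair of sample points whose interpolating line has the
    smallest l1 loss on the full sample (brute force over all pairs)."""
    best = None
    for p, q in combinations(points, 2):
        if p[0] == q[0]:
            continue  # a vertical pair does not define a regression line
        loss = l1_loss(points, *line_through(p, q))
        if best is None or loss < best[0]:
            best = (loss, (p, q))
    if best is None:
        raise ValueError("need two points with distinct x values")
    return best[1]


def reconstruct(pair):
    """Recover the hypothesis from the compression set alone."""
    return line_through(*pair)


# Illustrative (made-up) noisy sample with one outlier.
sample = [(0.0, 0.1), (1.0, 0.9), (2.0, 2.2), (3.0, 2.8), (4.0, 9.0)]
kept = compress(sample)
slope, intercept = reconstruct(kept)
```

The reconstruction step sees only the two retained points, yet the line it returns attains the minimum $\ell_1$ loss over all lines on the full sample, which is the sense in which the scheme is agnostic: no line needs to fit the data exactly.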
