A Uniform Framework for Anomaly Detection in Deep Neural Networks


Oct 06, 2021

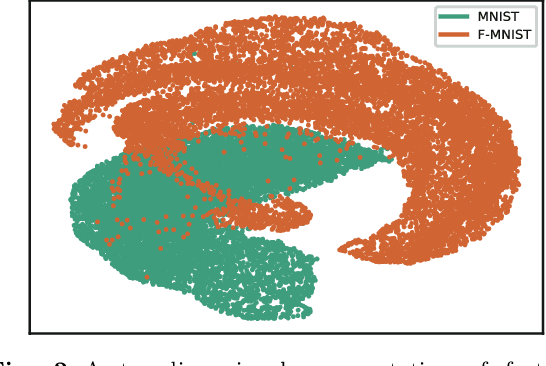

Deep neural networks (DNNs) can achieve high performance when applied to In-Distribution (ID) data, which come from the same distribution as the training set. When presented with anomalous inputs that do not come from the ID, the outputs of a DNN should be regarded as meaningless. However, modern DNNs often assign anomalous inputs to an ID class with high confidence, which is dangerous and misleading. In this work, we consider three classes of anomalous inputs: (1) natural inputs from a distribution different from the one the DNN was trained on, known as Out-of-Distribution (OOD) samples; (2) crafted inputs generated from ID data by attackers, often known as adversarial (AD) samples; and (3) noise (NS) samples generated from meaningless data. We propose a framework that aims to detect all of these anomalies for a pre-trained DNN. Unlike some existing works, our method does not require preprocessing of input data, nor does it depend on any known OOD set or adversarial attack algorithm. Through extensive experiments over a variety of DNN models, we show that in most cases our method outperforms state-of-the-art anomaly detection methods in identifying all three classes of anomalies.
