A Spiking Neural Network Structure Implementing Reinforcement Learning

Paper and Code

Apr 09, 2022

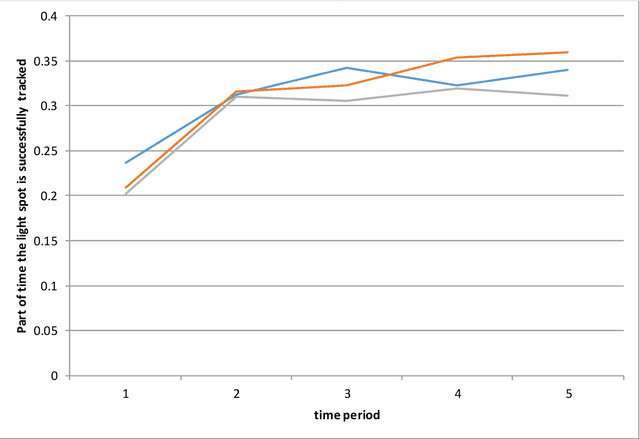

At present, implementation of learning mechanisms in spiking neural networks (SNN) cannot be considered as a solved scientific problem despite plenty of SNN learning algorithms proposed. It is also true for SNN implementation of reinforcement learning (RL), while RL is especially important for SNNs because of its close relationship to the domains most promising from the viewpoint of SNN application such as robotics. In the present paper, I describe an SNN structure which, seemingly, can be used in wide range of RL tasks. The distinctive feature of my approach is usage of only the spike forms of all signals involved - sensory input streams, output signals sent to actuators and reward/punishment signals. Besides that, selecting the neuron/plasticity models, I was guided by the requirement that they should be easily implemented on modern neurochips. The SNN structure considered in the paper includes spiking neurons described by a generalization of the LIFAT (leaky integrate-and-fire neuron with adaptive threshold) model and a simple spike timing dependent synaptic plasticity model (a generalization of dopamine-modulated plasticity). My concept is based on very general assumptions about RL task characteristics and has no visible limitations on its applicability. To test it, I selected a simple but non-trivial task of training the network to keep a chaotically moving light spot in the view field of an emulated DVS camera. Successful solution of this RL problem by the SNN described can be considered as evidence in favor of efficiency of my approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge