A probabilistic generative model for semi-supervised training of coarse-grained surrogates and enforcing physical constraints through virtual observables

Paper and Code

Jun 02, 2020

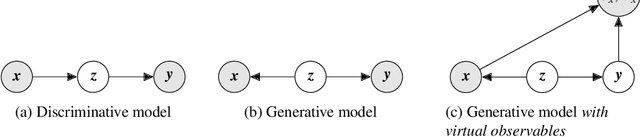

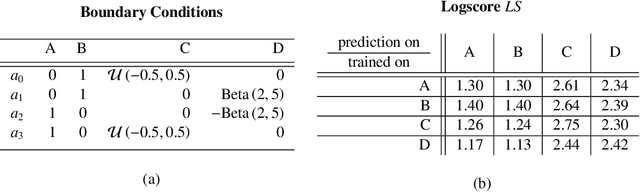

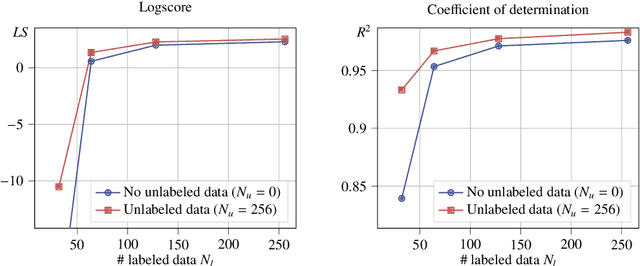

The data-centric construction of inexpensive surrogates for fine-grained physical models has been at the forefront of computational physics due to its significant utility in many-query tasks such as uncertainty quantification. Recent efforts have combined enabling technologies from machine learning (e.g., deep neural networks) with simulation data. While such strategies have shown promise even in higher-dimensional problems, they generally require large amounts of training data, even though the construction of surrogates is by definition a Small Data problem. Rather than employing data-based loss functions, it has been proposed to make use of the governing equations (in the simplest case, at collocation points) in order to imbue the training of otherwise black-box-like interpolators with domain knowledge. The present paper provides a flexible, probabilistic framework that accounts for physical structure and information both in the training objectives and in the surrogate model itself. We advocate a probabilistic (Bayesian) model in which equalities that are available from the physics (e.g., residuals, conservation laws) can be introduced as virtual observables and can provide additional information through the likelihood. We further advocate a generative model, i.e., one that attempts to learn the joint density of inputs and outputs, which is capable of making use of unlabeled data (i.e., only inputs) in a semi-supervised fashion in order to promote the discovery of lower-dimensional embeddings that are nevertheless predictive of the fine-grained model's output.
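To make the idea of a virtual observable concrete, the following is a minimal sketch and not the paper's implementation. It assumes a Gaussian likelihood for "observing" a zero residual, an illustrative 1D equation -y'' = f discretized by finite differences, and a hypothetical tolerance `sigma`; the function names `residual` and `virtual_log_likelihood` are likewise chosen here for illustration only.

```python
import numpy as np

def residual(y, f, h):
    """Finite-difference residual of -y'' = f at interior grid points.

    The equation itself is an illustrative assumption, not taken from the paper.
    """
    return -(y[:-2] - 2.0 * y[1:-1] + y[2:]) / h**2 - f[1:-1]

def virtual_log_likelihood(y, f, h, sigma=1e-2):
    """Gaussian log-likelihood of the virtual observation 'residual == 0'.

    sigma is a hypothetical tolerance controlling how strictly the
    physical constraint is enforced.
    """
    r = residual(y, f, h)
    n = r.size
    return (-0.5 * np.sum(r**2) / sigma**2
            - n * np.log(sigma)
            - 0.5 * n * np.log(2.0 * np.pi))

# Uniform grid on [0, 1] with a constant source term f = 1.
x = np.linspace(0.0, 1.0, 51)
h = x[1] - x[0]
f = np.ones_like(x)

y_exact = 0.5 * x * (1.0 - x)   # satisfies -y'' = 1 with y(0) = y(1) = 0
y_wrong = np.sin(np.pi * x)     # same boundary values, violates the equation

ll_exact = virtual_log_likelihood(y_exact, f, h)
ll_wrong = virtual_log_likelihood(y_wrong, f, h)
print(ll_exact > ll_wrong)
```

Under these assumptions, a candidate output that satisfies the governing equation receives a higher likelihood than one that violates it, so the constraint contributes information to the posterior without requiring any labeled simulation data.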
