A Hybrid Framework for Sequential Data Prediction with End-to-End Optimization

Paper and Code

Mar 25, 2022

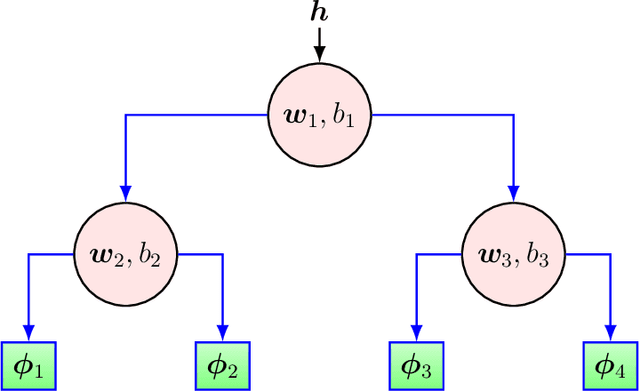

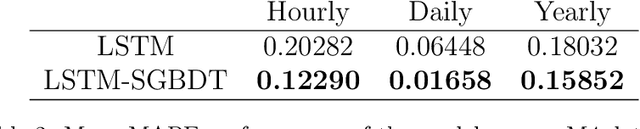

We investigate nonlinear prediction in an online setting and introduce a hybrid model that effectively mitigates, via an end-to-end architecture, the need for hand-designed features and manual model selection issues of conventional nonlinear prediction/regression methods. In particular, we use recursive structures to extract features from sequential signals, while preserving the state information, i.e., the history, and boosted decision trees to produce the final output. The connection is in an end-to-end fashion and we jointly optimize the whole architecture using stochastic gradient descent, for which we also provide the backward pass update equations. In particular, we employ a recurrent neural network (LSTM) for adaptive feature extraction from sequential data and a gradient boosting machinery (soft GBDT) for effective supervised regression. Our framework is generic so that one can use other deep learning architectures for feature extraction (such as RNNs and GRUs) and machine learning algorithms for decision making as long as they are differentiable. We demonstrate the learning behavior of our algorithm on synthetic data and the significant performance improvements over the conventional methods over various real life datasets. Furthermore, we openly share the source code of the proposed method to facilitate further research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge