A Framework For Pruning Deep Neural Networks Using Energy-Based Models


Feb 25, 2021

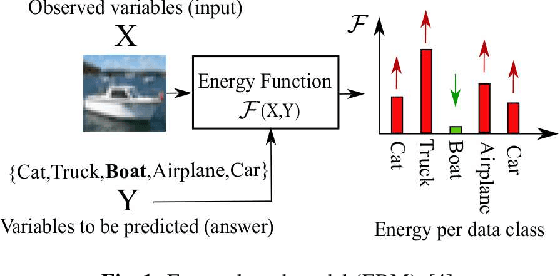

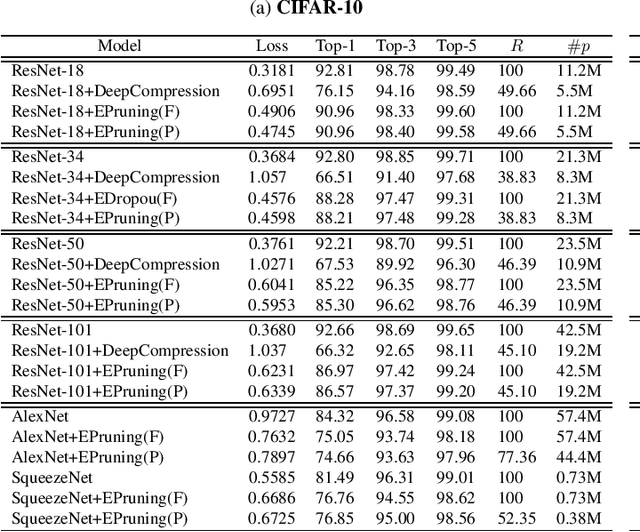

A typical deep neural network (DNN) has a large number of trainable parameters. Choosing a network with the proper capacity is challenging, so in practice a larger network with excess capacity is usually trained. Pruning is an established approach to reducing the number of parameters in a DNN. In this paper, we propose a framework for pruning DNNs based on a population-based global optimization method. The framework can use any pruning objective function. As a case study, we propose a simple but efficient objective function based on the concept of energy-based models. Our experiments with ResNets, AlexNet, and SqueezeNet on the CIFAR-10 and CIFAR-100 datasets show a pruning rate of more than $50\%$ of the trainable parameters, with drops of less than approximately $5\%$ and $1\%$ in Top-1 and Top-5 classification accuracy, respectively.
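To make the idea of a population-based search over pruning candidates concrete, here is a minimal sketch in plain Python. It is not the paper's algorithm: the abstract does not specify the optimizer or the exact energy function, so the mutation-plus-elitist-selection loop, the toy `energy` function, the per-unit `importance` scores, and all parameter names below are illustrative assumptions. Each candidate is a binary mask (1 keeps a unit, 0 prunes it), and a lower energy is treated as better.

```python
import random


def energy(mask, importance, sparsity_weight=0.5):
    """Toy objective (an assumption, not the paper's energy function).

    Sums the importance of pruned units and adds a fixed cost per kept unit,
    so low energy means "few units kept, little importance lost".
    """
    lost = sum(w for m, w in zip(mask, importance) if m == 0)
    kept = sum(mask)
    return lost + sparsity_weight * kept


def mutate(mask, rng, flip_prob=0.1):
    """Flip each bit of the mask independently with probability flip_prob."""
    return [1 - m if rng.random() < flip_prob else m for m in mask]


def prune_search(importance, pop_size=20, generations=50, seed=0):
    """Evolve a population of binary masks and return (best_mask, history).

    history[i] is the lowest energy in the population after generation i.
    """
    rng = random.Random(seed)
    n = len(importance)
    population = [[rng.randint(0, 1) for _ in range(n)] for _ in range(pop_size)]
    population[0] = [1] * n  # include the unpruned network as a candidate
    history = []
    for _ in range(generations):
        offspring = [mutate(m, rng) for m in population]
        # Elitist selection: keep the pop_size lowest-energy masks overall.
        combined = sorted(population + offspring,
                          key=lambda m: energy(m, importance))
        population = combined[:pop_size]
        history.append(energy(population[0], importance))
    return population[0], history


# Hypothetical per-unit importance scores for a six-unit layer.
importance = [0.9, 0.1, 0.8, 0.05, 0.7, 0.2]
best_mask, history = prune_search(importance)
print("best mask:", best_mask, "energy:", history[-1])
```

Because selection is elitist and the unpruned mask is part of the initial population, the best energy never increases across generations and never exceeds that of the unpruned network. In a real setting, the objective would be evaluated on the network itself rather than on fixed importance scores, and any other pruning objective could be swapped in for `energy`.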
