A Deep Neural Networks ensemble workflow from hyperparameter search to inference leveraging GPU clusters


Aug 30, 2022

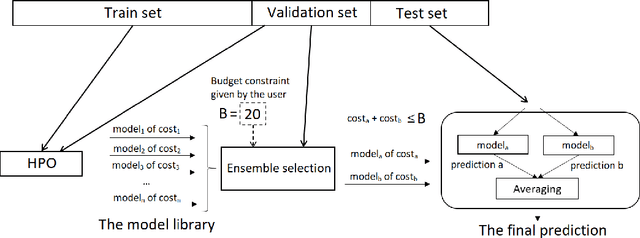

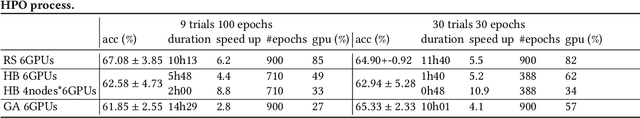

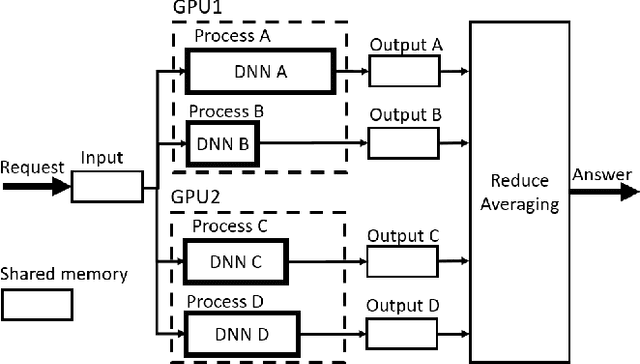

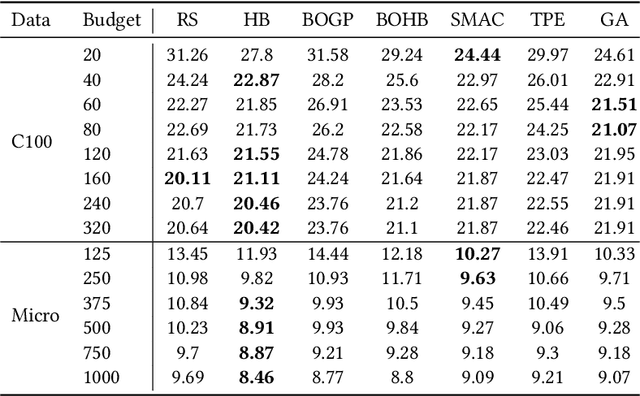

Automated Machine Learning with ensembling (AutoML with ensembling) seeks to automatically build ensembles of Deep Neural Networks (DNNs) that deliver high-quality predictions. Ensembles of DNNs are well known to reduce over-fitting, but they are memory- and time-consuming. An ideal AutoML system would therefore produce, in a single run, several ensembles that offer different trade-offs between accuracy and inference speed. While previous work on AutoML focuses on searching for the single best model to maximize generalization, we instead propose a new AutoML workflow that builds a larger library of accurate and diverse individual models from which ensembles are then constructed. First, our extensive benchmarks show that asynchronous Hyperband is an efficient and robust way to build a large number of diverse models to combine. Second, we propose a new ensemble selection method, based on a multi-objective greedy algorithm, that generates accurate ensembles while controlling their computing cost. Finally, we propose a novel algorithm, based on allocation optimization, that optimizes the inference of the DNN ensemble on a GPU cluster. The resulting AutoML-with-ensembling method shows robust results on two datasets while using GPU clusters efficiently during both the training phase and the inference phase.
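To make the idea of cost-controlled ensemble selection more concrete, the following is a minimal sketch of a greedy selection loop under a cost budget. It is not the authors' algorithm: the function name `greedy_ensemble_selection`, the use of summed class probabilities as the combination rule, and the scalar `budget` standing in for computing cost are all assumptions made for illustration. Running it with several budgets yields a set of ensembles with different accuracy and cost trade-offs.

```python
import numpy as np


def greedy_ensemble_selection(probs, costs, labels, budget):
    """Hypothetical sketch: greedily pick models from a library under a cost budget.

    probs  : array of shape (n_models, n_samples, n_classes), validation probabilities
    costs  : array of shape (n_models,), an assumed per-model inference cost
    labels : array of shape (n_samples,), validation labels
    budget : maximum total cost allowed for the ensemble
    """
    probs = np.asarray(probs, dtype=float)
    costs = np.asarray(costs, dtype=float)
    labels = np.asarray(labels)

    selected = []                       # indices of chosen models
    total_cost = 0.0
    best_acc = 0.0
    summed = np.zeros_like(probs[0])    # running sum of selected models' probabilities

    while True:
        best_candidate = None
        best_candidate_acc = best_acc
        for m in range(probs.shape[0]):
            # Skip models already chosen or that would exceed the budget.
            if m in selected or total_cost + costs[m] > budget:
                continue
            acc = np.mean(np.argmax(summed + probs[m], axis=1) == labels)
            # Keep the candidate only if it strictly improves validation accuracy.
            if acc > best_candidate_acc:
                best_candidate, best_candidate_acc = m, acc
        if best_candidate is None:
            break                       # no affordable model improves the ensemble
        selected.append(best_candidate)
        total_cost += costs[best_candidate]
        summed += probs[best_candidate]
        best_acc = best_candidate_acc

    return selected, float(best_acc), total_cost
```

With a tight budget the loop tends to combine several cheap models; with a loose budget it may pick one expensive but accurate model instead, which is the kind of trade-off the abstract describes.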
