A Comparison of Reinforcement Learning Techniques for Fuzzy Cloud Auto-Scaling

Paper and Code

May 19, 2017

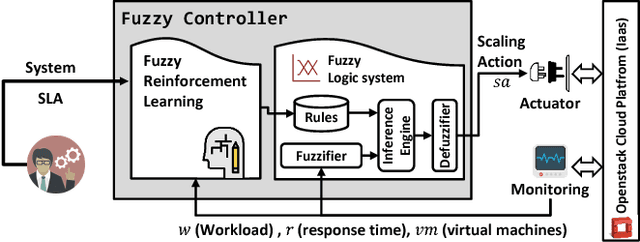

A goal of cloud service management is to design self-adaptable auto-scaler to react to workload fluctuations and changing the resources assigned. The key problem is how and when to add/remove resources in order to meet agreed service-level agreements. Reducing application cost and guaranteeing service-level agreements (SLAs) are two critical factors of dynamic controller design. In this paper, we compare two dynamic learning strategies based on a fuzzy logic system, which learns and modifies fuzzy scaling rules at runtime. A self-adaptive fuzzy logic controller is combined with two reinforcement learning (RL) approaches: (i) Fuzzy SARSA learning (FSL) and (ii) Fuzzy Q-learning (FQL). As an off-policy approach, Q-learning learns independent of the policy currently followed, whereas SARSA as an on-policy always incorporates the actual agent's behavior and leads to faster learning. Both approaches are implemented and compared in their advantages and disadvantages, here in the OpenStack cloud platform. We demonstrate that both auto-scaling approaches can handle various load traffic situations, sudden and periodic, and delivering resources on demand while reducing operating costs and preventing SLA violations. The experimental results demonstrate that FSL and FQL have acceptable performance in terms of adjusted number of virtual machine targeted to optimize SLA compliance and response time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge