A causal model of safety assurance for machine learning

Paper and Code

Jan 14, 2022

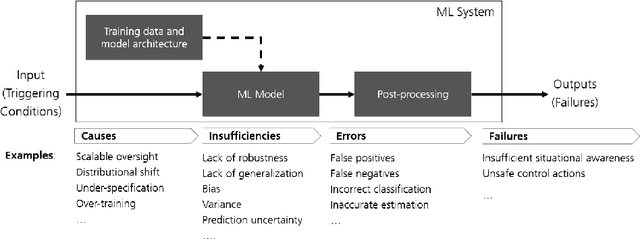

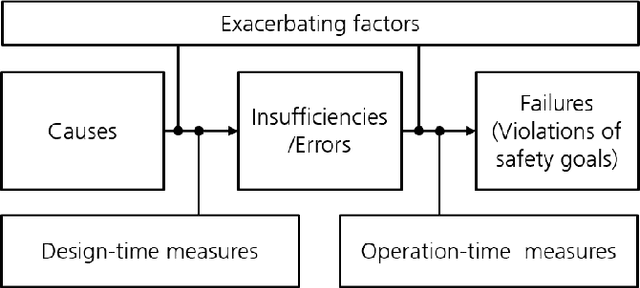

This paper proposes a framework based on a causal model of safety upon which effective safety assurance cases for ML-based applications can be built. In doing so, we build upon established principles of safety engineering as well as previous work on structuring assurance arguments for ML. The paper defines four categories of safety case evidence and a structured analysis approach within which these evidences can be effectively combined. Where appropriate, abstract formalisations of these contributions are used to illustrate the causalities they evaluate, their contributions to the safety argument and desirable properties of the evidences. Based on the proposed framework, progress in this area is re-evaluated and a set of future research directions proposed in order for tangible progress in this field to be made.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge