A breakthrough in Speech emotion recognition using Deep Retinal Convolution Neural Networks

Paper and Code

Jul 12, 2017

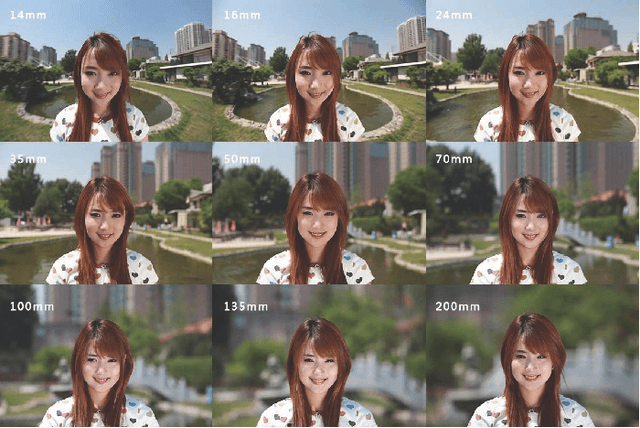

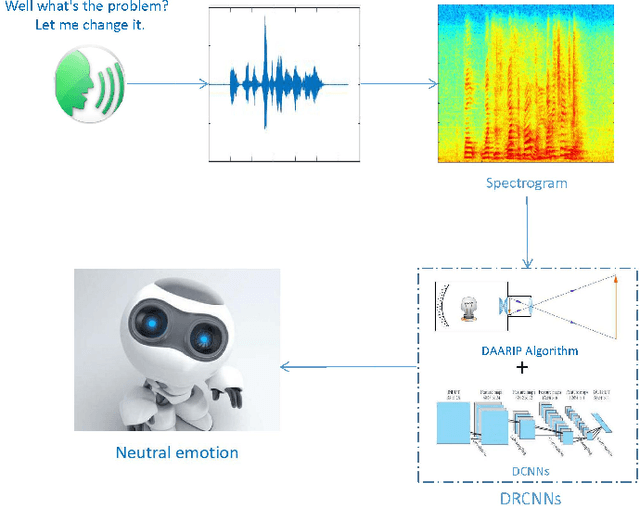

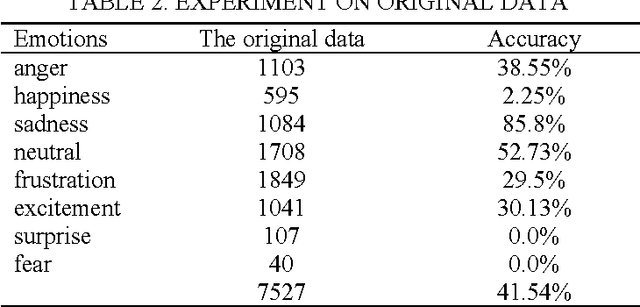

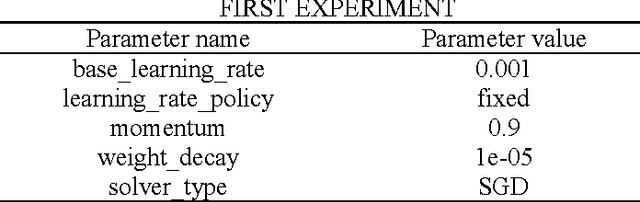

Speech emotion recognition (SER) is to study the formation and change of speaker's emotional state from the speech signal perspective, so as to make the interaction between human and computer more intelligent. SER is a challenging task that has encountered the problem of less training data and low prediction accuracy. Here we propose a data augmentation algorithm based on the imaging principle of the retina and convex lens, to acquire the different sizes of spectrogram and increase the amount of training data by changing the distance between the spectrogram and the convex lens. Meanwhile, with the help of deep learning to get the high-level features, we propose the Deep Retinal Convolution Neural Networks (DRCNNs) for SER and achieve the average accuracy over 99%. The experimental results indicate that DRCNNs outperforms the previous studies in terms of both the number of emotions and the accuracy of recognition. Predictably, our results will dramatically improve human-computer interaction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge