Ziqi Jia

FEVO: Financial Knowledge Expansion and Reasoning Evolution for Large Language Models

Jul 09, 2025

Abstract:Advancements in reasoning for large language models (LLMs) have led to significant performance improvements for LLMs in various fields such as mathematics and programming. However, research applying these advances to the financial domain, where considerable domain-specific knowledge is necessary to complete tasks, remains limited. To address this gap, we introduce FEVO (Financial Evolution), a multi-stage enhancement framework developed to enhance LLM performance in the financial domain. FEVO systematically enhances LLM performance by using continued pre-training (CPT) to expand financial domain knowledge, supervised fine-tuning (SFT) to instill structured, elaborate reasoning patterns, and reinforcement learning (RL) to further integrate the expanded financial domain knowledge with the learned structured reasoning. To ensure effective and efficient training, we leverage frontier reasoning models and rule-based filtering to curate FEVO-Train, a set of high-quality datasets specifically designed for the different post-training phases. Using our framework, we train the FEVO series of models (C32B, S32B, R32B) from Qwen2.5-32B and evaluate them on seven benchmarks to assess financial and general capabilities, with results showing that FEVO-R32B achieves state-of-the-art performance on five financial benchmarks against much larger models as well as specialist models. More significantly, FEVO-R32B demonstrates markedly better performance than FEVO-R32B-0 (trained from Qwen2.5-32B-Instruct using only RL), thus validating the effectiveness of financial domain knowledge expansion and structured, logical reasoning distillation.

Hierarchical-Task-Aware Multi-modal Mixture of Incremental LoRA Experts for Embodied Continual Learning

Jun 05, 2025

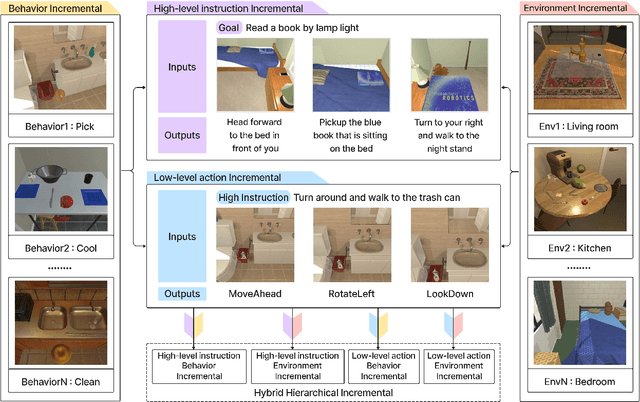

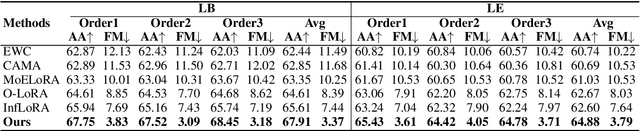

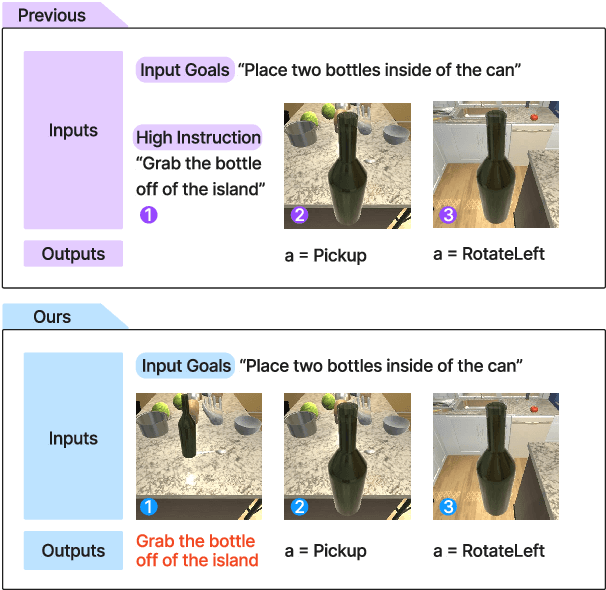

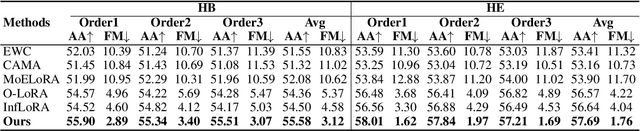

Abstract:Previous continual learning setups for embodied intelligence focused on executing low-level actions based on human commands, neglecting the ability to learn high-level planning and multi-level knowledge. To address these issues, we propose the Hierarchical Embodied Continual Learning Setups (HEC) that divide the agent's continual learning process into two layers: high-level instructions and low-level actions, and define five embodied continual learning sub-setups. Building on these setups, we introduce the Task-aware Mixture of Incremental LoRA Experts (Task-aware MoILE) method. This approach achieves task recognition by clustering visual-text embeddings and uses both a task-level router and a token-level router to select the appropriate LoRA experts. To effectively address the issue of catastrophic forgetting, we apply Singular Value Decomposition (SVD) to the LoRA parameters obtained from prior tasks, preserving key components while orthogonally training the remaining parts. The experimental results show that our method stands out in reducing the forgetting of old tasks compared to other methods, effectively supporting agents in retaining prior knowledge while continuously learning new tasks.
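
The SVD step described in the abstract above can be illustrated with a minimal sketch. It is not the authors' implementation: the matrix shapes, the rank `k`, and the helper names `split_lora_update` and `project_out` are assumptions chosen for illustration, and NumPy is assumed to be available. The sketch keeps the top-`k` singular directions of an old-task LoRA update and removes those directions from a new gradient, so that further training is orthogonal to the preserved components.

```python
import numpy as np


def split_lora_update(delta_w: np.ndarray, k: int):
    """Return the top-k left singular vectors and the rank-k part of delta_w."""
    u, s, vt = np.linalg.svd(delta_w, full_matrices=False)
    u_k = u[:, :k]                             # key directions to preserve
    preserved = u_k @ np.diag(s[:k]) @ vt[:k, :]
    return u_k, preserved


def project_out(grad: np.ndarray, u_k: np.ndarray) -> np.ndarray:
    """Remove the component of grad that lies in the span of u_k's columns."""
    return grad - u_k @ (u_k.T @ grad)


rng = np.random.default_rng(0)
a = rng.normal(size=(8, 2))                    # hypothetical LoRA factor A (old task)
b = rng.normal(size=(2, 6))                    # hypothetical LoRA factor B (old task)
delta_w = a @ b                                # rank-2 weight update from the old task

u_k, preserved = split_lora_update(delta_w, k=2)
grad = rng.normal(size=(8, 6))                 # stand-in for a new-task gradient
safe_grad = project_out(grad, u_k)             # orthogonal to the preserved directions
```

Because `delta_w` has rank 2 here, the rank-2 `preserved` part reconstructs it exactly, and `u_k.T @ safe_grad` is zero up to floating-point error.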

Enhancing Multi-Agent Systems via Reinforcement Learning with LLM-based Planner and Graph-based Policy

Mar 13, 2025

Abstract:Multi-agent systems (MAS) have shown great potential in executing complex tasks, but coordination and safety remain significant challenges. Multi-Agent Reinforcement Learning (MARL) offers a promising framework for agent collaboration, but it faces difficulties in handling complex tasks and designing reward functions. The introduction of Large Language Models (LLMs) has brought stronger reasoning and cognitive abilities to MAS, but existing LLM-based systems struggle to respond quickly and accurately in dynamic environments. To address these challenges, we propose LLM-based Graph Collaboration MARL (LGC-MARL), a framework that efficiently combines LLMs and MARL. This framework decomposes complex tasks into executable subtasks and achieves efficient collaboration among multiple agents through graph-based coordination. Specifically, LGC-MARL consists of two main components: an LLM planner and a graph-based collaboration meta policy. The LLM planner transforms complex task instructions into a series of executable subtasks, evaluates the rationality of these subtasks using a critic model, and generates an action dependency graph. The graph-based collaboration meta policy facilitates communication and collaboration among agents based on the action dependency graph, and adapts to new task environments through meta-learning. Experimental results on the AI2-THOR simulation platform demonstrate the superior performance and scalability of LGC-MARL in completing various complex tasks.
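
To make the idea of an action dependency graph concrete, the following is a small sketch under stated assumptions. The subtask names and the `execution_waves` helper are hypothetical and are not taken from the paper. The sketch uses Python's standard `graphlib.TopologicalSorter` to group subtasks into waves that could run in parallel once their prerequisites are done.

```python
from graphlib import TopologicalSorter

# Hypothetical subtasks: each key depends on the subtasks in its value set.
dependencies = {
    "open_fridge": set(),
    "pick_up_apple": {"open_fridge"},
    "close_fridge": {"pick_up_apple"},
    "turn_on_faucet": set(),
    "wash_apple": {"pick_up_apple", "turn_on_faucet"},
}


def execution_waves(deps):
    """Group subtasks into waves whose prerequisites are all complete."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()                      # raises CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)               # mark the whole wave as finished
    return waves


waves = execution_waves(dependencies)
for index, wave in enumerate(waves, start=1):
    print(f"wave {index}: {wave}")
```

Subtasks within one wave have no dependencies on each other, so in this toy setting they could be assigned to different agents at the same time.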

Incremental Label Distribution Learning with Scalable Graph Convolutional Networks

Nov 20, 2024

Abstract:Label Distribution Learning (LDL) is an effective approach for handling label ambiguity, as it can analyze all labels at once and indicate the extent to which each label describes a given sample. Most existing LDL methods consider the number of labels to be static. However, in various LDL-specific contexts (e.g., disease diagnosis), the label count grows over time (such as the discovery of new diseases), a factor that existing methods overlook. Learning samples with new labels directly means learning all labels at once, thus wasting more time on the old labels and even risking overfitting the old labels. At the same time, learning new labels by the LDL model means reconstructing the inter-label relationships. How to make use of the relationships already constructed is also a crucial challenge. To tackle these challenges, we introduce Incremental Label Distribution Learning (ILDL), analyze its key issues regarding training samples and inter-label relationships, and propose Scalable Graph Label Distribution Learning (SGLDL) as a practical framework for implementing ILDL. Specifically, in SGLDL, we develop a New-label-aware Gradient Compensation Loss to speed up the learning of new labels and represent inter-label relationships as a graph to reduce the time required to reconstruct inter-label relationships. Experimental results on the classical LDL dataset show the clear advantages of the proposed algorithm and illustrate the importance of a dedicated design for the ILDL problem.
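
As background for the LDL setting described above, here is a minimal sketch of what a label distribution is and how a prediction can be scored against it. It uses KL divergence, a common choice in LDL work, and is not the New-label-aware Gradient Compensation Loss from the paper. The example scores and the target distribution are made up for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into a label distribution that sums to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]   # subtract max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(target, predicted, eps=1e-12):
    """KL(target || predicted); zero when the two distributions match."""
    return sum(
        t * math.log((t + eps) / (p + eps))
        for t, p in zip(target, predicted)
        if t > 0
    )


# Hypothetical sample: the degree to which each of three labels describes it.
target = [0.6, 0.3, 0.1]
predicted = softmax([2.0, 1.0, 0.0])           # made-up model scores
loss = kl_divergence(target, predicted)
```

In an incremental setting, a new label would add an entry to both `target` and `predicted`, which is why the distribution over the old labels has to be renormalized or relearned.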
