Xuefeng Wei

CArtBench: Evaluating Vision-Language Models on Chinese Art Understanding, Interpretation, and Authenticity

Apr 13, 2026

Abstract: We introduce CARTBENCH, a museum-grounded benchmark for evaluating vision-language models (VLMs) on Chinese artworks beyond short-form recognition and QA. CARTBENCH comprises four subtasks: CURATORQA for evidence-grounded recognition and reasoning, CATALOGCAPTION for structured four-section expert-style appreciation, REINTERPRET for defensible reinterpretation with expert ratings, and CONNOISSEURPAIRS for diagnostic authenticity discrimination under visually similar confounds. CARTBENCH is built by aligning image-bearing Palace Museum objects from Wikidata with authoritative catalog pages, spanning five art categories across multiple dynasties. Across nine representative VLMs, we find that high overall CURATORQA accuracy can mask sharp drops on hard evidence linking and style-to-period inference; long-form appreciation remains far from expert references; and authenticity-oriented diagnostic discrimination stays near chance, underscoring the difficulty of connoisseur-level reasoning for current models.

FLDNet: A Foreground-Aware Network for Polyp Segmentation Leveraging Long-Distance Dependencies

Sep 12, 2023

Abstract: Given the close association between colorectal cancer and polyps, the diagnosis and identification of colorectal polyps play a critical role in the detection and surgical intervention of colorectal cancer. In this context, the automatic detection and segmentation of polyps from colonoscopy images has emerged as a significant problem that has attracted broad attention. Current polyp segmentation techniques face several challenges. First, polyps vary in size, texture, color, and pattern. Second, the boundaries between polyps and mucosa are usually blurred. Third, existing studies have focused on learning the local features of polyps while ignoring the long-range dependencies among features, as well as the local and global contextual information of the combined features. To address these challenges, we propose FLDNet (Foreground-Long-Distance Network), a Transformer-based neural network that captures long-distance dependencies for accurate polyp segmentation. Specifically, the proposed model consists of three main modules: a pyramid-based Transformer encoder, a local context module, and a foreground-aware module. Multilevel features with long-distance dependency information are first captured by the pyramid-based Transformer encoder. On the high-level features, the local context module obtains the local characteristics related to the polyps by constructing different local context information. The coarse map obtained by decoding the reconstructed highest-level features guides the fusion of the high-level features in the foreground-aware module to achieve foreground enhancement of the polyps. FLDNet was evaluated using seven metrics on common datasets and demonstrated superiority over state-of-the-art methods on widely used evaluation measures.

Feature Aggregation Network for Building Extraction from High-resolution Remote Sensing Images

Sep 12, 2023

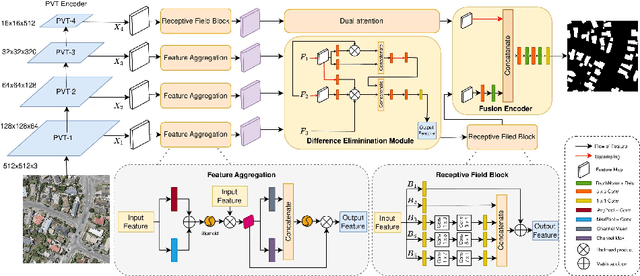

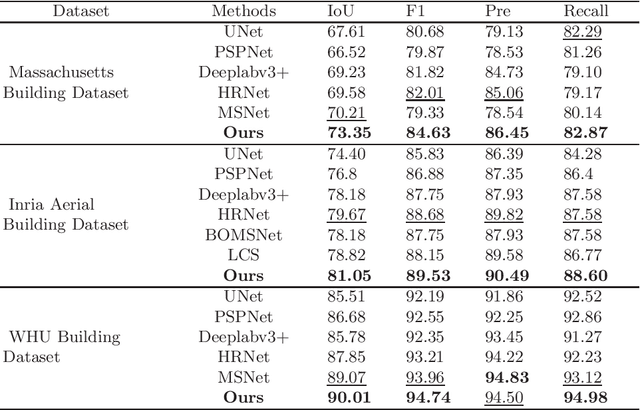

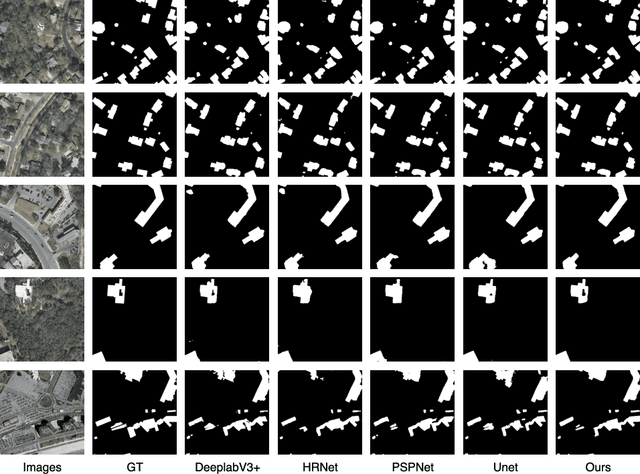

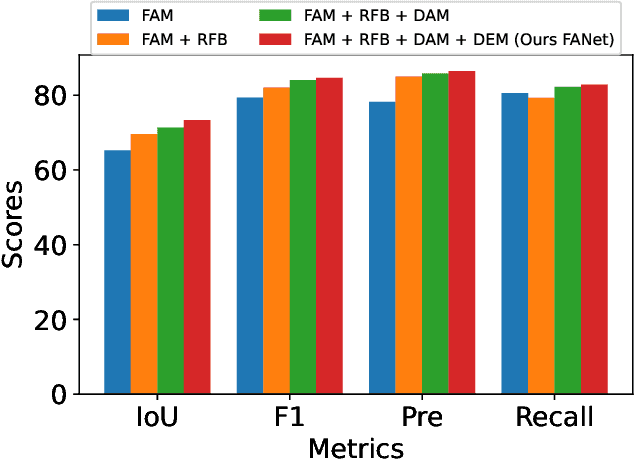

Abstract: The rapid advancement in high-resolution satellite remote sensing data acquisition, particularly data achieving submeter precision, has uncovered the potential for detailed extraction of surface architectural features. However, the diversity and complexity of surface distributions frequently lead current methods to focus exclusively on localized information of surface features. This often results in significant intraclass variability, both in boundary recognition and between buildings. Therefore, the fine-grained extraction of surface features from high-resolution satellite imagery has emerged as a critical challenge in remote sensing image processing. In this work, we propose the Feature Aggregation Network (FANet), which concentrates on extracting both global and local features, thereby enabling the refined extraction of landmark buildings from high-resolution satellite remote sensing imagery. The Pyramid Vision Transformer captures the global features, which are subsequently refined by the Feature Aggregation Module and merged into a cohesive representation by the Difference Elimination Module. In addition, to ensure a comprehensive feature map, we incorporate the Receptive Field Block and Dual Attention Module, expanding the receptive field and intensifying attention across spatial and channel dimensions. Extensive experiments on multiple datasets have validated the outstanding capability of FANet in extracting features from high-resolution satellite images. This signifies a major breakthrough in the field of remote sensing image processing. We will release our code soon.
