Tyler Ferguson

Scalable Derivative-Free Optimization for Nonlinear Least-Squares Problems

Aug 01, 2020

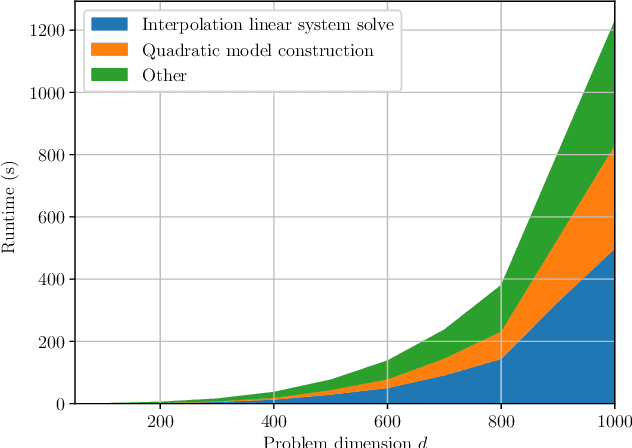

Abstract: Derivative-free optimization (DFO), also called zeroth-order optimization, has gained recent attention for its ability to solve problems in a variety of application areas, including machine learning, particularly those involving objectives that are stochastic and/or expensive to compute. In this work, we develop a novel model-based DFO method for solving nonlinear least-squares problems. We improve on state-of-the-art DFO by performing dimensionality reduction in the observational space using sketching methods, avoiding the construction of a full local model. Our approach has a per-iteration computational cost that is linear in the problem dimension in a big data regime, and numerical evidence demonstrates that, compared to existing software, it has dramatically improved runtime performance on overdetermined least-squares problems.
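To illustrate what sketching in the observational space means, the following is a minimal sketch on a linear least-squares problem, not the method developed in the paper. It assumes a dense Gaussian sketching matrix, and the helper name `sketched_lstsq` and the problem sizes are hypothetical choices made for illustration only. The idea is to compress the m residuals of an overdetermined problem down to a much smaller number of random combinations before solving.

```python
import numpy as np

def sketched_lstsq(A, b, sketch_size, rng):
    """Approximately solve min_x ||A x - b||_2 by sketching the rows (observations)."""
    m = A.shape[0]
    # Assumption: dense Gaussian sketch S of shape (sketch_size, m),
    # scaled so that E[S^T S] = I. Other sketches (hashing, sampling) are possible.
    S = rng.standard_normal((sketch_size, m)) / np.sqrt(sketch_size)
    # Solve the much smaller problem min_x ||(S A) x - (S b)||_2.
    x, *_ = np.linalg.lstsq(S @ A, S @ b, rcond=None)
    return x

# Hypothetical overdetermined test problem: m observations, n unknowns, m >> n.
rng = np.random.default_rng(0)
m, n = 5000, 10
A = rng.standard_normal((m, n))
x_true = rng.standard_normal(n)
b = A @ x_true + 0.1 * rng.standard_normal(m)

# Reference solution using all m observations.
x_full, *_ = np.linalg.lstsq(A, b, rcond=None)
# Sketched solution using only 200 random combinations of the observations.
x_sk = sketched_lstsq(A, b, sketch_size=200, rng=rng)

res_full = np.linalg.norm(A @ x_full - b)
res_sk = np.linalg.norm(A @ x_sk - b)
print("residual ratio (sketched / full):", res_sk / res_full)
```

The sketched residual can never be smaller than the full least-squares residual, and for a sketch size well above n it is typically only slightly larger. A dense Gaussian sketch is used here for simplicity; forming `S @ A` this way is not cheap, so this example shows the accuracy side of the idea rather than the per-iteration cost described in the abstract.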

* Fixed author spelling
