Tarun Srivastava

Creating a Large-scale Synthetic Dataset for Human Activity Recognition

Jul 21, 2020

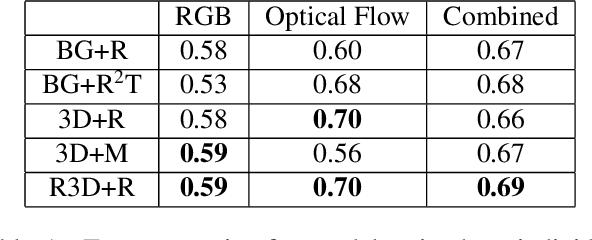

Abstract: Creating and labelling video datasets for training Human Activity Recognition models is an arduous task. In this paper, we approach this problem by using 3D rendering tools to generate a synthetic dataset of videos, and show that a classifier trained on these videos can generalise to real videos. We use five different augmentation techniques to generate the videos, yielding a wide variety of accurately labelled, unique videos. We fine-tune a pre-trained I3D model on our videos and find that it achieves an accuracy of 73% on the HMDB51 dataset over three classes. We also find that augmenting the HMDB training set with our dataset provides a 2% improvement in the performance of the classifier. Finally, we discuss possible extensions to the dataset, including virtual try-on and modelling the motion of people.
