Sheng-Hsuan Lin

Distributive Pre-Training of Generative Modeling Using Matrix-Product States

Jun 26, 2023

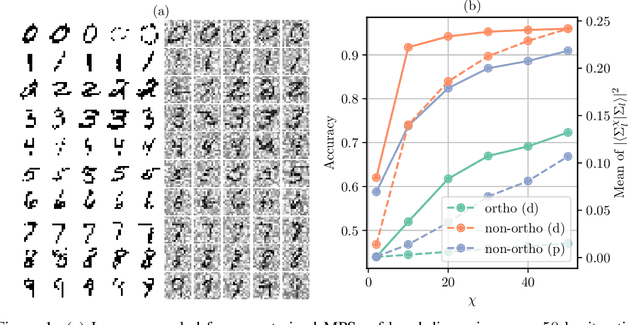

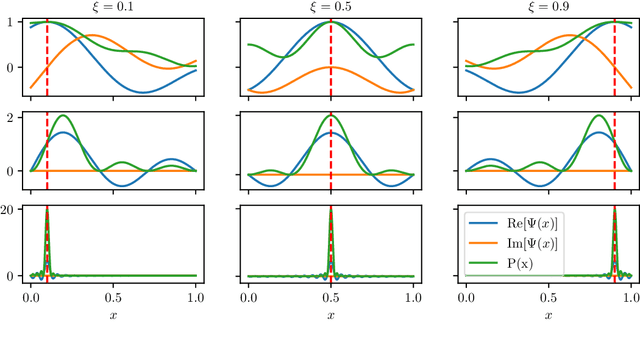

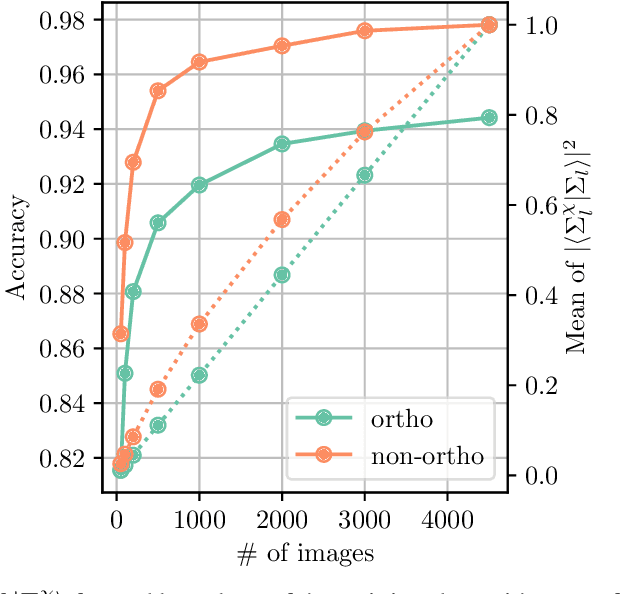

Abstract: Tensor networks have recently found applications in machine learning for both supervised learning and unsupervised learning. The most common approaches for training these models are gradient descent methods. In this work, we consider an alternative training scheme utilizing basic tensor network operations, e.g., summation and compression. The training algorithm is based on compressing the superposition state constructed from all the training data in product state representation. The algorithm could be parallelized easily and only iterates through the dataset once. Hence, it serves as a pre-training algorithm. We benchmark the algorithm on the MNIST dataset and show reasonable results for generating new images and classification tasks. Furthermore, we provide an interpretation of the algorithm as a compressed quantum kernel density estimation for the probability amplitude of input data.
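The sum-then-compress idea in this abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names (`product_state`, `add_mps`, `compress`, `train`, `to_dense`) are hypothetical, the one-hot encoding of binary samples is an assumed feature map, and the samples are added one at a time rather than in parallel. Each sample becomes a bond-dimension-1 matrix-product state (MPS), samples are summed by a direct sum over the bond indices, and the result is truncated by a QR sweep followed by an SVD sweep.

```python
import numpy as np

def product_state(bits):
    # Assumed encoding: each binary value becomes a one-hot local vector,
    # stored as an MPS tensor of shape (left_bond=1, physical=2, right_bond=1).
    return [np.eye(2)[b].reshape(1, 2, 1) for b in bits]

def add_mps(a, b):
    # Sum of two MPS: direct sum over the bond indices (block-diagonal tensors).
    n = len(a)
    out = []
    for i, (x, y) in enumerate(zip(a, b)):
        if n == 1:
            out.append(x + y)
        elif i == 0:
            out.append(np.concatenate([x, y], axis=2))
        elif i == n - 1:
            out.append(np.concatenate([x, y], axis=0))
        else:
            dl1, d, dr1 = x.shape
            dl2, _, dr2 = y.shape
            t = np.zeros((dl1 + dl2, d, dr1 + dr2))
            t[:dl1, :, :dr1] = x
            t[dl1:, :, dr1:] = y
            out.append(t)
    return out

def compress(mps, max_bond):
    # Left-to-right QR sweep (canonicalization), then right-to-left SVD sweep
    # that keeps at most max_bond singular values on every bond.
    mps = [t.copy() for t in mps]
    n = len(mps)
    for i in range(n - 1):
        dl, d, dr = mps[i].shape
        q, r = np.linalg.qr(mps[i].reshape(dl * d, dr))
        mps[i] = q.reshape(dl, d, -1)
        mps[i + 1] = np.tensordot(r, mps[i + 1], axes=(1, 0))
    for i in range(n - 1, 0, -1):
        dl, d, dr = mps[i].shape
        u, s, vh = np.linalg.svd(mps[i].reshape(dl, d * dr), full_matrices=False)
        k = min(max_bond, len(s))
        mps[i] = vh[:k].reshape(k, d, dr)
        mps[i - 1] = np.tensordot(mps[i - 1], u[:, :k] * s[:k], axes=(2, 0))
    return mps

def train(samples, max_bond):
    # Single pass over the data: add one product state, then compress.
    state = product_state(samples[0])
    for bits in samples[1:]:
        state = compress(add_mps(state, product_state(bits)), max_bond)
    return state

def to_dense(mps):
    # Contract the MPS into a full state vector (only feasible for tiny systems).
    psi = mps[0]
    for t in mps[1:]:
        psi = np.tensordot(psi, t, axes=(psi.ndim - 1, 0))
    return psi.reshape(-1)

# Toy data: three 4-site binary samples (illustrative values only).
samples = [(0, 0, 1, 1), (1, 1, 0, 0), (0, 1, 0, 1)]
model = train(samples, max_bond=2)
print([t.shape for t in model])
```

Because the sum is linear, one could in principle compress disjoint chunks of the dataset separately and combine the partial results with `add_mps` followed by one more `compress`, which is the sense in which such a scheme parallelizes. The sketch leaves the state unnormalized.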

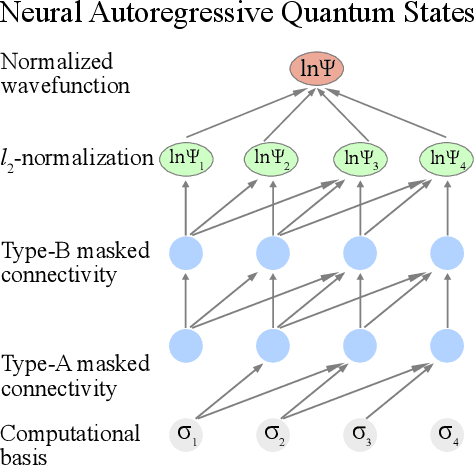

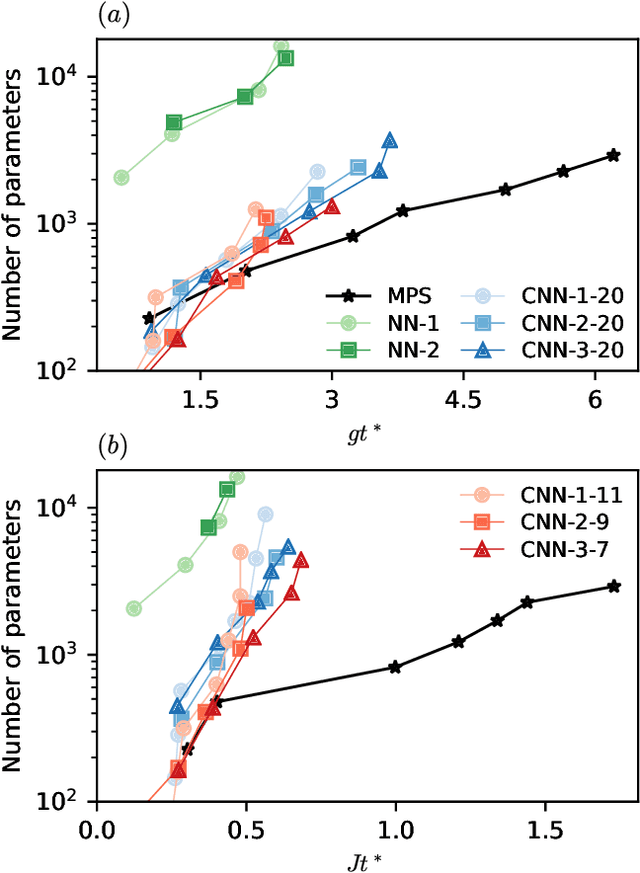

Scaling of neural-network quantum states for time evolution

Apr 21, 2021

Abstract: Simulating quantum many-body dynamics on classical computers is a challenging problem due to the exponential growth of the Hilbert space. Artificial neural networks have recently been introduced as a new tool to approximate quantum many-body states. We benchmark the variational power of different shallow and deep neural autoregressive quantum states to simulate global quench dynamics of a non-integrable quantum Ising chain. We find that the number of parameters required to represent the quantum state at a given accuracy increases exponentially in time. The growth rate is only slightly affected by the network architecture over a wide range of different design choices: shallow and deep networks, small and large filter sizes, dilated and normal convolutions, with and without shortcut connections.
