Sang Jun Lee

Phase-shifted remote photoplethysmography for estimating heart rate and blood pressure from facial video

Jan 09, 2024

Abstract: Human health can be critically affected by cardiovascular diseases such as hypertension, arrhythmias, and stroke. Heart rate and blood pressure are important biometric information for monitoring the cardiovascular system and for the early diagnosis of cardiovascular diseases. Existing methods for estimating the heart rate are based on electrocardiography and photoplethysmography, which require a sensor in contact with the skin surface. Moreover, catheter- and cuff-based methods for measuring blood pressure cause inconvenience and have limited applicability. Therefore, in this thesis, we propose a vision-based method for estimating the heart rate and blood pressure. The method is a 2-stage deep learning framework consisting of a dual remote photoplethysmography network (DRP-Net) and a bounded blood pressure network (BBP-Net). In the first stage, DRP-Net infers remote photoplethysmography (rPPG) signals for the acral and facial regions, and these phase-shifted rPPG signals are used to estimate the heart rate. In the second stage, BBP-Net integrates temporal features and analyzes the phase discrepancy between the acral and facial rPPG signals to estimate the systolic blood pressure (SBP) and diastolic blood pressure (DBP). To improve the accuracy of heart rate estimation, we employed a data augmentation method based on a frame interpolation model. Moreover, we designed BBP-Net to infer blood pressure within a predefined range by incorporating a scaled sigmoid function. On the MMSE-HR dataset, our method estimated the heart rate with a mean absolute error (MAE) of 1.78 BPM, reducing the MAE by 34.31% compared to a recent method. The MAEs for SBP and DBP were 10.19 mmHg and 7.09 mmHg, respectively. On the V4V dataset, the MAEs for the heart rate, SBP, and DBP were 3.83 BPM, 13.64 mmHg, and 9.4 mmHg, respectively.
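The abstract mentions that BBP-Net keeps its predictions inside a predefined range by means of a scaled sigmoid. The following is a minimal sketch of that general idea, not the authors' implementation: the function names and the bounds `SBP_RANGE` and `DBP_RANGE` are hypothetical values chosen only for illustration, and the abstract does not state the ranges actually used.

```python
import math


def scaled_sigmoid(x, low, high):
    """Squash an unbounded value x into the range between low and high."""
    # Numerically stable logistic function: avoids overflow for large |x|.
    if x >= 0:
        s = 1.0 / (1.0 + math.exp(-x))
    else:
        e = math.exp(x)
        s = e / (1.0 + e)
    # Rescale the (0, 1) sigmoid output to the target interval.
    return low + (high - low) * s


# Hypothetical bounds in mmHg, assumed for this example only.
SBP_RANGE = (80.0, 200.0)
DBP_RANGE = (40.0, 120.0)


def bound_blood_pressure(raw_sbp, raw_dbp):
    """Map two unbounded network outputs to bounded SBP and DBP estimates."""
    sbp = scaled_sigmoid(raw_sbp, *SBP_RANGE)
    dbp = scaled_sigmoid(raw_dbp, *DBP_RANGE)
    return sbp, dbp
```

With this kind of output layer, a raw value of zero maps to the midpoint of the range, and arbitrarily large or small raw values can never produce a physiologically implausible estimate outside the chosen bounds.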

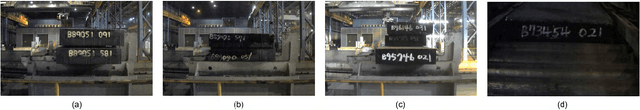

Selective Distillation of Weakly Annotated GTD for Vision-based Slab Identification System

Oct 09, 2018

Abstract: This paper proposes an algorithm for recognizing slab identification numbers in factory scenes. In the development of a deep-learning-based system, manual labeling to prepare ground truth data (GTD) is an important but expensive task. Furthermore, the quality of GTD is closely related to the performance of a supervised learning algorithm. To reduce manual work in the labeling process, we generated weakly annotated GTD by marking only character centroids. Whereas conventional GTD for scene text recognition, namely bounding boxes, require at least a drag-and-drop operation or two clicks to annotate a character location, the weakly annotated GTD require a single click. The main contribution of this paper is selective distillation, which improves the quality of the weakly annotated GTD. Because manual GTD are usually generated by many people, they may contain personal bias or human error. To address this problem, the information in manual GTD is integrated and refined by selective distillation. In this process, a fully convolutional network (FCN) is trained using the weakly annotated GTD, and its prediction maps are selectively used to revise the locations and boundaries of the semantic regions of characters in the initial GTD. The modified GTD are used in the main training stage, and post-processing is conducted to retrieve text information. Experiments were conducted on actual industry data collected at a steelworks to demonstrate the effectiveness of the proposed method.
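To make the single-click annotation concrete, the sketch below turns a list of clicked character centroids into an initial label map that a segmentation network could be trained on. It is an illustration under stated assumptions, not the paper's procedure: the function name `centroids_to_mask`, the fixed disk `radius`, and the `(x, y, label)` tuple layout are all hypothetical, and the abstract does not say how the initial semantic regions are drawn around each centroid.

```python
def centroids_to_mask(height, width, centroids, radius=2):
    """Rasterize single-click centroid annotations into a label map.

    centroids: iterable of (x, y, label) tuples, where label is a positive
    integer class id for the character and 0 is reserved for background.
    radius: assumed fixed disk radius in pixels (hypothetical choice).
    """
    mask = [[0] * width for _ in range(height)]
    for cx, cy, label in centroids:
        # Visit only the bounding square of the disk, clipped to the image.
        for y in range(max(0, cy - radius), min(height, cy + radius + 1)):
            for x in range(max(0, cx - radius), min(width, cx + radius + 1)):
                # Keep pixels whose distance to the click is within the radius.
                if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2:
                    mask[y][x] = label
    return mask
```

A coarse map like this is the kind of initial GTD whose region locations and boundaries a refinement step, such as the selective distillation described above, would then revise.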
