Samir Otmane

IBISC

Real-Time Indoor Object Detection based on hybrid CNN-Transformer Approach

Sep 03, 2024

Abstract: Real-time object detection in indoor settings is a challenging area of computer vision, facing obstacles such as variable lighting and complex backgrounds. The field has significant potential to improve applications like augmented and mixed reality by enabling more seamless interactions between digital content and the physical world. However, little research is tailored specifically to the intricacies of indoor environments, which leaves a clear gap in the literature. To address this, our study evaluates existing datasets and computational models and builds a refined dataset. This new dataset is derived from OpenImages v7 and focuses exclusively on 32 indoor categories selected for their relevance to real-world applications. Alongside this, we present an adaptation of a CNN detection model that incorporates an attention mechanism to enhance the model's ability to discern and prioritize critical features within cluttered indoor scenes. Our findings demonstrate that this approach is not only competitive with existing state-of-the-art models in accuracy and speed but also opens new avenues for research and application in real-time indoor object detection.

Vision-based Pose Estimation for Augmented Reality : A Comparison Study

Jun 25, 2018

Abstract: Augmented reality aims to enrich our real world by inserting 3D virtual objects. To accomplish this goal, virtual elements must be rendered and aligned in the real scene in an accurate and visually acceptable way. Solving this problem comes down to pose estimation and 3D camera localization. This paper presents a survey of different approaches to 3D pose estimation in augmented reality and gives a classification of key-point-based techniques. The study may help both developers and researchers in the field of augmented reality.

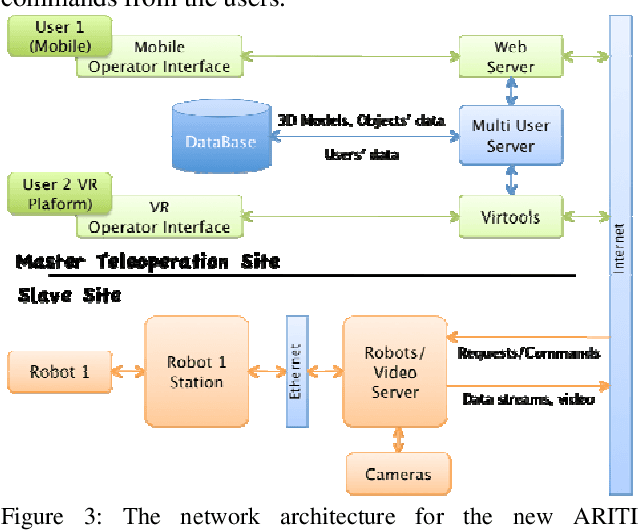

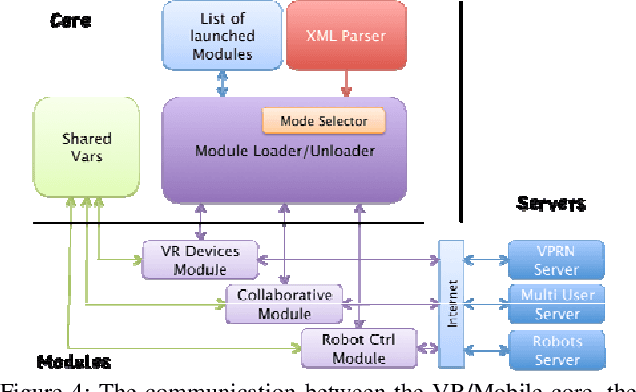

A Distributed Software Architecture for Collaborative Teleoperation based on a VR Platform and Web Application Interoperability

Apr 14, 2009

Abstract: Augmented Reality and Virtual Reality can give a Human Operator (HO) real help in completing complex tasks, such as robot teleoperation and cooperative teleassistance. Using appropriate augmentations, the HO can interact more quickly, safely, and easily with the remote real world. In this paper, we present an extension of an existing distributed software and network architecture for collaborative teleoperation based on networked human-scaled mixed reality and mobile platforms. The first teleoperation system was composed of a VR application and a Web application. However, the two systems cannot be used together, and it is impossible to control a distant robot from both simultaneously. Our goal is to update the teleoperation system to permit heterogeneous collaborative teleoperation between the two platforms. An important feature of this interface is that different mobile platforms can be used to control one or more robots.
