Saad Alqithami

Endogenous Information in Routing Games: Memory-Constrained Equilibria, Recall Braess Paradoxes, and Memory Design

Apr 13, 2026

Abstract: We study routing games in which travelers optimize over routes that are remembered or surfaced, rather than over a fixed exogenous action set. The paper develops a tractable design theory for endogenous recall and then connects it back to an explicit finite-memory micro model. At the micro level, each traveler carries a finite memory state, receives surfaced alternatives, chooses via a logit rule, and updates memory under a policy such as LRU. This yields a stationary Forgetful Wardrop Equilibrium (FWE); existence is proved under mild regularity, and uniqueness follows in a contraction regime for the reduced fixed-point map. The paper's main design layer is a stationary salience model that summarizes persistent memory and interface effects as route-specific weights. Salience-weighted stochastic user equilibrium is the unique minimizer of a strictly convex potential, which yields a clean optimization and implementability theory. In this layer we characterize governed implementability under ratio budgets and affine tying constraints, and derive constructive algorithms on parallel and series-parallel networks. The bridge between layers is exact for last-choice memory (B=1): the micro model is then equivalent to the salience model, so any interior salience vector can be realized by an appropriate surfacing policy. For larger memories, we develop an explicit LRU-to-TTL-to-salience approximation pipeline and add contraction-based bounds that translate surrogate-map error into fixed-point and welfare error. Finally, we define a Recall Braess Paradox, in which improving recall increases equilibrium delay without changing physical capacity, and show that it can arise on every two-terminal network with at least two distinct s-t paths. Targeted experiments support the approximation regime, governed-design predictions, and the computational advantages of the reduced layer.
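As a rough illustration of the salience layer mentioned in this abstract, the sketch below computes salience-weighted logit route shares for fixed route costs. The functional form (shares proportional to `salience[r] * exp(-theta * costs[r])`), the parameter `theta`, and the function name are assumptions made for illustration; the abstract does not state the paper's exact definitions.

```python
import math

def salience_logit_shares(costs, salience, theta=1.0):
    """Route shares under an ASSUMED salience-weighted logit rule.

    Share of route r is proportional to salience[r] * exp(-theta * costs[r]).
    This is an illustrative form, not taken from the paper.
    """
    if len(costs) != len(salience):
        raise ValueError("costs and salience must have the same length")
    # Subtract the minimum cost so the exponentials stay numerically stable.
    c_min = min(costs)
    weights = [w * math.exp(-theta * (c - c_min)) for c, w in zip(costs, salience)]
    total = sum(weights)
    return [x / total for x in weights]
```

With equal costs the shares reduce to normalized salience weights, so under this assumed form raising a route's salience shifts traffic toward it even when its physical cost is unchanged.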

Soft Tournament Equilibrium

Apr 07, 2026

Abstract: The evaluation of general-purpose artificial agents, particularly those based on large language models, presents a significant challenge due to the non-transitive nature of their interactions. When agent A defeats B, B defeats C, and C defeats A, traditional ranking methods that force a linear ordering can be misleading and unstable. We argue that for such cyclic domains, the fundamental object of evaluation should not be a ranking but a set-valued core, as conceptualized in classical tournament theory. This paper introduces Soft Tournament Equilibrium (STE), a differentiable framework for learning and computing set-valued tournament solutions directly from pairwise comparison data. STE first learns a probabilistic tournament model, potentially conditioned on rich contextual information. It then employs novel, differentiable operators for soft reachability and soft covering to compute continuous analogues of two seminal tournament solutions: the Top Cycle and the Uncovered Set. The output is a set of core agents, each with a calibrated membership score, providing a nuanced and robust assessment of agent capabilities. We develop the theoretical foundation for STE, proving its consistency with classical solutions in the zero-temperature limit, which establishes its Condorcet-inclusion properties, and analyzing its stability and sample complexity. We specify an experimental protocol for validating STE on both synthetic and real-world benchmarks. This work aims to provide a complete, standalone treatise that re-centers general-agent evaluation on a more appropriate and robust theoretical foundation, moving from unstable rankings to stable, set-valued equilibria.

DC-Ada: Reward-Only Decentralized Observation-Interface Adaptation for Heterogeneous Multi-Robot Teams

Apr 05, 2026

Abstract: Heterogeneity is a defining feature of deployed multi-robot teams: platforms often differ in sensing modalities, ranges, fields of view, and failure patterns. Controllers trained under nominal sensing can degrade sharply when deployed on robots with missing or mismatched sensors, even when the task and action interface are unchanged. We present DC-Ada, a reward-only decentralized adaptation method that keeps a pretrained shared policy frozen and instead adapts compact per-robot observation transforms to map heterogeneous sensing into a fixed inference interface. DC-Ada is gradient-free and communication-minimal: it uses budgeted accept/reject random search with short common-random-number rollouts under a strict step budget. We evaluate DC-Ada against four baselines in a deterministic 2D multi-robot simulator covering warehouse logistics, search and rescue, and collaborative mapping, across four heterogeneity regimes (H0-H3) and five seeds with a matched budget of 200,000 joint environment steps per run. Results show that heterogeneity can substantially degrade a frozen shared policy and that no single mitigation dominates across all tasks and metrics. Observation normalization is strongest for reward robustness in warehouse logistics and competitive in search and rescue, while the frozen shared policy is strongest for reward in collaborative mapping. DC-Ada offers a useful complementary operating point: it improves completion most clearly in severe coverage-based mapping while requiring only scalar team returns and no policy fine-tuning or persistent communication. These results position DC-Ada as a practical deploy-time adaptation method for heterogeneous teams.
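The abstract's "budgeted accept/reject random search with short common-random-number rollouts" can be sketched generically as below. This is not DC-Ada itself: the function name, the Gaussian perturbation, the `sigma` parameter, and the `evaluate(params, seed, steps)` callback signature are all hypothetical choices made for illustration.

```python
import random

def accept_reject_search(evaluate, params, step_budget, rollout_steps, sigma=0.1, seed=0):
    """Generic accept/reject random search under a strict step budget.

    evaluate(params, seed, steps) -> scalar return (hypothetical callback).
    Incumbent and candidate are scored with the SAME rollout seed
    (common random numbers), so shared noise cancels in the comparison.
    """
    rng = random.Random(seed)
    best = list(params)
    used = 0
    # Each iteration spends two rollouts: one for the incumbent, one for the candidate.
    while used + 2 * rollout_steps <= step_budget:
        crn_seed = rng.randrange(2**31)
        candidate = [p + rng.gauss(0.0, sigma) for p in best]
        base_return = evaluate(best, crn_seed, rollout_steps)
        cand_return = evaluate(candidate, crn_seed, rollout_steps)
        used += 2 * rollout_steps
        # Accept only if the candidate strictly improves the scalar return.
        if cand_return > base_return:
            best = candidate
    return best, used
```

Only a scalar return is needed per rollout, which matches the reward-only setting the abstract describes; how the actual method allocates its budget across robots is not specified here.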

Latency-Aware Resource Allocation over Heterogeneous Networks: A Lorentz-Invariant Market Mechanism

Apr 04, 2026

Abstract: We present a telecom-native auction mechanism for allocating bandwidth and time slots across heterogeneous-delay networks, ranging from low-Earth-orbit (LEO) satellite constellations to delay-tolerant deep-space relays. The Lorentz-Invariant Auction (LIA) treats bids as spacetime events and reweights reported values based on the horizon slack, a causal quantity derived from the earliest-arrival times relative to a public clearing horizon. Unlike other delay-equalization rules, LIA combines a causal-ordering formulation, a uniquely exponential slack correction implied by a semigroup-style invariance axiom, and a critical-value implementation that ensures truthful reported values once slacks are fixed by trusted infrastructure. We analyze the incentive result in the exogenous-slack regime and separately examine bounded slack-estimation error and endogenous-delay limitations. Under fixed feasible slacks, LIA is individually rational and achieves welfare at least \(e^{-λΔ}\) relative to the optimal feasible allocation, where \(Δ\) is the slack spread. We evaluate LIA on STARLINK-200, INTERNET-100, and DSN-30 across 52,500 baseline instances with market sizes \(n\in\{10,20,30,40,50\}\) and conduct additional robustness sweeps. On Starlink and Internet, LIA maintains near-efficiency while eliminating measured timing rents. However, on DSN, welfare is lower in thin markets but improves with depth. We also distinguish winner-determination time from the background cost of maintaining slack estimates and study robustness beyond independent and identically distributed (iid) noise through error-spread bounds and structured (distance-biased and subnetwork-correlated) noise models. These results suggest that causal-consistent mechanism design offers a practical non-buffering alternative to synchronized delay equalization in heterogeneous telecom infrastructures.
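To make the welfare guarantee \(e^{-λΔ}\) concrete, the short sketch below evaluates it for a given set of slacks. It assumes that the slack spread \(Δ\) is the difference between the largest and smallest slack, which the abstract does not define explicitly; the function name is also hypothetical.

```python
import math

def welfare_guarantee(slacks, lam):
    """Lower bound exp(-lam * spread) on welfare relative to the optimum.

    ASSUMPTION: the slack spread is max(slacks) - min(slacks).
    """
    spread = max(slacks) - min(slacks)
    return math.exp(-lam * spread)
```

Under this reading, identical slacks give a spread of zero and a guarantee of 1, and the bound decays exponentially as the slacks become more dispersed.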

EcoFair-CH-MARL: Scalable Constrained Hierarchical Multi-Agent RL with Real-Time Emission Budgets and Fairness Guarantees

Mar 15, 2026

Abstract: Global decarbonisation targets and tightening market pressures demand maritime logistics solutions that are simultaneously efficient, sustainable, and equitable. We introduce EcoFair-CH-MARL, a constrained hierarchical multi-agent reinforcement learning framework that unifies three innovations: (i) a primal-dual budget layer that provably bounds cumulative emissions under stochastic weather and demand; (ii) a fairness-aware reward transformer with dynamically scheduled penalties that enforces max-min cost equity across heterogeneous fleets; and (iii) a two-tier policy architecture that decouples strategic routing from real-time vessel control, enabling linear scaling in agent count. New theoretical results establish \(O(\sqrt{T})\) regret for both constraint violations and fairness loss. Experiments on a high-fidelity maritime digital twin (16 ports, 50 vessels) driven by automatic identification system traces, plus an energy-grid case study, show up to 15% lower emissions, 12% higher throughput, and a 45% fair-cost improvement over state-of-the-art hierarchical and constrained MARL baselines. In addition, EcoFair-CH-MARL achieves stronger equity (lower Gini and higher min-max welfare) than fairness-specific MARL baselines (e.g., SOTO, FEN), and its modular design is compatible with both policy- and value-based learners. EcoFair-CH-MARL therefore advances the feasibility of large-scale, regulation-compliant, and socially responsible multi-agent coordination in safety-critical domains.

* Conference: The 28th European Conference on Artificial Intelligence (ECAI)

The Observer-Situation Lattice: A Unified Formal Basis for Perspective-Aware Cognition

Mar 02, 2026

Abstract: Autonomous agents operating in complex, multi-agent environments must reason about what is true from multiple perspectives. Existing approaches often struggle to integrate the reasoning of different agents, at different times, and in different contexts, typically handling these dimensions in separate, specialized modules. This fragmentation leads to a brittle and incomplete reasoning process, particularly when agents must understand the beliefs of others (Theory of Mind). We introduce the Observer-Situation Lattice (OSL), a unified mathematical structure that provides a single, coherent semantic space for perspective-aware cognition. OSL is a finite complete lattice where each element represents a unique observer-situation pair, allowing for a principled and scalable approach to belief management. We present two key algorithms that operate on this lattice: (i) Relativized Belief Propagation, an incremental update algorithm that efficiently propagates new information, and (ii) Minimal Contradiction Decomposition, a graph-based procedure that identifies and isolates contradiction components. We prove the theoretical soundness of our framework and demonstrate its practical utility through a series of benchmarks, including classic Theory of Mind tasks and a comparison with established paradigms such as assumption-based truth maintenance systems. Our results show that OSL provides a computationally efficient and expressive foundation for building robust, perspective-aware autonomous agents.

* Extended version of the AAMAS 2026 paper with the same title

Autonomous Agents on Blockchains: Standards, Execution Models, and Trust Boundaries

Jan 08, 2026

Abstract: Advances in large language models have enabled agentic AI systems that can reason, plan, and interact with external tools to execute multi-step workflows, while public blockchains have evolved into a programmable substrate for value transfer, access control, and verifiable state transitions. Their convergence introduces a high-stakes systems challenge: designing standard, interoperable, and secure interfaces that allow agents to observe on-chain state, formulate transaction intents, and authorize execution without exposing users, protocols, or organizations to unacceptable security, governance, or economic risks. This survey systematizes the emerging landscape of agent-blockchain interoperability through a systematic literature review, identifying 317 relevant works from an initial pool of over 3000 records. We contribute a five-part taxonomy of integration patterns spanning read-only analytics, simulation and intent generation, delegated execution, autonomous signing, and multi-agent workflows; a threat model tailored to agent-driven transaction pipelines that captures risks ranging from prompt injection and policy misuse to key compromise, adversarial execution dynamics, and multi-agent collusion; and a comparative capability matrix analyzing more than 20 representative systems across 13 dimensions, including custody models, permissioning, policy enforcement, observability, and recovery. Building on the gaps revealed by this analysis, we outline a research roadmap centered on two interface abstractions: a Transaction Intent Schema for portable and unambiguous goal specification, and a Policy Decision Record for auditable, verifiable policy enforcement across execution environments. We conclude by proposing a reproducible evaluation suite and benchmarks for assessing the safety, reliability, and economic robustness of agent-mediated on-chain execution.

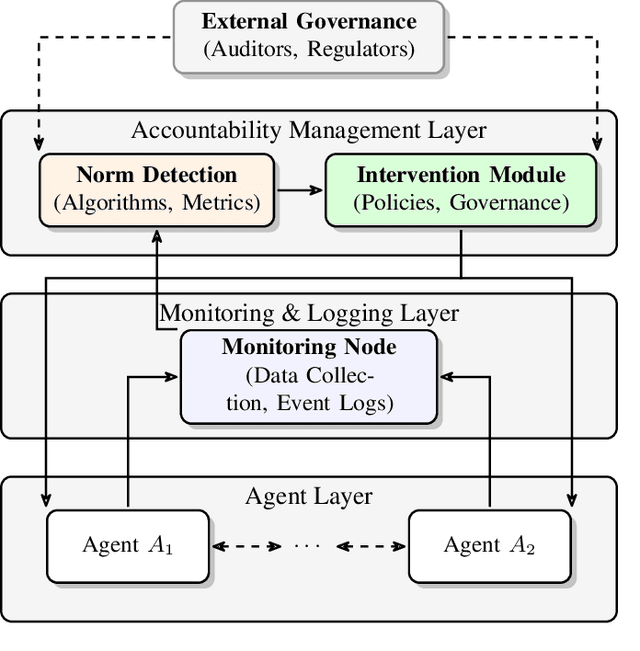

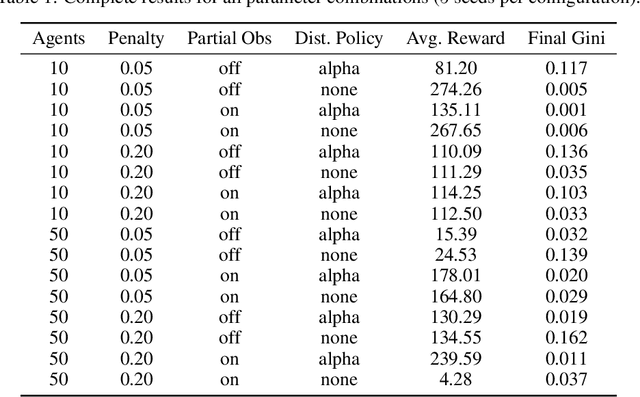

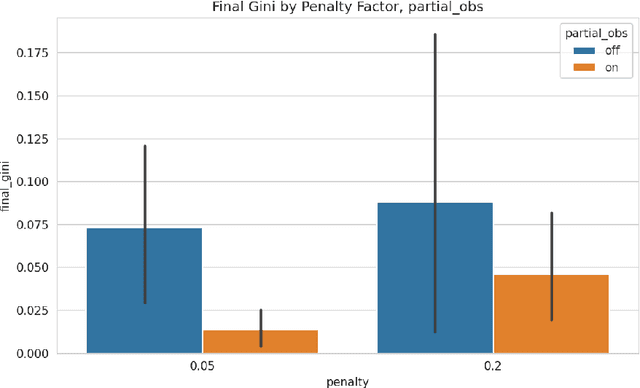

Adaptive Accountability in Networked MAS: Tracing and Mitigating Emergent Norms at Scale

Dec 21, 2025

Abstract: Large-scale networked multi-agent systems increasingly underpin critical infrastructure, yet their collective behavior can drift toward undesirable emergent norms that elude conventional governance mechanisms. We introduce an adaptive accountability framework that (i) continuously traces responsibility flows through a lifecycle-aware audit ledger, (ii) detects harmful emergent norms online via decentralized sequential hypothesis tests, and (iii) deploys local policy and reward-shaping interventions that realign agents with system-level objectives in near real time. We prove a bounded-compromise theorem showing that whenever the expected intervention cost exceeds an adversary's payoff, the long-run proportion of compromised interactions is bounded by a constant strictly less than one. Extensive high-performance simulations with up to 100 heterogeneous agents, partial observability, and stochastic communication graphs show that our framework prevents collusion and resource hoarding in at least 90% of configurations, boosts average collective reward by 12-18%, and lowers the Gini inequality index by up to 33% relative to a PPO baseline. These results demonstrate that a theoretically principled accountability layer can induce ethically aligned, self-regulating behavior in complex MAS without sacrificing performance or scalability.
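For readers unfamiliar with the Gini inequality index reported in this abstract, the sketch below computes it with the standard mean-absolute-difference formula. How the paper applies the index (for example, to which per-agent quantity) is not stated in the abstract, so the per-agent rewards in the usage example are purely illustrative.

```python
def gini(values):
    """Gini index via the mean absolute difference formula.

    G = sum_i sum_j |x_i - x_j| / (2 * n * sum_i x_i), for non-negative values.
    Returns 0.0 for an empty list or an all-zero list.
    """
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        return 0.0
    abs_diffs = sum(abs(a - b) for a in values for b in values)
    return abs_diffs / (2 * n * total)

# Illustrative per-agent rewards (hypothetical numbers, not from the paper).
equal_rewards = [1.0, 1.0, 1.0]
hoarded_rewards = [0.0, 0.0, 0.0, 4.0]
```

Here `gini(equal_rewards)` is 0 (perfect equality) and `gini(hoarded_rewards)` is 0.75, so a lower index corresponds to less resource hoarding.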

Forgetful but Faithful: A Cognitive Memory Architecture and Benchmark for Privacy-Aware Generative Agents

Dec 14, 2025

Abstract: As generative agents become increasingly sophisticated and deployed in long-term interactive scenarios, their memory management capabilities emerge as a critical bottleneck for both performance and privacy. Current approaches either maintain unlimited memory stores, leading to computational intractability and privacy concerns, or employ simplistic forgetting mechanisms that compromise agent coherence and functionality. This paper introduces the Memory-Aware Retention Schema (MaRS), a novel framework for human-centered memory management in generative agents, coupled with six theoretically grounded forgetting policies that balance performance, privacy, and computational efficiency. We present the Forgetful but Faithful Agent (FiFA) benchmark, a comprehensive evaluation framework that assesses agent performance across narrative coherence, goal completion, social recall accuracy, privacy preservation, and cost efficiency. Through extensive experimentation involving 300 evaluation runs across multiple memory budgets and agent configurations, we demonstrate that our hybrid forgetting policy achieves superior performance (composite score: 0.911) while maintaining computational tractability and privacy guarantees. Our work establishes new benchmarks for memory-budgeted agent evaluation and provides practical guidelines for deploying generative agents in resource-constrained, privacy-sensitive environments. The theoretical foundations, implementation framework, and empirical results contribute to the emerging field of human-centered AI by addressing fundamental challenges in agent memory management that directly impact user trust, system scalability, and regulatory compliance.

Dynamic Homophily with Imperfect Recall: Modeling Resilience in Adversarial Networks

Dec 13, 2025

Abstract: The purpose of this study is to investigate how homophily, memory constraints, and adversarial disruptions collectively shape the resilience and adaptability of complex networks. To achieve this, we develop a new framework that integrates explicit memory decay mechanisms into homophily-based models and systematically evaluate their performance across diverse graph structures and adversarial settings. Our methods involve extensive experimentation on synthetic datasets, where we vary decay functions, reconnection probabilities, and similarity measures, primarily comparing cosine similarity with traditional metrics such as Jaccard similarity and baseline edge weights. The results show that cosine similarity achieves up to a 30% improvement in stability metrics in sparse, convex, and modular networks. Moreover, the refined value-of-recall metric demonstrates that strategic forgetting can bolster resilience by balancing network robustness and adaptability. The findings underscore the critical importance of aligning memory and similarity parameters with the structural and adversarial dynamics of the network. By quantifying the tangible benefits of incorporating memory constraints into homophily-based analyses, this study offers actionable insights for optimizing real-world applications, including social systems, collaborative platforms, and cybersecurity contexts.
