SP Arun

Brain-like emergent properties in deep networks: impact of network architecture, datasets and training

Nov 25, 2024

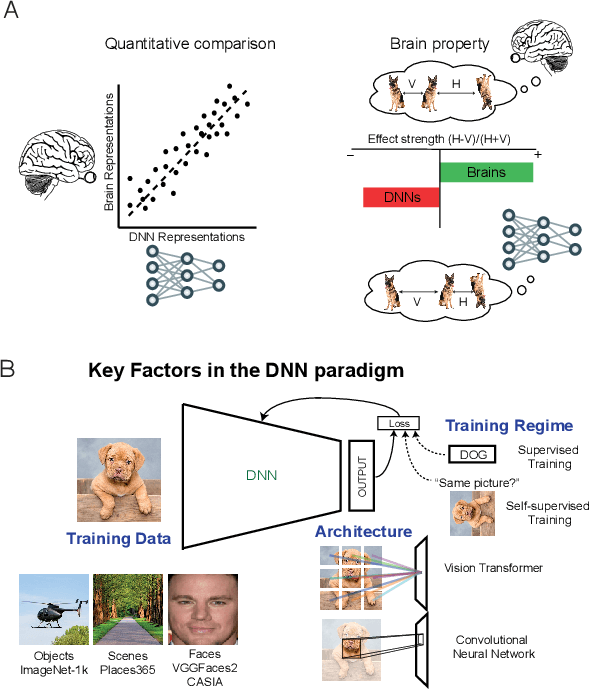

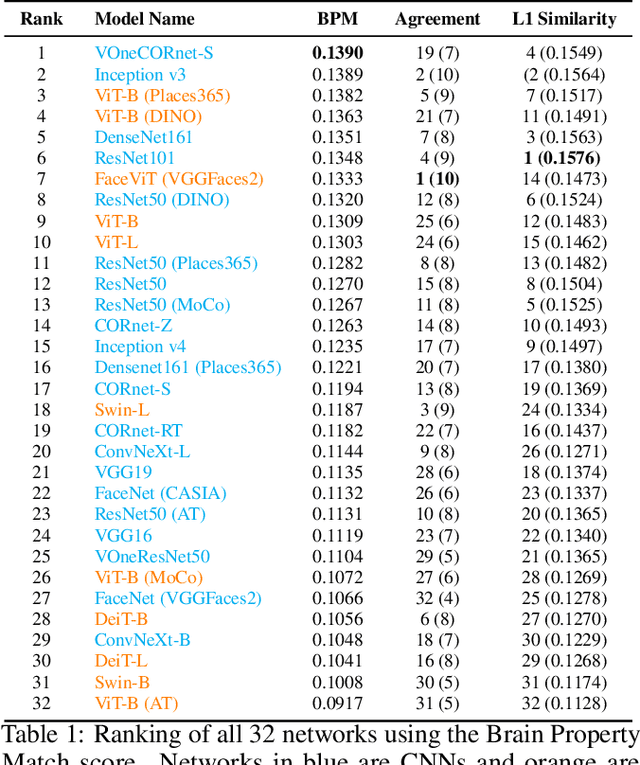

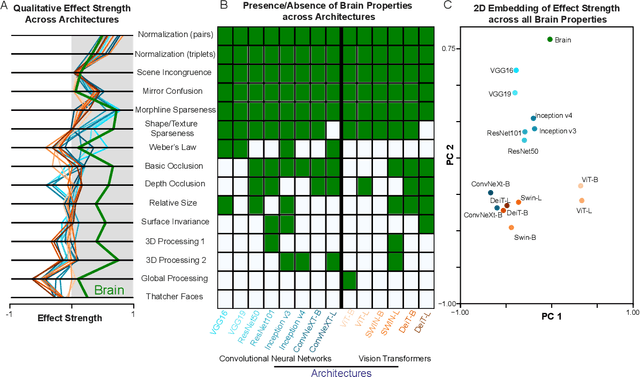

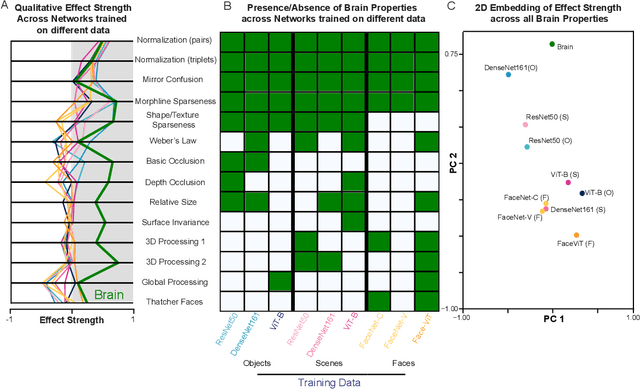

Abstract:Despite the rapid pace at which deep networks are improving on standardized vision benchmarks, they are still outperformed by humans on real-world vision tasks. This paradoxical lack of generalization could be addressed by making deep networks more brain-like. Although several benchmarks have compared the ability of deep networks to predict brain responses to natural images, they do not capture subtle but important brain-like emergent properties. To resolve this issue, we report several well-known perceptual and neural emergent properties that can be tested on deep networks. To evaluate how various design factors impact brain-like properties, we systematically evaluated over 30 state-of-the-art networks with varying network architectures, training datasets and training regimes. Our main findings are as follows. First, network architecture had the strongest impact on brain-like properties compared to dataset and training regime variations. Second, networks varied widely in their alignment to the brain with no single network outperforming all others. Taken together, our results complement existing benchmarks by revealing brain-like properties that are either emergent or lacking in state-of-the-art deep networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge