Renith G

Survival Analysis on Structured Data using Deep Reinforcement Learning

May 28, 2022

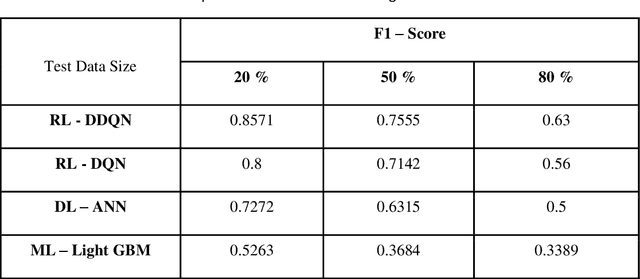

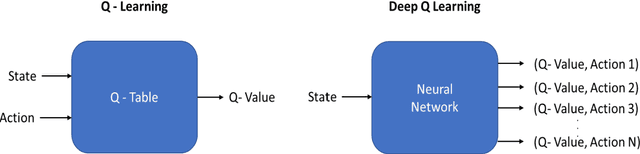

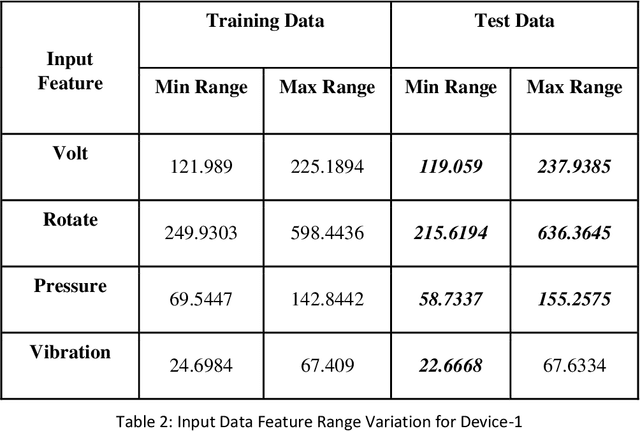

Abstract: Survival analysis plays a major role in the manufacturing sector by analyzing the occurrence of unwanted events based on input data. Predictive maintenance, a part of survival analysis, helps detect device failure from the current incoming data of sensors or other equipment. Deep learning techniques have been used to automate predictive maintenance to some extent, but they are not very helpful in predicting device failure for input data that the algorithm has not learned. Because a neural network predicts its output from previously learned input features, it cannot perform well when the input features vary widely. Model performance degrades as the input data changes, and the algorithm eventually fails to predict device failure. Our proposed method addresses this problem: the algorithm can predict device failure more precisely than existing deep learning algorithms. The proposed solution implements a Deep Reinforcement Learning algorithm, the Double Deep Q Network (DDQN), to classify device failure based on the input features. The algorithm is capable of learning different variations of the input features and is robust in predicting whether the device will fail. The proposed DDQN model is trained with a limited amount of input data. The trained model predicted a larger amount of test data efficiently and performed well compared to other deep learning and machine learning models.
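The abstract does not give the training details, so the following is only a minimal sketch of the standard Double DQN target applied to a classification-as-RL setup. It assumes each sample is a state, the action is the predicted class (fail or not fail), and the reward is +1 for a correct prediction and -1 otherwise. The function names, the reward values, and `gamma` are illustrative assumptions, not the authors' implementation.

```python
from typing import Sequence


def classification_reward(action: int, label: int) -> float:
    """Assumed reward scheme: +1 for a correct class prediction, -1 otherwise."""
    return 1.0 if action == label else -1.0


def argmax(values: Sequence[float]) -> int:
    """Index of the largest value (first one wins on ties)."""
    return max(range(len(values)), key=lambda i: values[i])


def ddqn_target(
    reward: float,
    q_online_next: Sequence[float],
    q_target_next: Sequence[float],
    gamma: float = 0.99,
    done: bool = False,
) -> float:
    """Double DQN target for one transition.

    The online network selects the next action; the target network
    evaluates it. This decoupling is what distinguishes Double DQN
    from plain DQN, which uses max(q_target_next) directly.
    """
    if done:
        return reward
    best_action = argmax(q_online_next)
    return reward + gamma * q_target_next[best_action]
```

In this sketch, `q_online_next` and `q_target_next` stand for the Q-values that the two networks would output for the next sample; in practice they would come from the trained models rather than being passed in by hand.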

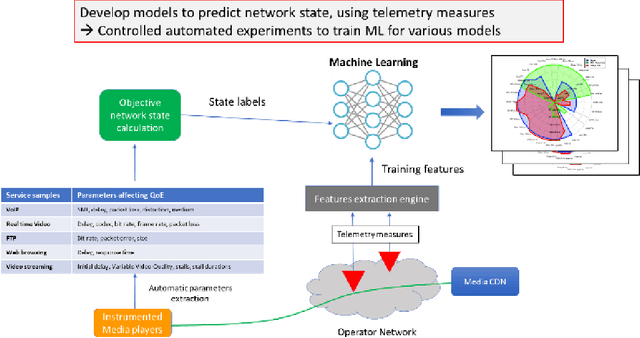

Network state Estimation using Raw Video Analysis: vQoS-GAN based non-intrusive Deep Learning Approach

Mar 22, 2022

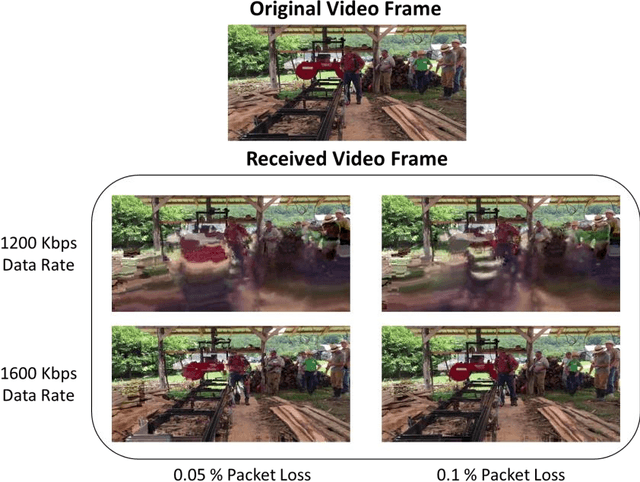

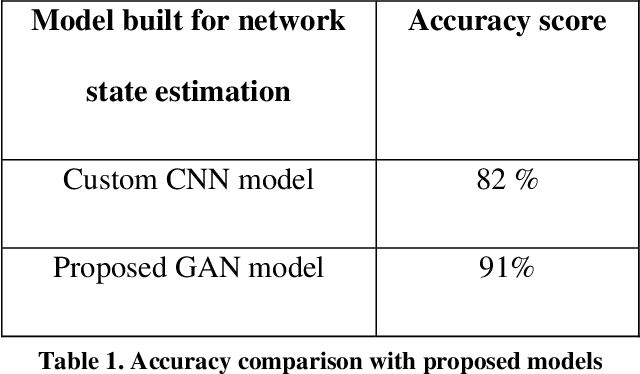

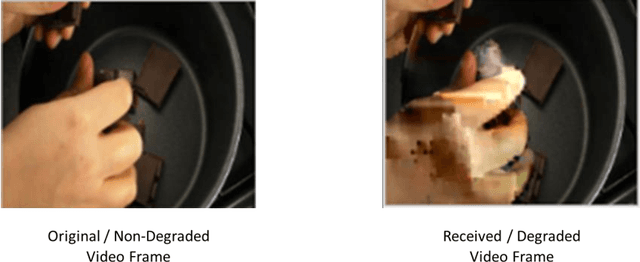

Abstract: Content-based providers transmit real-time complex signals such as video data from one region to another. During transmission, the signals often end up distorted or degraded, and the actual information present in the video is lost. This commonly happens in streaming video service applications. Hence there is a need to know the level of degradation on the receiver side. This video degradation can be estimated from network state parameters such as data rate and packet loss. Our proposed solution, vQoS GAN (video Quality of Service Generative Adversarial Network), estimates the network state parameters from the degraded received video data using a semi-supervised generative adversarial network, a deep learning approach. A robust and unique deep learning network model has been trained on the video data together with data rate and packet loss class labels and achieves over 95 percent training accuracy. The proposed semi-supervised generative adversarial network can additionally reconstruct the degraded video data to its original form for a better end-user experience.
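The abstract does not say which semi-supervised GAN formulation is used. One common variant gives the discriminator K+1 outputs: K real classes (here, hypothetically, the data rate and packet loss classes) plus one extra "fake" class. The sketch below, under that assumption, shows how such an output could be split into class probabilities and a real-versus-fake probability; the function names and the position of the fake logit are illustrative choices.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def split_discriminator_output(logits: Sequence[float]) -> Tuple[List[float], float]:
    """Split K+1 discriminator logits into (class probabilities, p_real).

    Assumes the last logit is the 'fake' class. The class probabilities
    are conditioned on the input being real, so they sum to 1.
    """
    probs = softmax(logits)
    p_real = 1.0 - probs[-1]
    k = len(probs) - 1
    if p_real <= 0.0:
        # Degenerate case: all mass on 'fake'; fall back to a uniform guess.
        return [1.0 / k] * k, 0.0
    class_probs = [p / p_real for p in probs[:-1]]
    return class_probs, p_real
```

With this split, labeled videos would contribute a supervised classification loss on `class_probs`, while unlabeled and generated videos would contribute only through `p_real`.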
