Mirko Mazzoleni

A unified surrogate-based scheme for black-box and preference-based optimization

Feb 03, 2022

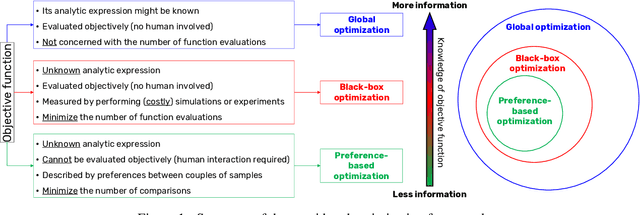

Abstract: Black-box and preference-based optimization algorithms are global optimization procedures that aim to find the global solutions of an optimization problem using, respectively, as few function evaluations or sample comparisons as possible. In the black-box case, the analytical expression of the objective function is unknown and it can only be evaluated through a (costly) computer simulation or an experiment. In the preference-based case, the objective function is still unknown, but it corresponds to the subjective criterion of an individual, so it cannot be quantified in a reliable and consistent way. Therefore, preference-based optimization algorithms seek global solutions using only comparisons between pairs of samples, for which a human decision-maker indicates which of the two is preferred. Quite often, the black-box and preference-based frameworks are covered separately and are handled using different techniques. In this paper, we show that black-box and preference-based optimization problems are closely related and can be solved using the same family of approaches, namely surrogate-based methods. Moreover, we propose the generalized Metric Response Surface (gMRS) algorithm, an optimization scheme that generalizes the popular MSRS framework. Finally, we provide a convergence proof for the proposed optimization method.
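To make the surrogate-based idea in the abstract concrete, here is a minimal Python sketch of a generic black-box loop in the spirit of MSRS-type schemes. It is not the gMRS algorithm from the paper. The function names (`fit_rbf`, `propose`, `minimize`), the inverse-quadratic kernel, the number of random candidates, and the cycling weights are all assumptions made for illustration. Each iteration fits a Radial Basis Function interpolant to the evaluated samples, then picks the random candidate that best trades off a low surrogate value (exploitation) against a large distance from previous samples (exploration).

```python
import numpy as np

def fit_rbf(X, y, eps=1.0):
    """Fit an inverse-quadratic RBF interpolant through (X, y) (assumed kernel)."""
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(-1)
    Phi = 1.0 / (1.0 + eps**2 * d2)
    # A tiny ridge term keeps the linear solve numerically stable.
    beta = np.linalg.solve(Phi + 1e-8 * np.eye(len(X)), y)

    def surrogate(Z):
        d2z = ((Z[:, None, :] - X[None, :, :]) ** 2).sum(-1)
        return (1.0 / (1.0 + eps**2 * d2z)) @ beta

    return surrogate

def scale01(v):
    """Rescale a vector to [0, 1]; a constant vector maps to all zeros."""
    span = v.max() - v.min()
    return np.zeros_like(v) if span == 0 else (v - v.min()) / span

def propose(X, y, lb, ub, weight, rng, n_candidates=500):
    """Pick the random candidate with the lowest weighted score."""
    surrogate = fit_rbf(X, y)
    cand = rng.uniform(lb, ub, size=(n_candidates, len(lb)))
    s = scale01(surrogate(cand))          # low = promising surrogate value
    dists = np.sqrt(((cand[:, None, :] - X[None, :, :]) ** 2).sum(-1))
    d = 1.0 - scale01(dists.min(axis=1))  # low = far from evaluated samples
    return cand[np.argmin(weight * s + (1.0 - weight) * d)]

def minimize(f, lb, ub, n_init=5, n_iter=30, seed=0):
    """Run the loop; returns (best sample, best value, number of evaluations)."""
    rng = np.random.default_rng(seed)
    lb, ub = np.asarray(lb, float), np.asarray(ub, float)
    X = rng.uniform(lb, ub, size=(n_init, len(lb)))
    y = np.array([f(x) for x in X])
    weights = [0.3, 0.5, 0.8, 0.95]  # illustrative cycle: explore -> exploit
    for k in range(n_iter):
        x_new = propose(X, y, lb, ub, weights[k % len(weights)], rng)
        X = np.vstack([X, x_new])
        y = np.append(y, f(x_new))
    best = np.argmin(y)
    return X[best], y[best], len(y)

if __name__ == "__main__":
    f = lambda x: float(((x - 0.3) ** 2).sum())  # cheap stand-in for a costly simulation
    x_best, y_best, n_evals = minimize(f, [-1, -1], [1, 1])
    print(x_best, y_best, n_evals)
```

In the preference-based setting the values `y` are not available, so the surrogate would have to be fitted from pairwise comparisons instead; the candidate-selection step could stay structurally the same, which is one way to read the abstract's claim that the two frameworks are closely related.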

GLISp-r: A preference-based optimization algorithm with convergence guarantees

Feb 02, 2022

Abstract: Preference-based optimization algorithms are iterative procedures that seek the optimal value for a decision variable based only on comparisons between pairs of samples. At each iteration, a human decision-maker is asked to express a preference between two samples, indicating which one, if any, is better than the other. The optimization procedure must use the observed preferences to find the value of the decision variable that is most preferred by the human decision-maker, while also minimizing the number of comparisons. In this work, we propose GLISp-r, an extension of a recent preference-based optimization procedure called GLISp. The latter uses a Radial Basis Function surrogate to describe the tastes of the individual. Iteratively, GLISp proposes new samples to compare with the current best candidate by trading off exploitation of the surrogate model and exploration of the decision space. In GLISp-r, we propose a different criterion for selecting a new candidate sample, inspired by MSRS, a popular procedure in the black-box optimization framework (which is closely related to the preference-based one). Compared to GLISp, GLISp-r is less likely to get stuck on local optimizers of the preference-based optimization problem. We support this claim theoretically, with a proof of convergence, and empirically, by comparing the performance of GLISp and GLISp-r on different benchmark optimization problems.
