Maxwell Meyer

BEN: Using Confidence-Guided Matting for Dichotomous Image Segmentation

Jan 08, 2025

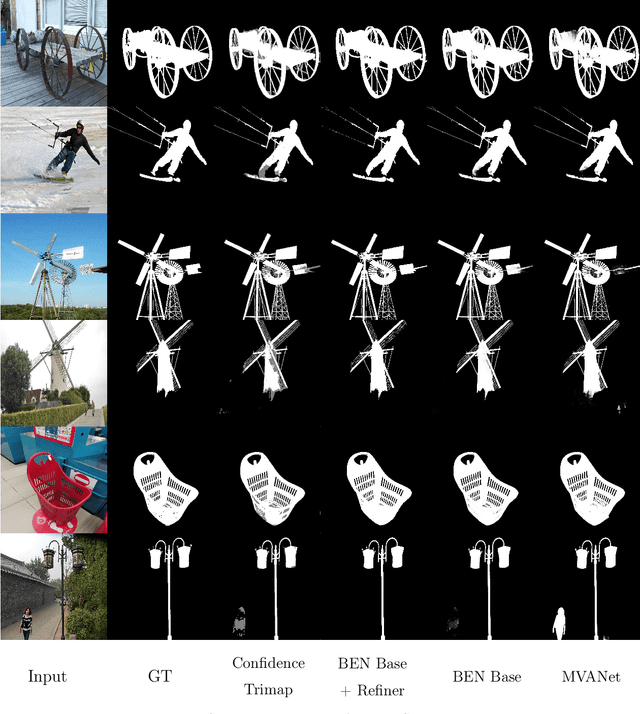

Abstract:Current approaches to dichotomous image segmentation (DIS) treat image matting and object segmentation as fundamentally different tasks. As improvements in image segmentation become increasingly challenging to achieve, combining image matting and grayscale segmentation techniques offers promising new directions for architectural innovation. Inspired by the possibility of aligning these two model tasks, we propose a new architectural approach for DIS called Confidence-Guided Matting (CGM). We created the first CGM model called Background Erase Network (BEN). BEN is comprised of two components: BEN Base for initial segmentation and BEN Refiner for confidence refinement. Our approach achieves substantial improvements over current state-of-the-art methods on the DIS5K validation dataset, demonstrating that matting-based refinement can significantly enhance segmentation quality. This work opens new possibilities for cross-pollination between matting and segmentation techniques in computer vision.

IO Transformer: Evaluating SwinV2-Based Reward Models for Computer Vision

Oct 31, 2024Abstract:Transformers and their derivatives have achieved state-of-the-art performance across text, vision, and speech recognition tasks. However, minimal effort has been made to train transformers capable of evaluating the output quality of other models. This paper examines SwinV2-based reward models, called the Input-Output Transformer (IO Transformer) and the Output Transformer. These reward models can be leveraged for tasks such as inference quality evaluation, data categorization, and policy optimization. Our experiments demonstrate highly accurate model output quality assessment across domains where the output is entirely dependent on the input, with the IO Transformer achieving perfect evaluation accuracy on the Change Dataset 25 (CD25). We also explore modified Swin V2 architectures. Ultimately Swin V2 remains on top with a score of 95.41 % on the IO Segmentation Dataset, outperforming the IO Transformer in scenarios where the output is not entirely dependent on the input. Our work expands the application of transformer architectures to reward modeling in computer vision and provides critical insights into optimizing these models for various tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge