Mahmood Azhar Qureshi

Phantom: A High-Performance Computational Core for Sparse Convolutional Neural Networks

Nov 09, 2021

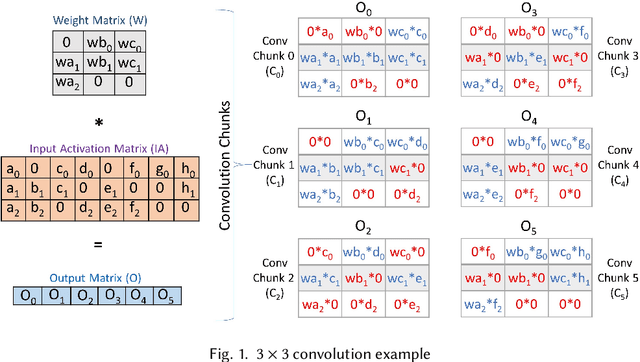

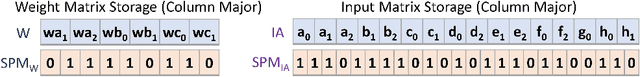

Abstract:Sparse convolutional neural networks (CNNs) have gained significant traction over the past few years as sparse CNNs can drastically decrease the model size and computations, if exploited befittingly, as compared to their dense counterparts. Sparse CNNs often introduce variations in the layer shapes and sizes, which can prevent dense accelerators from performing well on sparse CNN models. Recently proposed sparse accelerators like SCNN, Eyeriss v2, and SparTen, actively exploit the two-sided or full sparsity, that is, sparsity in both weights and activations, for performance gains. These accelerators, however, either have inefficient micro-architecture, which limits their performance, have no support for non-unit stride convolutions and fully-connected (FC) layers, or suffer massively from systematic load imbalance. To circumvent these issues and support both sparse and dense models, we propose Phantom, a multi-threaded, dynamic, and flexible neural computational core. Phantom uses sparse binary mask representation to actively lookahead into sparse computations, and dynamically schedule its computational threads to maximize the thread utilization and throughput. We also generate a two-dimensional (2D) mesh architecture of Phantom neural computational cores, which we refer to as Phantom-2D accelerator, and propose a novel dataflow that supports all layers of a CNN, including unit and non-unit stride convolutions, and FC layers. In addition, Phantom-2D uses a two-level load balancing strategy to minimize the computational idling, thereby, further improving the hardware utilization. To show support for different types of layers, we evaluate the performance of the Phantom architecture on VGG16 and MobileNet. Our simulations show that the Phantom-2D accelerator attains a performance gain of 12x, 4.1x, 1.98x, and 2.36x, over dense architectures, SCNN, SparTen, and Eyeriss v2, respectively.

NeuroMAX: A High Throughput, Multi-Threaded, Log-Based Accelerator for Convolutional Neural Networks

Jul 19, 2020

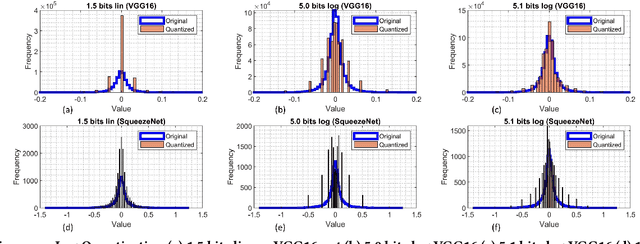

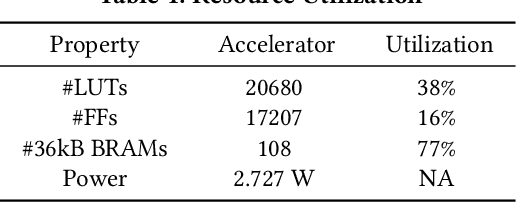

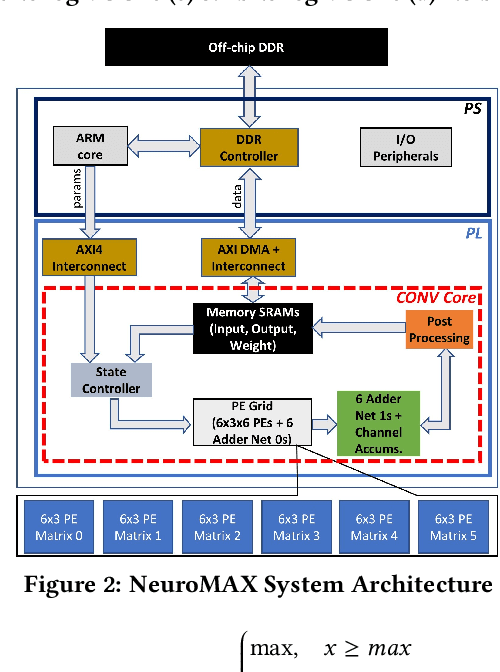

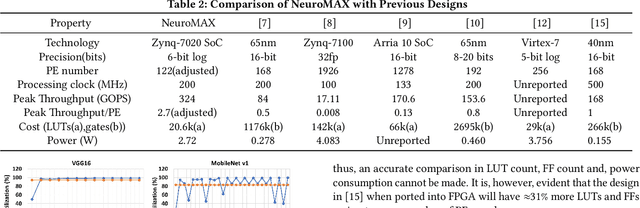

Abstract:Convolutional neural networks (CNNs) require high throughput hardware accelerators for real time applications owing to their huge computational cost. Most traditional CNN accelerators rely on single core, linear processing elements (PEs) in conjunction with 1D dataflows for accelerating convolution operations. This limits the maximum achievable ratio of peak throughput per PE count to unity. Most of the past works optimize their dataflows to attain close to a 100% hardware utilization to reach this ratio. In this paper, we introduce a high throughput, multi-threaded, log-based PE core. The designed core provides a 200% increase in peak throughput per PE count while only incurring a 6% increase in area overhead compared to a single, linear multiplier PE core with same output bit precision. We also present a 2D weight broadcast dataflow which exploits the multi-threaded nature of the PE cores to achieve a high hardware utilization per layer for various CNNs. The entire architecture, which we refer to as NeuroMAX, is implemented on Xilinx Zynq 7020 SoC at 200 MHz processing clock. Detailed analysis is performed on throughput, hardware utilization, area and power breakdown, and latency to show performance improvement compared to previous FPGA and ASIC designs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge