Ludovic De Villelongue

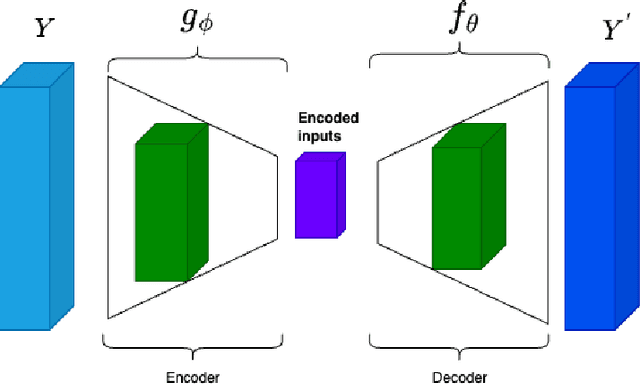

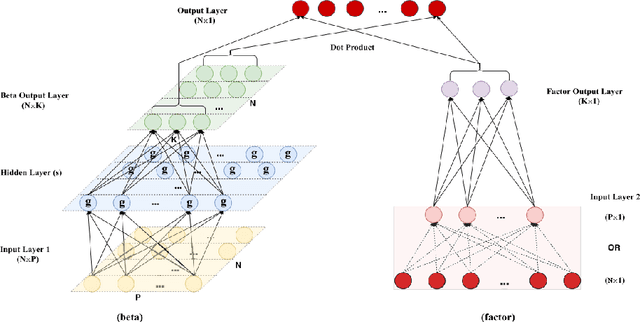

An Attention Free Conditional Autoencoder For Anomaly Detection in Cryptocurrencies

Apr 20, 2023

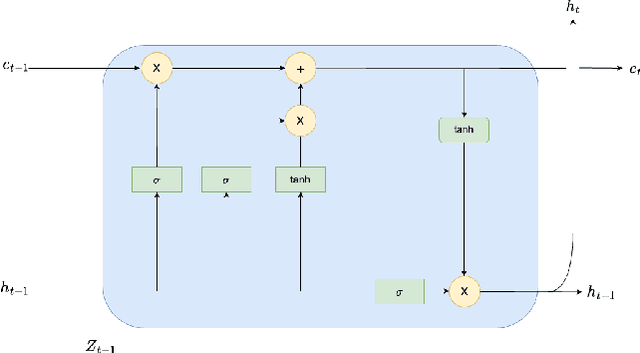

Abstract: Identifying anomalies in time series is difficult, especially when the data are very noisy. Denoising techniques can remove the noise, but they can also cause a significant loss of information. To detect anomalies in time series, we propose an attention free conditional autoencoder (AF-CA). Starting from a conditional autoencoder, we add an Attention-Free LSTM layer \cite{inzirillo2022attention} to make anomaly detection more reliable and more powerful. We compare the results of our Attention Free Conditional Autoencoder with those of an LSTM autoencoder and find that it improves the explanatory power of the model and therefore the detection of anomalies in noisy time series.

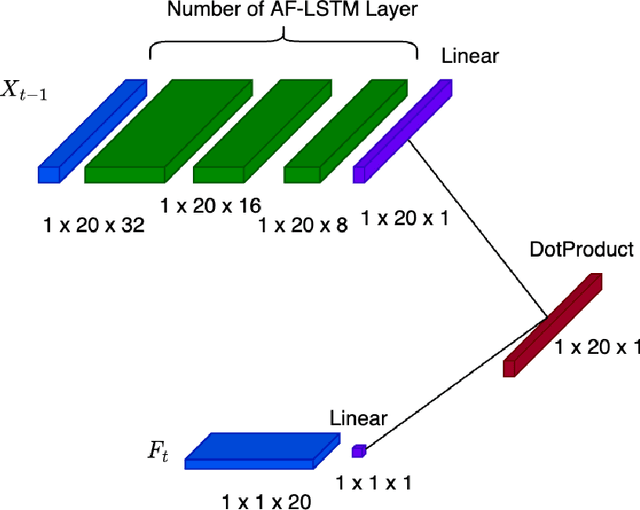

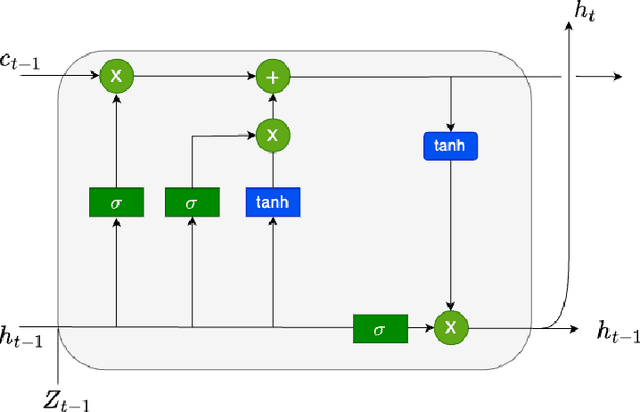

An Attention Free Long Short-Term Memory for Time Series Forecasting

Sep 20, 2022

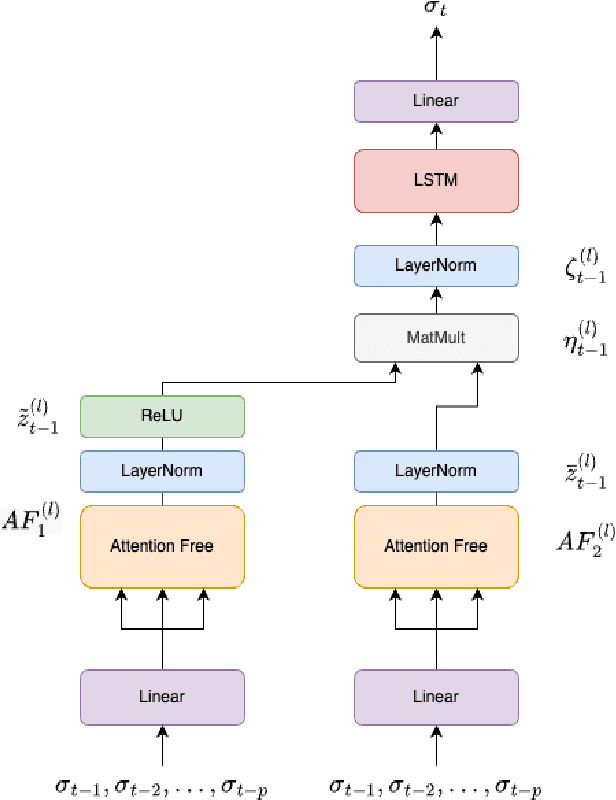

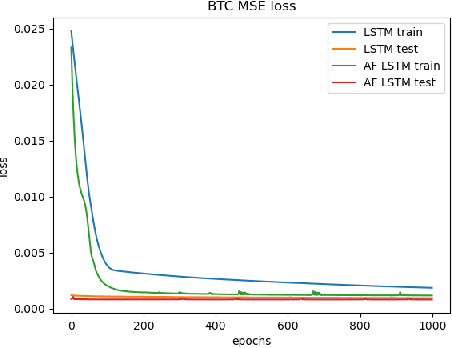

Abstract: Deep learning is playing an increasingly important role in time series analysis. We focus on time series forecasting using an attention free mechanism, a more efficient framework, and propose a new architecture for time series prediction in settings where linear models seem unable to capture the time dependence. The architecture is built from attention free LSTM layers and outperforms linear models for conditional variance prediction. Our findings confirm the validity of our model, which also improves the predictive capacity of an LSTM while making the learning task more efficient.
