Khaled R Ahmed

FUME: Fused Unified Multi-Gas Emission Network for Livestock Rumen Acidosis Detection

Jan 13, 2026Abstract:Ruminal acidosis is a prevalent metabolic disorder in dairy cattle causing significant economic losses and animal welfare concerns. Current diagnostic methods rely on invasive pH measurement, limiting scalability for continuous monitoring. We present FUME (Fused Unified Multi-gas Emission Network), the first deep learning approach for rumen acidosis detection from dual-gas optical imaging under in vitro conditions. Our method leverages complementary carbon dioxide (CO2) and methane (CH4) emission patterns captured by infrared cameras to classify rumen health into Healthy, Transitional, and Acidotic states. FUME employs a lightweight dual-stream architecture with weight-shared encoders, modality-specific self-attention, and channel attention fusion, jointly optimizing gas plume segmentation and classification of dairy cattle health. We introduce the first dual-gas OGI dataset comprising 8,967 annotated frames across six pH levels with pixel-level segmentation masks. Experiments demonstrate that FUME achieves 80.99% mIoU and 98.82% classification accuracy while using only 1.28M parameters and 1.97G MACs--outperforming state-of-the-art methods in segmentation quality with 10x lower computational cost. Ablation studies reveal that CO2 provides the primary discriminative signal and dual-task learning is essential for optimal performance. Our work establishes the feasibility of gas emission-based livestock health monitoring, paving the way for practical, in vitro acidosis detection systems. Codes are available at https://github.com/taminulislam/fume.

WeedSense: Multi-Task Learning for Weed Segmentation, Height Estimation, and Growth Stage Classification

Aug 20, 2025Abstract:Weed management represents a critical challenge in agriculture, significantly impacting crop yields and requiring substantial resources for control. Effective weed monitoring and analysis strategies are crucial for implementing sustainable agricultural practices and site-specific management approaches. We introduce WeedSense, a novel multi-task learning architecture for comprehensive weed analysis that jointly performs semantic segmentation, height estimation, and growth stage classification. We present a unique dataset capturing 16 weed species over an 11-week growth cycle with pixel-level annotations, height measurements, and temporal labels. WeedSense leverages a dual-path encoder incorporating Universal Inverted Bottleneck blocks and a Multi-Task Bifurcated Decoder with transformer-based feature fusion to generate multi-scale features and enable simultaneous prediction across multiple tasks. WeedSense outperforms other state-of-the-art models on our comprehensive evaluation. On our multi-task dataset, WeedSense achieves mIoU of 89.78% for segmentation, 1.67cm MAE for height estimation, and 99.99% accuracy for growth stage classification while maintaining real-time inference at 160 FPS. Our multitask approach achieves 3$\times$ faster inference than sequential single-task execution and uses 32.4% fewer parameters. Please see our project page at weedsense.github.io.

GasTwinFormer: A Hybrid Vision Transformer for Livestock Methane Emission Segmentation and Dietary Classification in Optical Gas Imaging

Aug 20, 2025Abstract:Livestock methane emissions represent 32% of human-caused methane production, making automated monitoring critical for climate mitigation strategies. We introduce GasTwinFormer, a hybrid vision transformer for real-time methane emission segmentation and dietary classification in optical gas imaging through a novel Mix Twin encoder alternating between spatially-reduced global attention and locally-grouped attention mechanisms. Our architecture incorporates a lightweight LR-ASPP decoder for multi-scale feature aggregation and enables simultaneous methane segmentation and dietary classification in a unified framework. We contribute the first comprehensive beef cattle methane emission dataset using OGI, containing 11,694 annotated frames across three dietary treatments. GasTwinFormer achieves 74.47% mIoU and 83.63% mF1 for segmentation while maintaining exceptional efficiency with only 3.348M parameters, 3.428G FLOPs, and 114.9 FPS inference speed. Additionally, our method achieves perfect dietary classification accuracy (100%), demonstrating the effectiveness of leveraging diet-emission correlations. Extensive ablation studies validate each architectural component, establishing GasTwinFormer as a practical solution for real-time livestock emission monitoring. Please see our project page at gastwinformer.github.io.

Real Time Deep Learning Weapon Detection Techniques for Mitigating Lone Wolf Attacks

May 23, 2024Abstract:Firearm Shootings and stabbings attacks are intense and result in severe trauma and threat to public safety. Technology is needed to prevent lone-wolf attacks without human supervision. Hence designing an automatic weapon detection using deep learning, is an optimized solution to localize and detect the presence of weapon objects using Neural Networks. This research focuses on both unified and II-stage object detectors whose resultant model not only detects the presence of weapons but also classifies with respective to its weapon classes, including handgun, knife, revolver, and rifle, along with person detection. This research focuses on (You Look Only Once) family and Faster RCNN family for model validation and training. Pruning and Ensembling techniques were applied to YOLOv5 to enhance their speed and performance. models achieve the highest score of 78% with an inference speed of 8.1ms. However, Faster R-CNN models achieve the highest AP 89%.

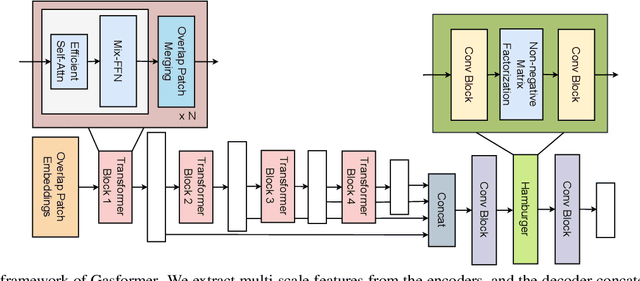

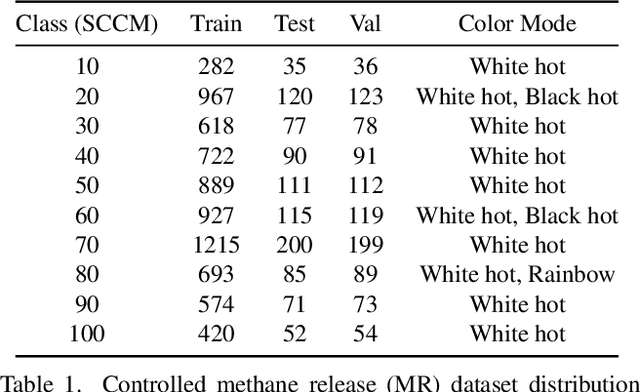

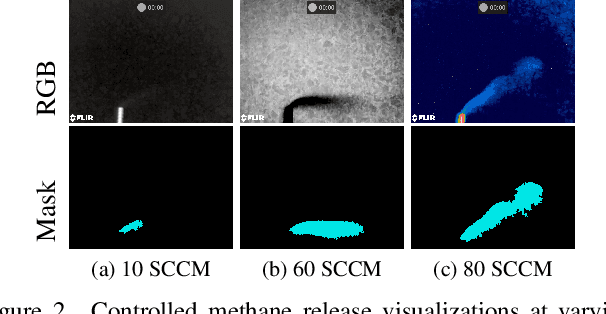

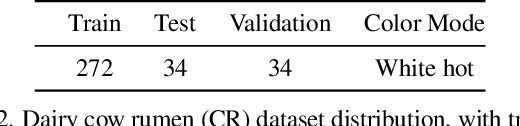

Gasformer: A Transformer-based Architecture for Segmenting Methane Emissions from Livestock in Optical Gas Imaging

Apr 16, 2024

Abstract:Methane emissions from livestock, particularly cattle, significantly contribute to climate change. Effective methane emission mitigation strategies are crucial as the global population and demand for livestock products increase. We introduce Gasformer, a novel semantic segmentation architecture for detecting low-flow rate methane emissions from livestock, and controlled release experiments using optical gas imaging. We present two unique datasets captured with a FLIR GF77 OGI camera. Gasformer leverages a Mix Vision Transformer encoder and a Light-Ham decoder to generate multi-scale features and refine segmentation maps. Gasformer outperforms other state-of-the-art models on both datasets, demonstrating its effectiveness in detecting and segmenting methane plumes in controlled and real-world scenarios. On the livestock dataset, Gasformer achieves mIoU of 88.56%, surpassing other state-of-the-art models. Materials are available at: github.com/toqitahamid/Gasformer.

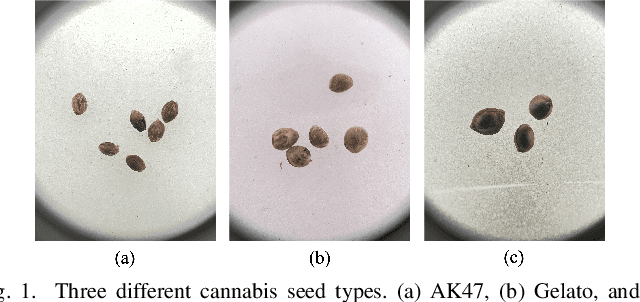

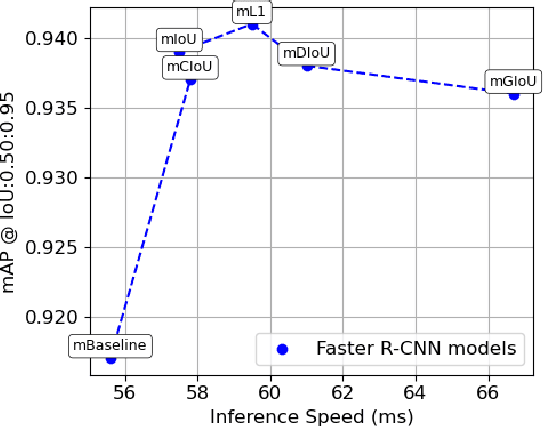

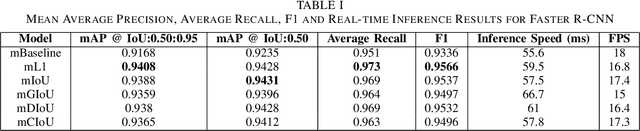

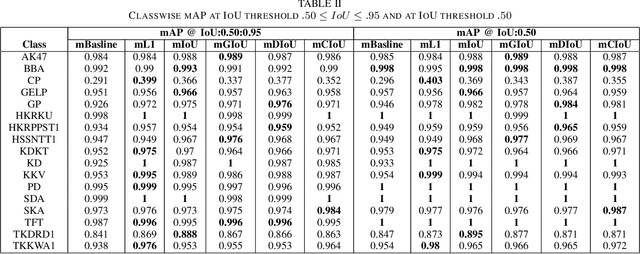

Cannabis Seed Variant Detection using Faster R-CNN

Mar 15, 2024

Abstract:Analyzing and detecting cannabis seed variants is crucial for the agriculture industry. It enables precision breeding, allowing cultivators to selectively enhance desirable traits. Accurate identification of seed variants also ensures regulatory compliance, facilitating the cultivation of specific cannabis strains with defined characteristics, ultimately improving agricultural productivity and meeting diverse market demands. This paper presents a study on cannabis seed variant detection by employing a state-of-the-art object detection model Faster R-CNN. This study implemented the model on a locally sourced cannabis seed dataset in Thailand, comprising 17 distinct classes. We evaluate six Faster R-CNN models by comparing performance on various metrics and achieving a mAP score of 94.08\% and an F1 score of 95.66\%. This paper presents the first known application of deep neural network object detection models to the novel task of visually identifying cannabis seed types.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge