José A. Font

NRSurNN3dq4: A Deep Learning Powered Numerical Relativity Surrogate for Binary Black Hole Waveforms

Dec 09, 2024

Abstract: Gravitational-wave approximants are widely used tools in gravitational-wave astronomy. They allow for dense coverage of the parameter space of binary black hole (BBH) mergers for parameter inference or, more generally, matched-filtering tasks, while avoiding the computationally expensive full evolution of numerical relativity simulations. However, this comes at a slight cost in accuracy when compared to numerical relativity waveforms, depending on the approach. One way to minimize this cost is to construct so-called surrogate models which, instead of using approximate physics or phenomenological formulae, interpolate within the space of numerical relativity waveforms. In this work, we introduce NRSurNN3dq4, a surrogate model for non-precessing BBH merger waveforms powered by neural networks. By relying on the power of deep learning, this approximant is remarkably fast and competitively accurate: it can generate millions of waveforms in a tenth of a second, while mismatches with numerical relativity waveforms are kept below $10^{-3}$. We implement this approximant within the bilby framework for gravitational-wave parameter inference, and show that it is suitable for parameter estimation tasks.

Convolutional Neural Networks for the classification of glitches in gravitational-wave data streams

Mar 24, 2023

Abstract: We investigate the use of Convolutional Neural Networks (including the modern ConvNeXt network family) to classify transient noise signals (i.e. glitches) and gravitational waves in data from the Advanced LIGO detectors. First, we use models with a supervised learning approach, both trained from scratch using the Gravity Spy dataset and employing transfer learning by fine-tuning pre-trained models on this dataset. Second, we explore a self-supervised approach, pre-training models with automatically generated pseudo-labels. Our findings are very close to existing results for the same dataset, reaching F1 scores of 97.18% (94.15%) for the best supervised (self-supervised) model. We further test the models using actual gravitational-wave signals from LIGO-Virgo's O3 run. Although trained using data from previous runs (O1 and O2), the models show good performance, in particular when using transfer learning. We find that transfer learning improves the scores without the need for any training on real signals apart from the fewer than 50 chirp examples from hardware injections present in the Gravity Spy dataset. This motivates the use of transfer learning not only for glitch classification but also for signal classification.

Machine-Learning Love: classifying the equation of state of neutron stars with Transformers

Oct 15, 2022

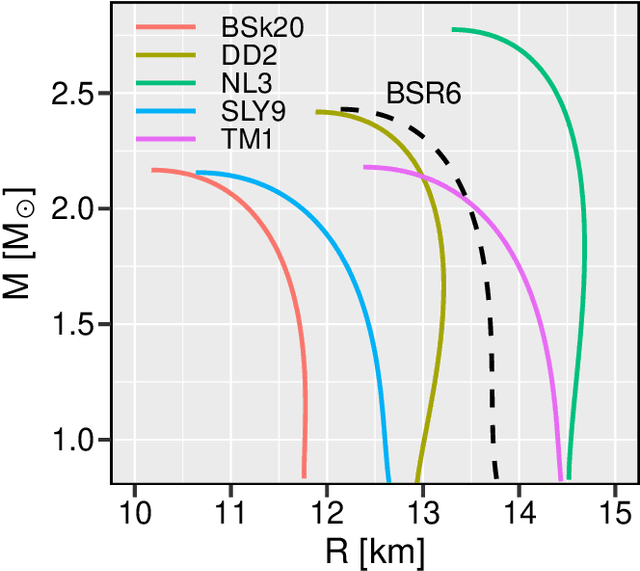

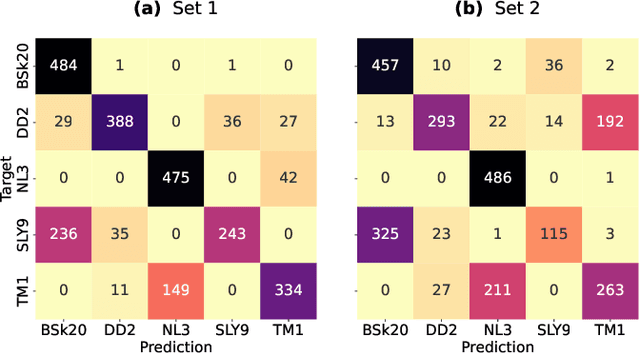

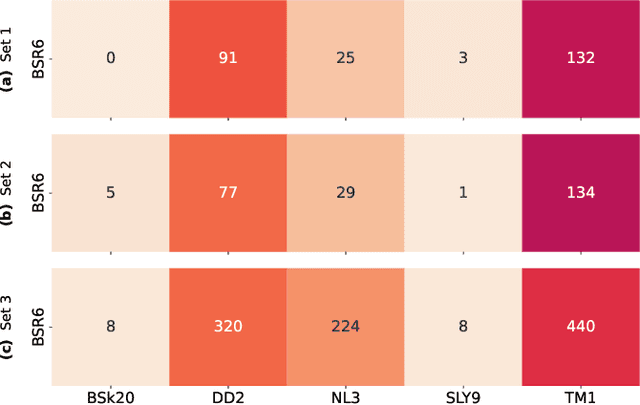

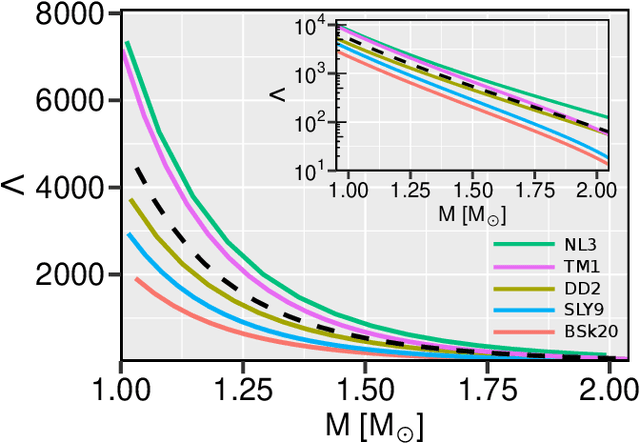

Abstract: The use of the Audio Spectrogram Transformer (AST) model for gravitational-wave data analysis is investigated. The AST machine-learning model is a convolution-free classifier that captures long-range global dependencies through a purely attention-based mechanism. In this paper, the model is applied to a simulated dataset of inspiral gravitational-wave signals from binary neutron star coalescences, built from five distinct, cold equations of state (EOS) of nuclear matter. From the analysis of the mass dependence of the tidal deformability parameter for each EOS class, it is shown that the AST model achieves a promising performance in correctly classifying the EOS purely from the gravitational-wave signals, especially when the component masses of the binary system are in the range $[1,1.5]M_{\odot}$. Furthermore, the generalization ability of the model is investigated by using gravitational-wave signals from a new EOS not used during the training of the model, achieving fairly satisfactory results. Overall, the results, obtained using the simplified setup of noise-free waveforms, show that the AST model, once trained, might allow for the instantaneous inference of the cold nuclear matter EOS directly from the inspiral gravitational-wave signals produced in binary neutron star coalescences.

Identification of Binary Neutron Star Mergers in Gravitational-Wave Data Using YOLO One-Shot Object Detection

Jul 01, 2022

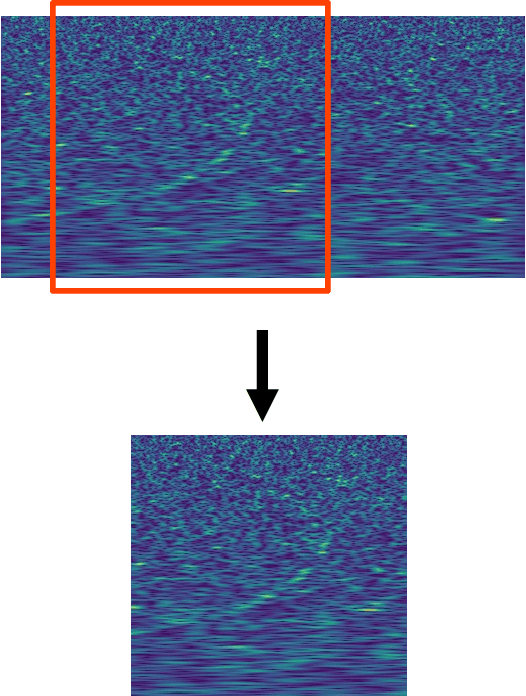

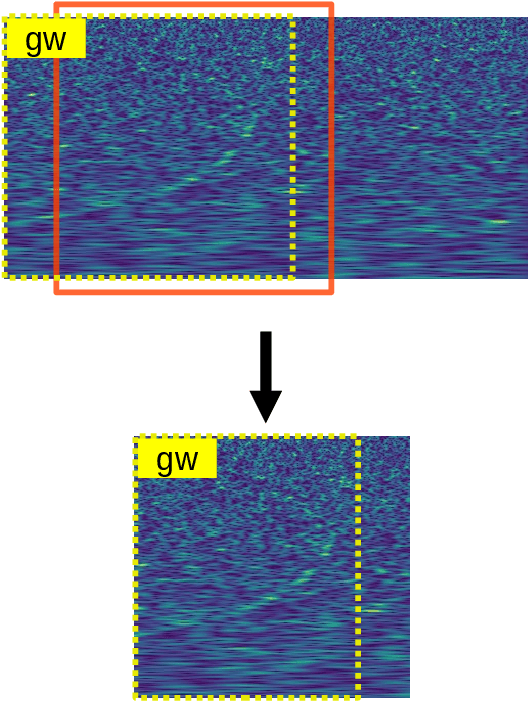

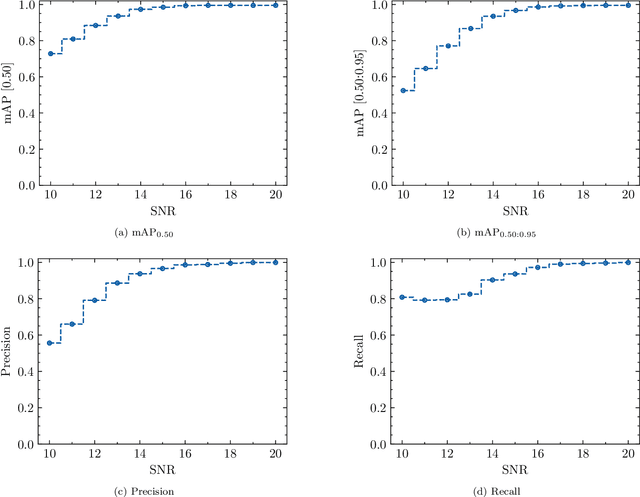

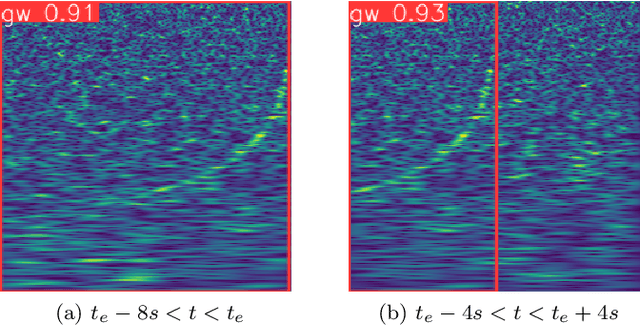

Abstract: We demonstrate the application of the YOLOv5 model, a general-purpose convolution-based single-shot object detection model, to the task of detecting binary neutron star (BNS) coalescence events in gravitational-wave data from current-generation interferometer detectors. We also present a thorough explanation of the synthetic data generation and preparation tasks, based on approximant waveform models, used for the model training, validation and testing steps. Using this approach, we achieve mean average precision ($\text{mAP}_{[0.50]}$) values of 0.945 for a single-class validation dataset and as high as 0.978 for test datasets. Moreover, the trained model is successful in identifying the GW170817 event in the LIGO H1 detector data. The identification of this event is also possible for the LIGO L1 detector data with an additional pre-processing step, without the need to remove the large glitch in the final stages of the inspiral. The detection of the GW190425 event is less successful, which attests to performance degradation with the signal-to-noise ratio. Our study indicates that the YOLOv5 model is an interesting approach for first-stage detection alarm pipelines and, when integrated in more complex pipelines, for real-time inference of physical source parameters.
