Jonni Virtema

Halting Recurrent GNNs and the Graded $\mu$-Calculus

May 16, 2025

Abstract: Graph Neural Networks (GNNs) are a class of machine-learning models that operate on graph-structured data. Their expressive power is intimately related to logics that are invariant under graded bisimilarity. Current proposals for recurrent GNNs either assume that the graph size is given to the model or lack termination guarantees. In this paper, we propose a halting mechanism for recurrent GNNs. We prove that our halting model can express all node classifiers definable in graded modal mu-calculus, even for the standard GNN variant that is oblivious to the graph size. A recent breakthrough in the study of the expressivity of graded modal mu-calculus in the finite suggests that, conversely, restricted to node classifiers definable in monadic second-order logic, recurrent GNNs can express only node classifiers definable in graded modal mu-calculus. To prove our main result, we develop a new approximate semantics for graded mu-calculus, which we believe to be of independent interest. We use this new semantics to obtain a new model-checking algorithm, called the counting algorithm, which is oblivious to the graph size. In a final step, we show that the counting algorithm can be implemented on a halting recurrent GNN.
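To make the logic in the abstract above concrete, here is a small Python sketch of the standard (exact) semantics of graded modal mu-calculus on a finite directed graph, computing least and greatest fixed points by naive iteration until the set of satisfying nodes stops changing. The tuple encoding of formulas and the function name `evaluate` are hypothetical choices for illustration. This is the textbook fixed-point semantics, not the approximate semantics or the counting algorithm proposed in the paper. It assumes fixed-point variables occur only positively, so each iteration is monotone and terminates.

```python
from typing import Dict, FrozenSet, List, Optional, Set

# Hypothetical encoding of graded mu-calculus formulas as nested tuples:
#   ('prop', p) | ('not', f) | ('and', f, g) | ('or', f, g)
#   ('diamond', k, f)   holds if at least k successors satisfy f
#   ('var', X) | ('mu', X, f) | ('nu', X, f)
Formula = tuple
Graph = Dict[int, List[int]]  # node -> list of successor nodes


def evaluate(formula: Formula, graph: Graph, labels: Dict[int, Set[str]],
             env: Optional[Dict[str, FrozenSet[int]]] = None) -> FrozenSet[int]:
    """Return the set of nodes satisfying `formula` under the standard semantics."""
    env = env if env is not None else {}
    nodes = frozenset(graph)
    kind = formula[0]
    if kind == 'prop':
        return frozenset(v for v in nodes if formula[1] in labels.get(v, set()))
    if kind == 'not':
        return nodes - evaluate(formula[1], graph, labels, env)
    if kind in ('and', 'or'):
        left = evaluate(formula[1], graph, labels, env)
        right = evaluate(formula[2], graph, labels, env)
        return left & right if kind == 'and' else left | right
    if kind == 'diamond':
        k, sat = formula[1], evaluate(formula[2], graph, labels, env)
        # Graded modality: count the successors that lie in `sat`.
        return frozenset(v for v in nodes
                         if sum(1 for u in graph[v] if u in sat) >= k)
    if kind == 'var':
        return env[formula[1]]
    if kind in ('mu', 'nu'):
        var, body = formula[1], formula[2]
        # Least fixed point starts from the empty set, greatest from all nodes.
        current = frozenset() if kind == 'mu' else nodes
        while True:
            nxt = evaluate(body, graph, labels, {**env, var: current})
            if nxt == current:  # stabilized: fixed point reached
                return current
            current = nxt
    raise ValueError(f"unknown connective: {kind!r}")


# Usage: "a p-labelled node is reachable" and its graded variant.
graph = {0: [1, 2], 1: [3], 2: [3], 3: [], 4: []}
labels = {3: {'p'}}
reach_p = ('mu', 'X', ('or', ('prop', 'p'), ('diamond', 1, ('var', 'X'))))
print(sorted(evaluate(reach_p, graph, labels)))                  # [0, 1, 2, 3]
print(sorted(evaluate(('diamond', 2, reach_p), graph, labels)))  # [0]
```

The graded modality is what separates this logic from the plain modal mu-calculus: the second query holds only at node 0, the one node with at least two successors from which a `p`-node is reachable.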

Graph Neural Networks and Arithmetic Circuits

Feb 27, 2024

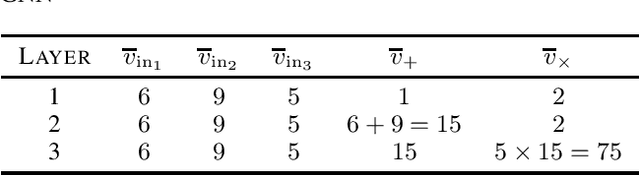

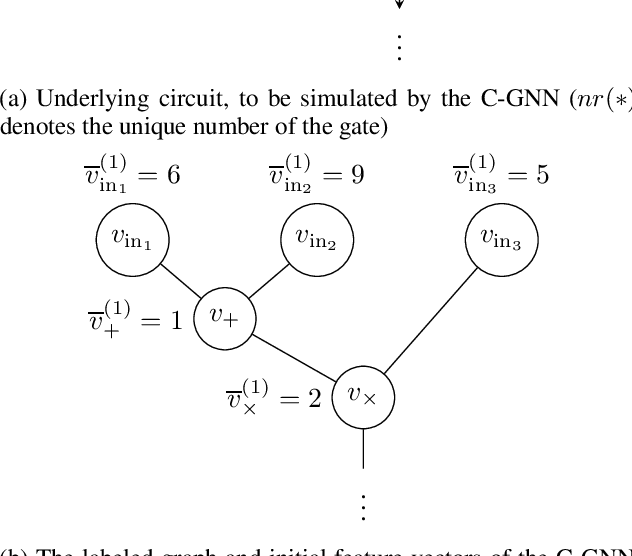

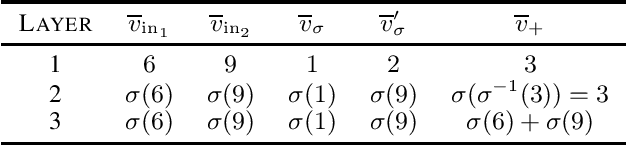

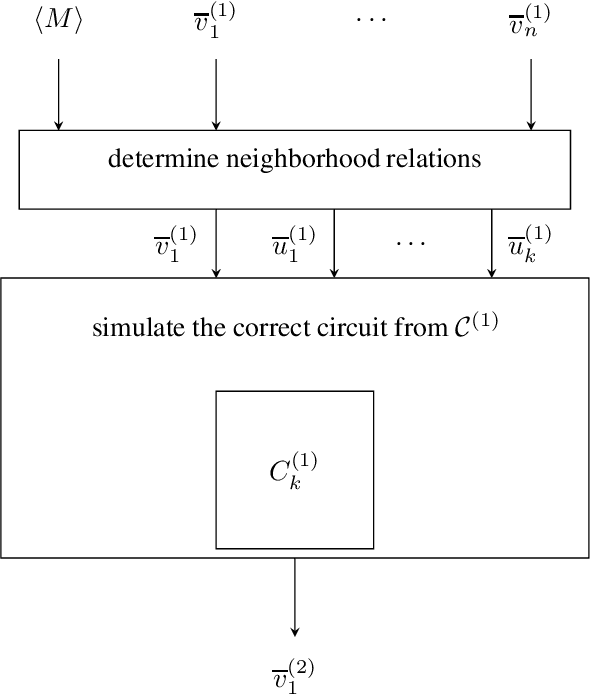

Abstract: We characterize the computational power of neural networks that follow the graph neural network (GNN) architecture, not restricted to aggregate-combine GNNs or other particular types. We establish an exact correspondence between the expressivity of GNNs using diverse activation functions and arithmetic circuits over real numbers. In our results the activation function of the network becomes a gate type in the circuit. Our result holds for families of constant depth circuits and networks, both uniformly and non-uniformly, for all common activation functions.
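As a rough illustration of the idea that an activation function becomes a gate type, the Python sketch below evaluates an arithmetic circuit over the reals whose gates are inputs, constants, sums, products, and activation gates, and encodes one sum-aggregation message-passing update for a single node as such a circuit. The gate encoding, the names `eval_circuit` and `layer`, and the weights are hypothetical. This shows only the basic correspondence for one update step, not the paper's construction for general GNNs or its uniform and non-uniform circuit families.

```python
import math
from typing import Callable, Dict, Tuple

# Hypothetical circuit encoding: gate name -> (gate type, arguments).
# 'input' and 'const' take one argument; '+' and '*' take any number of
# gate names; an activation gate ('relu', 'sigmoid') takes exactly one.
Circuit = Dict[str, Tuple[str, list]]

ACTIVATION_GATES: Dict[str, Callable[[float], float]] = {
    'relu': lambda z: max(0.0, z),
    'sigmoid': lambda z: 1.0 / (1.0 + math.exp(-z)),
}


def eval_circuit(circuit: Circuit, inputs: Dict[str, float], output: str) -> float:
    """Evaluate an arithmetic circuit (a DAG of gates) over the reals."""
    memo: Dict[str, float] = {}

    def value(gate: str) -> float:
        if gate in memo:  # shared subcircuits are evaluated once
            return memo[gate]
        kind, args = circuit[gate]
        if kind == 'input':
            v = inputs[args[0]]
        elif kind == 'const':
            v = float(args[0])
        elif kind == '+':
            v = sum(value(a) for a in args)
        elif kind == '*':
            v = math.prod(value(a) for a in args)
        elif kind in ACTIVATION_GATES:
            v = ACTIVATION_GATES[kind](value(args[0]))
        else:
            raise ValueError(f"unknown gate type: {kind!r}")
        memo[gate] = v
        return v

    return value(output)


# One sum-aggregation GNN update for a node v with neighbours u1, u2:
#   x_v' = relu(a * x_v + b * (x_u1 + x_u2) + c), with a = 0.5, b = 2, c = -1.
layer: Circuit = {
    'xv': ('input', ['x_v']), 'xu1': ('input', ['x_u1']), 'xu2': ('input', ['x_u2']),
    'a': ('const', [0.5]), 'b': ('const', [2.0]), 'c': ('const', [-1.0]),
    'agg': ('+', ['xu1', 'xu2']),        # aggregate: sum over neighbours
    'self': ('*', ['a', 'xv']),
    'msg': ('*', ['b', 'agg']),
    'pre': ('+', ['self', 'msg', 'c']),  # combine: affine map
    'out': ('relu', ['pre']),            # the activation is just another gate
}
print(eval_circuit(layer, {'x_v': 1.0, 'x_u1': 2.0, 'x_u2': -1.0}, 'out'))  # 1.5
```

Swapping `'relu'` for `'sigmoid'` in the `'out'` gate changes only the gate type, which mirrors the abstract's point that the choice of activation function determines which gates the corresponding circuits may use.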

Complexity of Neural Network Training and ETR: Extensions with Effectively Continuous Functions

May 19, 2023

Abstract: We study the complexity of the problem of training neural networks defined via various activation functions. The training problem is known to be $\exists\mathbb{R}$-complete with respect to linear activation functions and the ReLU activation function. We consider the complexity of the problem with respect to the sigmoid activation function and other effectively continuous functions. We show that these training problems are polynomial-time many-one bireducible to the existential theory of the reals extended with the corresponding activation functions. In particular, we establish that the sigmoid activation function leads to the existential theory of the reals with the exponential function. It is thus open, and equivalent to the decidability of the existential theory of the reals with the exponential function, whether training neural networks using the sigmoid activation function is algorithmically solvable. In contrast, we obtain that the training problem is undecidable if sinusoidal activation functions are considered. Finally, we obtain general upper bounds for the complexity of the training problem in the form of low levels of the arithmetical hierarchy.
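To see informally why the sigmoid brings in the exponential function, consider a toy training instance, assumed here purely for illustration: a single neuron with weight $w$, bias $b$, sigmoid activation $\sigma$, data points $(x_i, y_i)$ for $i = 1, \dots, n$, squared error, and an error bound $\gamma$. Asking whether this neuron can be trained to error at most $\gamma$ amounts to asking whether an existential sentence holds:

$$\exists w\,\exists b\;\; \sum_{i=1}^{n} \bigl(\sigma(w x_i + b) - y_i\bigr)^2 \le \gamma, \qquad \sigma(z) = \frac{1}{1 + e^{-z}}.$$

Introducing fresh existential variables $s_i$ with $s_i \bigl(1 + \exp(-(w x_i + b))\bigr) = 1$ removes the division, so the sentence can be written with polynomial arithmetic plus $\exp$, that is, in the existential theory of the reals extended with the exponential function. This sketches only one direction for one toy architecture; the general bireducibility is the result claimed in the abstract.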