Jayashree Kalpathy-Cramer

Distribution-Free Federated Learning with Conformal Predictions

Oct 14, 2021

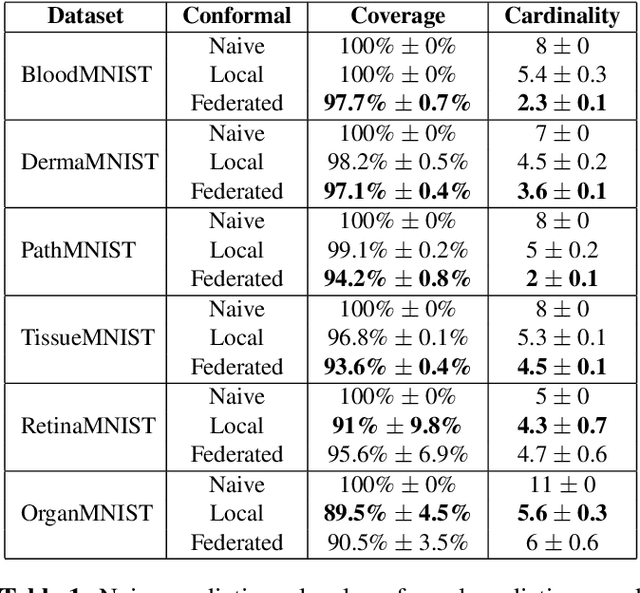

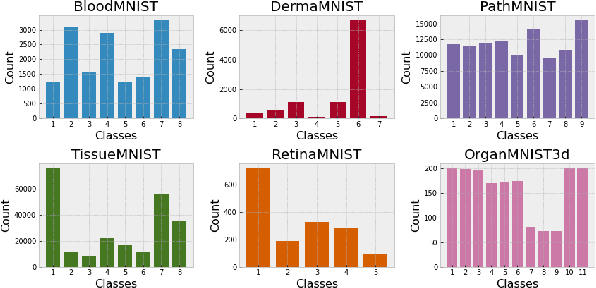

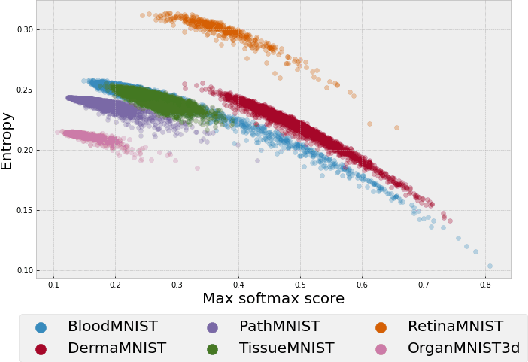

Abstract: Federated learning has attracted considerable interest for collaborative machine learning in healthcare, where it can leverage separate institutional datasets while maintaining patient privacy. However, challenges such as poor calibration and lack of interpretability may hamper widespread deployment of federated models in clinical practice and lead to user distrust or misuse of ML tools in high-stakes clinical decision-making. In this paper, we propose to address these challenges by incorporating an adaptive conformal framework into federated learning to ensure distribution-free prediction sets that provide coverage guarantees and uncertainty estimates without requiring any additional modifications to the model or assumptions. Empirical results on the MedMNIST medical imaging benchmark demonstrate that our federated method provides tighter coverage with lower average cardinality than local conformal predictions on 6 different medical imaging benchmark datasets in 2D and 3D multi-class classification tasks. Further, we correlate class entropy and prediction set size to assess task uncertainty with conformal methods.
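For readers unfamiliar with conformal prediction sets, the following is a minimal sketch of the basic split conformal procedure for a multi-class classifier. It is not the adaptive or federated method proposed in the paper; it assumes softmax outputs are already available for a held-out calibration set, and the function names `conformal_threshold` and `prediction_sets` are hypothetical, chosen only for illustration.

```python
import numpy as np

def conformal_threshold(cal_probs, cal_labels, alpha=0.1):
    """Score threshold from a held-out calibration set (split conformal).

    cal_probs: (n, K) array of softmax probabilities.
    cal_labels: (n,) array of integer class labels.
    alpha: target miscoverage rate (0.1 targets 90% coverage).
    """
    n = len(cal_labels)
    # Nonconformity score: 1 minus the probability assigned to the true class.
    scores = np.sort(1.0 - cal_probs[np.arange(n), cal_labels])
    # Rank of the finite-sample corrected quantile.
    k = int(np.ceil((n + 1) * (1 - alpha)))
    # With too few calibration samples, fall back to including every class.
    return scores[k - 1] if k <= n else 1.0

def prediction_sets(test_probs, qhat):
    """Boolean mask: entry [i, k] is True if class k is in the set for sample i."""
    return (1.0 - test_probs) <= qhat

# Toy calibration data (illustrative values, not from the paper).
cal_probs = np.array([
    [0.70, 0.20, 0.10],
    [0.10, 0.80, 0.10],
    [0.30, 0.30, 0.40],
    [0.50, 0.25, 0.25],
])
cal_labels = np.array([0, 1, 2, 0])

qhat = conformal_threshold(cal_probs, cal_labels, alpha=0.5)
sets = prediction_sets(np.array([[0.5, 0.3, 0.2]]), qhat)
set_sizes = sets.sum(axis=1)  # cardinality of each prediction set
```

Under the usual exchangeability assumption between calibration and test data, sets built this way contain the true label with probability at least `1 - alpha`. The per-sample set size (`set_sizes` above) is the cardinality the abstract refers to: smaller sets at the same coverage level indicate a more informative predictor.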
