Huseyin Denli

Traversing within the Gaussian Typical Set: Differentiable Gaussianization Layers for Inverse Problems Augmented by Normalizing Flows

Dec 07, 2021

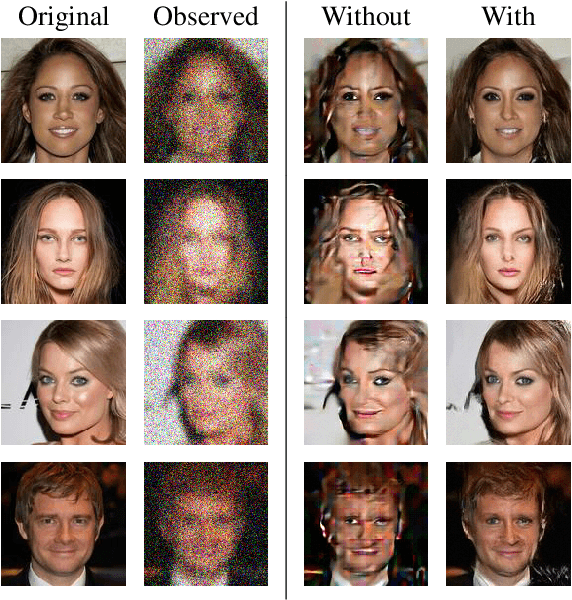

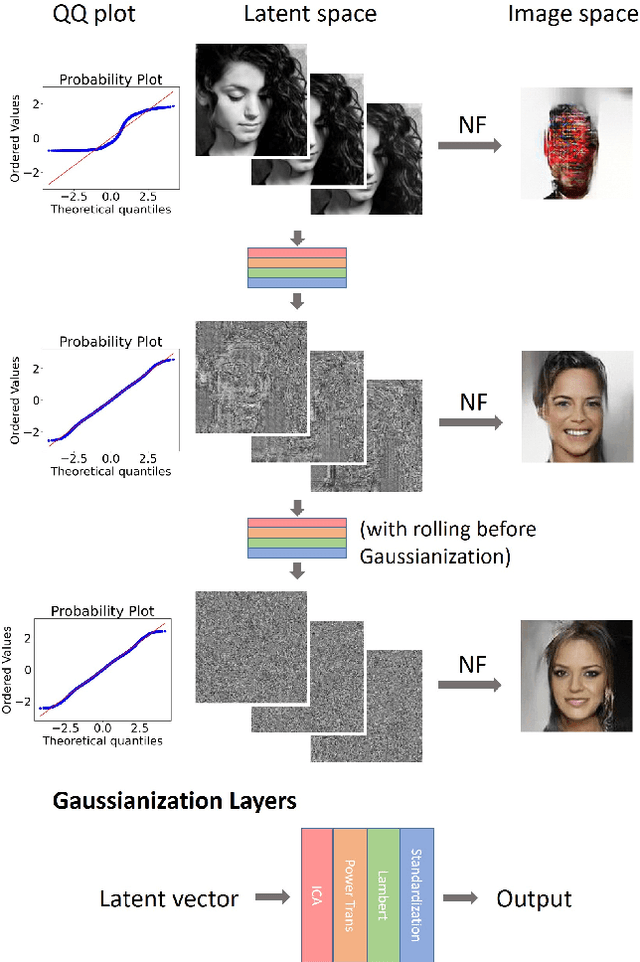

Abstract: Generative networks such as normalizing flows can serve as learning-based priors that augment inverse problems and help achieve high-quality results. However, as an inversion traverses the latent space, the latent vector may not remain a typical sample from the desired high-dimensional standard Gaussian distribution. As a result, it can be challenging to attain a high-fidelity solution, particularly in the presence of noise and inaccurate physics-based models. To address this issue, we propose to re-parameterize and Gaussianize the latent vector using novel differentiable, data-dependent layers in which custom operators are defined by solving optimization problems. These layers constrain the inversion to find a feasible solution within the Gaussian typical set of the latent space. We tested and validated our technique on an image deblurring task and on eikonal tomography, a PDE-constrained inverse problem, and achieved high-fidelity results in both cases.
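To make the notion of a "typical" latent vector concrete, here is a minimal sketch in plain Python. It relies on the standard fact that a sample from a d-dimensional standard Gaussian has a norm concentrated near sqrt(d), so a latent vector that an optimizer has shrunk toward the origin is no longer typical. The helper names (`latent_norm`, `is_typical`, `project_to_shell`), the tolerance, and the dimension are all assumptions made for illustration. The norm rescaling shown is only a crude stand-in that fixes the radius; it is not the differentiable Gaussianization layers proposed in the paper, which are defined by solving optimization problems.

```python
import math
import random

def latent_norm(z):
    """Euclidean norm of a latent vector given as a list of floats."""
    return math.sqrt(sum(v * v for v in z))

def is_typical(z, tol=0.1):
    """Crude check (illustrative only): is ||z|| within a relative
    tolerance `tol` of sqrt(d), the radius where Gaussian mass concentrates?"""
    d = len(z)
    return abs(latent_norm(z) / math.sqrt(d) - 1.0) <= tol

def project_to_shell(z):
    """Rescale a nonzero z so that ||z|| = sqrt(d).
    Hypothetical stand-in; this only corrects the radius and is NOT
    the paper's Gaussianization layers."""
    d = len(z)
    scale = math.sqrt(d) / latent_norm(z)
    return [v * scale for v in z]

rng = random.Random(0)   # fixed seed for reproducibility
d = 4096                 # assumed latent dimension

# A genuine standard Gaussian sample.
z = [rng.gauss(0.0, 1.0) for _ in range(d)]

# Mimic an inversion that has pulled the latent vector toward the mode.
z_shrunk = [0.5 * v for v in z]

print(is_typical(z))                           # sample lies near the sqrt(d) shell
print(is_typical(z_shrunk))                    # shrunk vector has left the shell
print(is_typical(project_to_shell(z_shrunk)))  # radius restored by rescaling
```

A radius check like this is necessary but not sufficient for typicality: a vector can have the right norm while its components are still far from Gaussian, which is one motivation for Gaussianizing the latent vector rather than merely renormalizing it.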
