Hemanth Saratchandran

How You Start Matters for Generalization

Jun 17, 2022

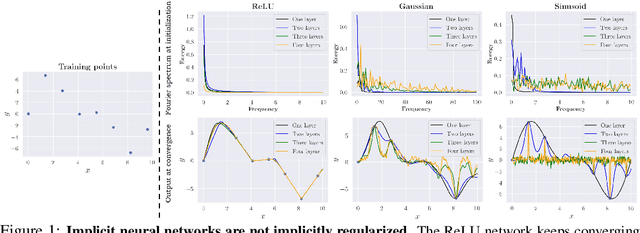

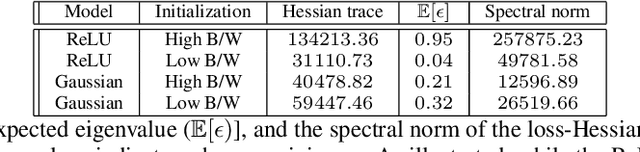

Abstract: Characterizing the remarkable generalization properties of over-parameterized neural networks remains an open problem. In this paper, we promote a shift of focus towards initialization rather than neural architecture or (stochastic) gradient descent to explain this implicit regularization. Through a Fourier lens, we derive a general result for the spectral bias of neural networks and show that the generalization of neural networks is heavily tied to their initialization. Further, we empirically solidify the developed theoretical insights using practical, deep networks. Finally, we make a case against the controversial flat-minima conjecture and show that Fourier analysis grants a more reliable framework for understanding the generalization of neural networks.
