Debasmita Pal

Task-conditioned Ensemble of Expert Models for Continuous Learning

Apr 14, 2025

Abstract:One of the major challenges in machine learning is maintaining the accuracy of the deployed model (e.g., a classifier) in a non-stationary environment. The non-stationary environment results in distribution shifts and, consequently, a degradation in accuracy. Continuous learning of the deployed model with new data could be one remedy. However, the question arises as to how we should update the model with new training data so that it retains its accuracy on the old data while adapting to the new data. In this work, we propose a task-conditioned ensemble of models to maintain the performance of the existing model. The method involves an ensemble of expert models based on task membership information. The in-domain models-based on the local outlier concept (different from the expert models) provide task membership information dynamically at run-time to each probe sample. To evaluate the proposed method, we experiment with three setups: the first represents distribution shift between tasks (LivDet-Iris-2017), the second represents distribution shift both between and within tasks (LivDet-Iris-2020), and the third represents disjoint distribution between tasks (Split MNIST). The experiments highlight the benefits of the proposed method. The source code is available at https://github.com/iPRoBe-lab/Continuous_Learning_FE_DM.

A Parametric Approach to Adversarial Augmentation for Cross-Domain Iris Presentation Attack Detection

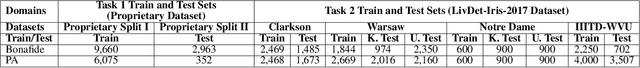

Dec 10, 2024Abstract:Iris-based biometric systems are vulnerable to presentation attacks (PAs), where adversaries present physical artifacts (e.g., printed iris images, textured contact lenses) to defeat the system. This has led to the development of various presentation attack detection (PAD) algorithms, which typically perform well in intra-domain settings. However, they often struggle to generalize effectively in cross-domain scenarios, where training and testing employ different sensors, PA instruments, and datasets. In this work, we use adversarial training samples of both bonafide irides and PAs to improve the cross-domain performance of a PAD classifier. The novelty of our approach lies in leveraging transformation parameters from classical data augmentation schemes (e.g., translation, rotation) to generate adversarial samples. We achieve this through a convolutional autoencoder, ADV-GEN, that inputs original training samples along with a set of geometric and photometric transformations. The transformation parameters act as regularization variables, guiding ADV-GEN to generate adversarial samples in a constrained search space. Experiments conducted on the LivDet-Iris 2017 database, comprising four datasets, and the LivDet-Iris 2020 dataset, demonstrate the efficacy of our proposed method. The code is available at https://github.com/iPRoBe-lab/ADV-GEN-IrisPAD.

Synthesizing Forestry Images Conditioned on Plant Phenotype Using a Generative Adversarial Network

Jul 07, 2023Abstract:Plant phenology and phenotype prediction using remote sensing data is increasingly gaining the attention of the plant science community to improve agricultural productivity. In this work, we generate synthetic forestry images that satisfy certain phenotypic attributes, viz. canopy greenness. The greenness index of plants describes a particular vegetation type in a mixed forest. Our objective is to develop a Generative Adversarial Network (GAN) to synthesize forestry images conditioned on this continuous attribute, i.e., greenness of vegetation, over a specific region of interest. The training data is based on the automated digital camera imagery provided by the National Ecological Observatory Network (NEON) and processed by the PhenoCam Network. The synthetic images generated by our method are also used to predict another phenotypic attribute, viz., redness of plants. The Structural SIMilarity (SSIM) index is utilized to assess the quality of the synthetic images. The greenness and redness indices of the generated synthetic images are compared against that of the original images using Root Mean Squared Error (RMSE) in order to evaluate their accuracy and integrity. Moreover, the generalizability and scalability of our proposed GAN model is determined by effectively transforming it to generate synthetic images for other forest sites and vegetation types.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge