Abderrahime Filali

Communication and Computation O-RAN Resource Slicing for URLLC Services Using Deep Reinforcement Learning

Feb 13, 2022

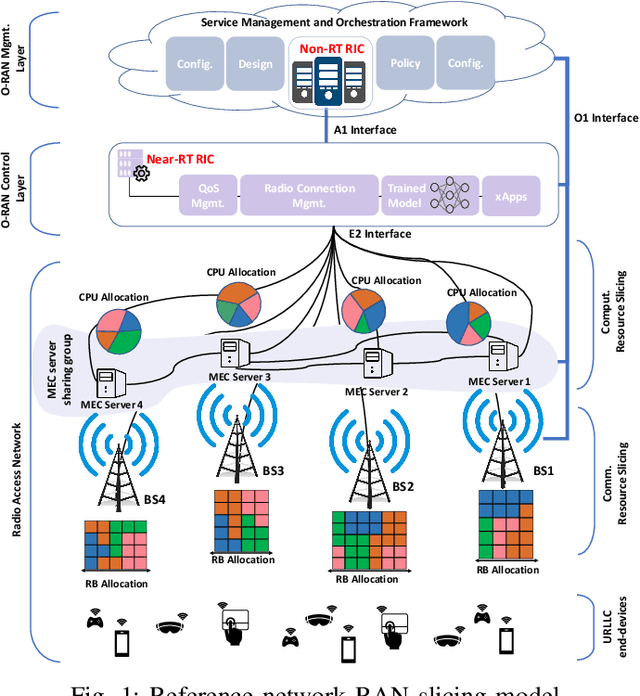

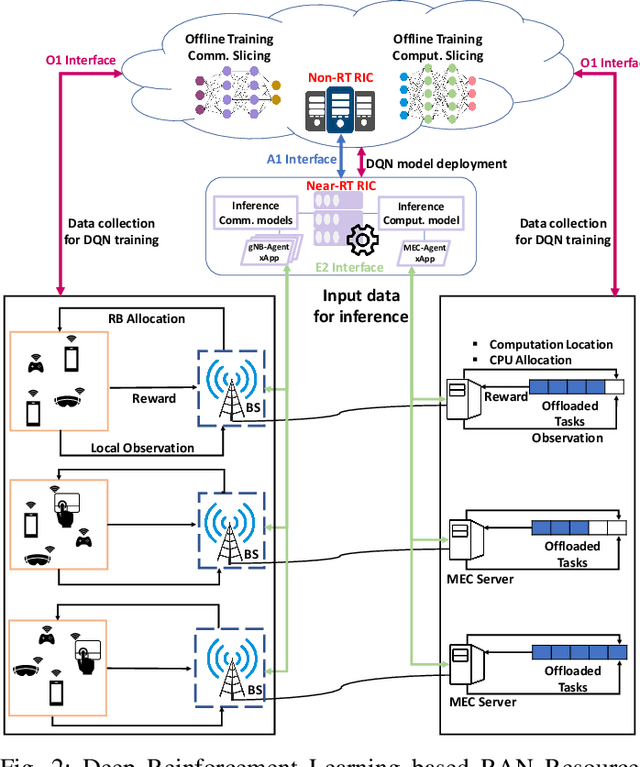

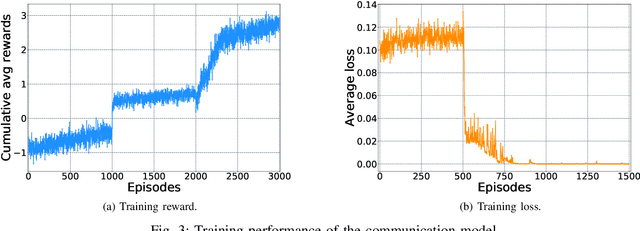

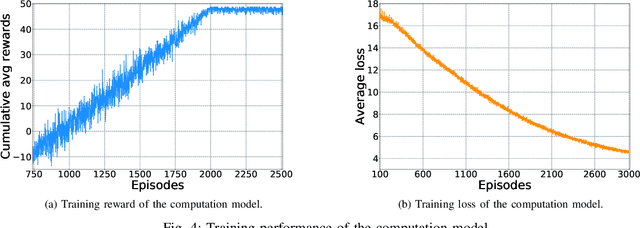

Abstract: The evolution of the future beyond-5G/6G networks towards a service-aware network is based on network slicing technology. With network slicing, communication service providers seek to meet all the requirements imposed by the verticals, including ultra-reliable low-latency communication (URLLC) services. In addition, the open radio access network (O-RAN) architecture paves the way for flexible sharing of network resources by introducing more programmability into the RAN. RAN slicing is an essential part of end-to-end network slicing since it ensures efficient sharing of communication and computation resources. However, due to the stringent requirements of URLLC services and the dynamics of the RAN environment, RAN slicing is challenging. In this article, we propose a two-level RAN slicing approach based on the O-RAN architecture to allocate the communication and computation RAN resources among URLLC end-devices. For each RAN slicing level, we model the resource slicing problem as a single-agent Markov decision process and design a deep reinforcement learning algorithm to solve it. Simulation results demonstrate the efficiency of the proposed approach in meeting the desired quality of service requirements.
