A. Mir

An enhanced KNN-based twin support vector machine with stable learning rules

Jun 22, 2019

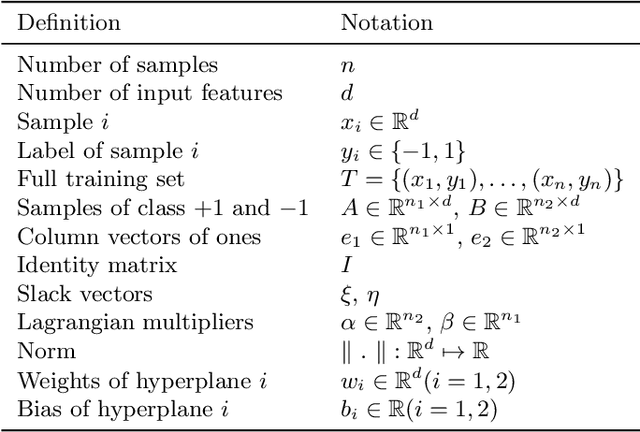

Abstract:Among the extensions of twin support vector machine (TSVM), some scholars have utilized K-nearest neighbor (KNN) graph to enhance TSVM's classification accuracy. However, these KNN-based TSVM classifiers have two major issues such as high computational cost and overfitting. In order to address these issues, this paper presents an enhanced regularized K-nearest neighbor based twin support vector machine (RKNN-TSVM). It has three additional advantages: (1) Weight is given to each sample by considering the distance from its nearest neighbors. This further reduces the effect of noise and outliers on the output model. (2) An extra stabilizer term was added to each objective function. As a result, the learning rules of the proposed method are stable. (3) To reduce the computational cost of finding KNNs for all the samples, location difference of multiple distances based k-nearest neighbors algorithm (LDMDBA) was embedded into the learning process of the proposed method. The extensive experimental results on several synthetic and benchmark datasets show the effectiveness of our proposed RKNN-TSVM in both classification accuracy and computational time. Moreover, the largest speedup in the proposed method reaches to 14 times.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge