Weakly Supervised Instance Segmentation using Motion Information via Optical Flow


Feb 25, 2022

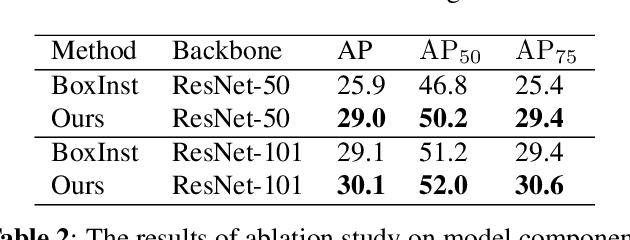

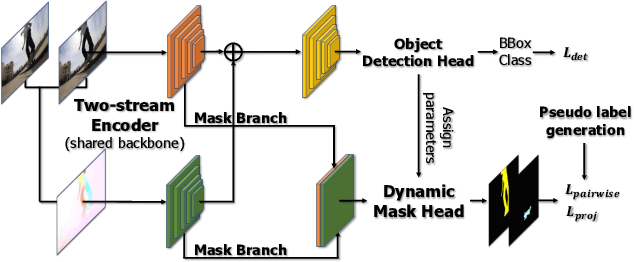

Weakly supervised instance segmentation has gained popularity because it reduces the high annotation cost of the pixel-level masks required for model training. Recent approaches to weakly supervised instance segmentation detect and segment objects using appearance information obtained from a static image. However, relying on appearance alone makes it difficult to identify objects whose appearance is not discriminative. In this study, we address this problem by using motion information from image sequences. We propose a two-stream encoder that leverages appearance and motion features extracted from images and optical flows. Additionally, we propose a novel pairwise loss that considers both appearance and motion information to supervise segmentation. We conducted extensive evaluations on the YouTube-VIS 2019 benchmark dataset. Our results demonstrate that the proposed method improves the Average Precision of the state-of-the-art method by 3.1.
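The abstract does not give the form of the pairwise loss, so the following is only an illustrative sketch and not the authors' formulation. It assumes a neighbour-affinity scheme: two adjacent pixels are encouraged to share a label only when both their colors and their optical-flow vectors are similar. The function names, the exponential similarity, and the parameters `theta_color`, `theta_flow`, and `tau` are all hypothetical choices made for this example.

```python
import numpy as np


def neighbour_similarity(feat, theta):
    """exp(-||f_i - f_j|| / theta) for each pixel and its right / lower neighbour."""
    right = np.exp(-np.linalg.norm(feat[:, 1:] - feat[:, :-1], axis=-1) / theta)
    down = np.exp(-np.linalg.norm(feat[1:, :] - feat[:-1, :], axis=-1) / theta)
    return right, down


def pairwise_loss(prob, image, flow, theta_color=2.0, theta_flow=1.0, tau=0.3, eps=1e-6):
    """Hypothetical appearance + motion pairwise loss (an assumption, not the paper's).

    prob  : (H, W) predicted foreground probability
    image : (H, W, 3) color values as floats
    flow  : (H, W, 2) optical flow vectors
    """
    color_sim = neighbour_similarity(image, theta_color)
    flow_sim = neighbour_similarity(flow, theta_flow)
    # Probability that each neighbouring pair receives the same label.
    same = (
        prob[:, 1:] * prob[:, :-1] + (1 - prob[:, 1:]) * (1 - prob[:, :-1]),
        prob[1:, :] * prob[:-1, :] + (1 - prob[1:, :]) * (1 - prob[:-1, :]),
    )
    total, count = 0.0, 0
    for s, c, f in zip(same, color_sim, flow_sim):
        # Supervise a pair only if it is similar in BOTH appearance and motion.
        mask = (c >= tau) & (f >= tau)
        total += float(np.sum(-np.log(np.clip(s, eps, 1.0)) * mask))
        count += int(mask.sum())
    return total / max(count, 1)


if __name__ == "__main__":
    H, W = 4, 6
    image = np.zeros((H, W, 3))            # uniform color: appearance is uninformative
    prob = np.zeros((H, W))
    prob[:, :3] = 1.0                      # left half predicted as foreground
    moving = np.zeros((H, W, 2))
    moving[:, 3:, 0] = 10.0                # right half moves differently
    static = np.zeros((H, W, 2))
    print(pairwise_loss(prob, image, moving))  # motion boundary removes the penalty
    print(pairwise_loss(prob, image, static))  # without motion cues the split is penalised
```

In this toy case the two halves look identical, so an appearance-only affinity would tie them together; the differing flow is what lets the loss accept a boundary between them, which is the intuition the abstract describes for objects with non-discriminative appearance.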
