User-driven Intelligent Interface on the Basis of Multimodal Augmented Reality and Brain-Computer Interaction for People with Functional Disabilities

Aug 15, 2017

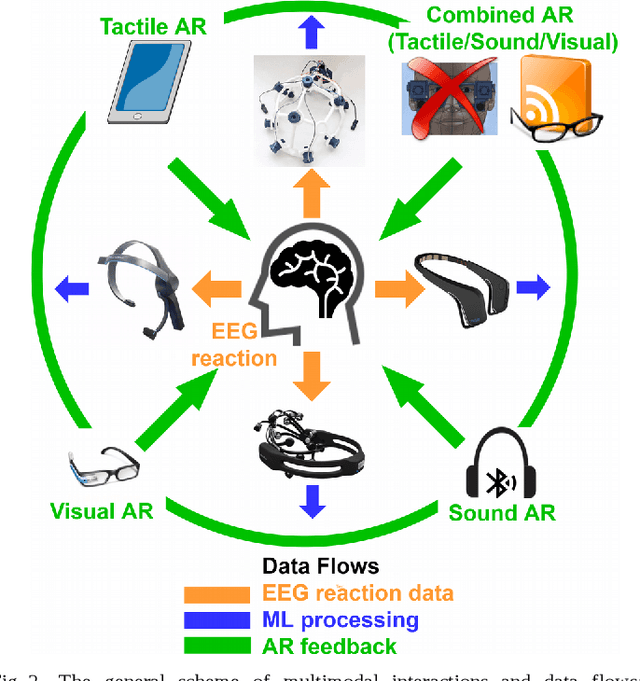

An analysis of current attempts to integrate several modes and use cases of user-machine interaction is presented. A new concept of a user-driven intelligent interface is proposed on the basis of multimodal augmented reality (AR) and brain-computer interaction for various applications: disability studies, education, home care, health care, etc. Several use cases of multimodal augmentation are presented. The prospects for better human comprehension through immediate feedback via neurophysical channels by means of brain-computer interaction are outlined. It is shown that brain-computer interface (BCI) technology provides new strategies to overcome the limits of currently available user interfaces, especially for people with functional disabilities. The results of previous studies of low-end consumer and open-source BCI devices allow us to conclude that the combination of machine learning (ML) and multimodal interactions (visual, sound, tactile) with BCI will profit from immediate feedback based on actual neurophysical reactions classified by ML methods. In general, BCI in combination with other modes of AR interaction can deliver much more information than these types of interaction by themselves. Even in their current state, combined AR-BCI interfaces could provide highly adaptable and personal services, especially for people with functional disabilities.
