Towards Explainable AI: Significance Tests for Neural Networks

Feb 16, 2019

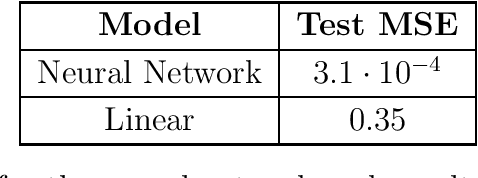

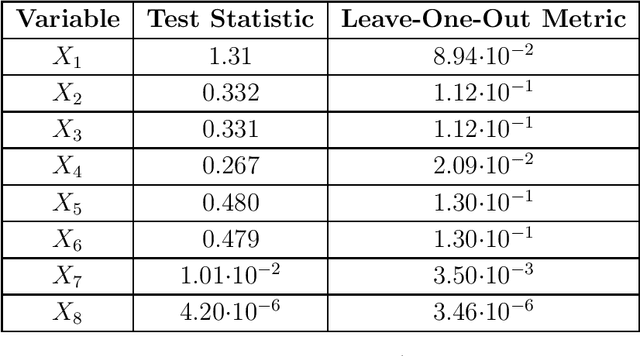

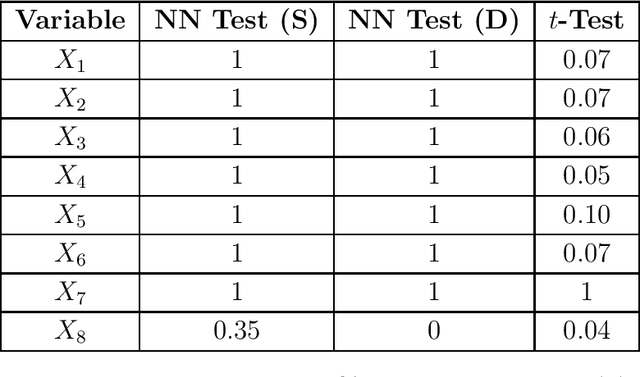

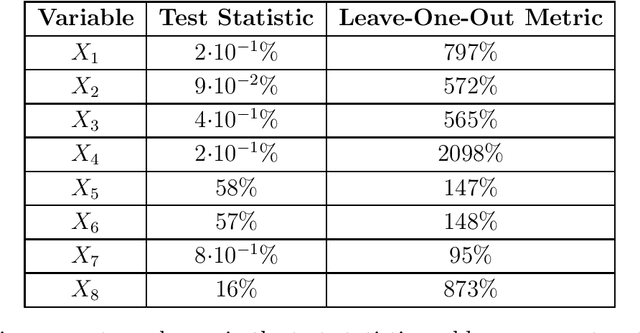

Neural networks underpin many of the best-performing AI systems. Their success is largely due to their strong approximation properties, superior predictive performance, and scalability. However, a major caveat is explainability: neural networks are often perceived as black boxes that permit little insight into how predictions are being made. We tackle this issue by developing a pivotal test to assess the statistical significance of the feature variables of a neural network. We propose a gradient-based test statistic and study its asymptotics using nonparametric techniques. The limiting distribution is given by a mixture of chi-square distributions. The tests enable one to discern the impact of individual variables on the prediction of a neural network. The test statistic can be used to rank variables according to their influence. Simulation results illustrate the computational efficiency and the performance of the test. An empirical application to house price valuation highlights the behavior of the test using actual data.
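As a rough illustration of what a gradient-based statistic might look like, the sketch below computes, for each feature, the mean squared partial derivative of a fitted network's output over a sample, and then ranks features by that value. The exact form of the statistic is an assumption here, since the abstract only says it is gradient-based. The one-hidden-layer tanh network, its weights `W`, `c`, `b`, and the function names are hypothetical and chosen only to keep the example self-contained. The sketch does not implement the test itself: judging significance would require comparing the statistic against the limiting mixture of chi-square distributions, which is not reproduced here.

```python
import math
import random


def network_gradient(x, W, c, b):
    """Gradient of f(x) = sum_k b[k] * tanh(W[k] . x + c[k]) with respect to x."""
    grad = [0.0] * len(x)
    for w_k, c_k, b_k in zip(W, c, b):
        z = sum(w * xi for w, xi in zip(w_k, x)) + c_k
        slope = b_k * (1.0 - math.tanh(z) ** 2)  # derivative of b_k * tanh(z) in z
        for j, w_kj in enumerate(w_k):
            grad[j] += slope * w_kj
    return grad


def gradient_statistics(X, W, c, b):
    """Mean squared partial derivative per feature, averaged over the sample X."""
    n, d = len(X), len(X[0])
    stats = [0.0] * d
    for x in X:
        g = network_gradient(x, W, c, b)
        for j in range(d):
            stats[j] += g[j] ** 2 / n
    return stats


rng = random.Random(0)

# Hypothetical "fitted" weights: 3 features, 4 hidden units.
# Feature 2 is given zero input weights, so the network ignores it.
W = [[rng.gauss(0, 1), rng.gauss(0, 1), 0.0] for _ in range(4)]
c = [rng.gauss(0, 1) for _ in range(4)]
b = [rng.gauss(0, 1) for _ in range(4)]

# Synthetic sample of 500 feature vectors (assumption: standard normal inputs).
X = [[rng.gauss(0, 1) for _ in range(3)] for _ in range(500)]

stats = gradient_statistics(X, W, c, b)

# Rank features from most to least influential under this statistic.
ranking = sorted(range(len(stats)), key=lambda j: stats[j], reverse=True)
```

In this toy setup the ignored feature has a statistic of exactly zero and lands last in `ranking`, which mirrors the idea of using the statistic to rank variables by influence. With a real network the gradients would typically come from automatic differentiation rather than a hand-written formula.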
