Semi-Supervised Few-Shot Classification with Deep Invertible Hybrid Models

Paper and Code

May 22, 2021

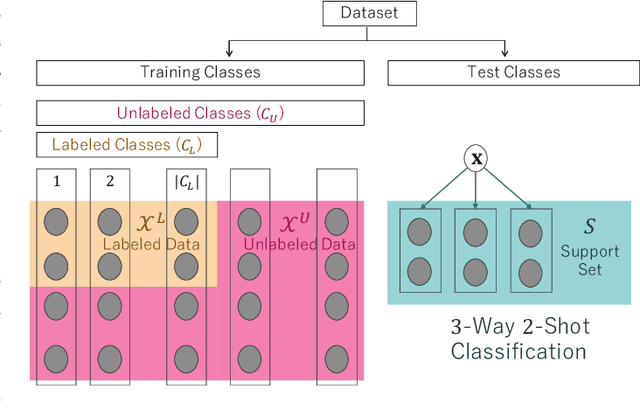

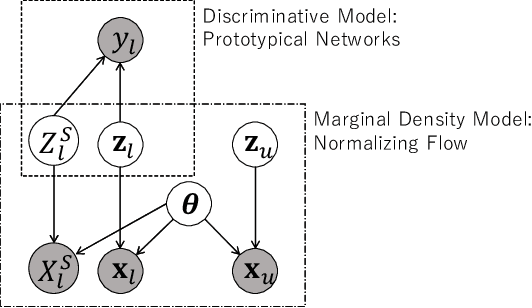

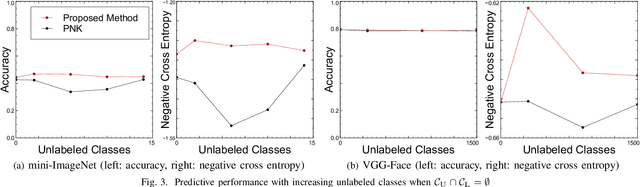

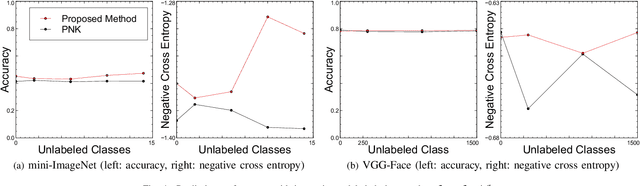

In this paper, we propose a deep invertible hybrid model which integrates discriminative and generative learning at a latent space level for semi-supervised few-shot classification. Various tasks for classifying new species from image data can be modeled as a semi-supervised few-shot classification, which assumes a labeled and unlabeled training examples and a small support set of the target classes. Predicting target classes with a few support examples per class makes the learning task difficult for existing semi-supervised classification methods, including selftraining, which iteratively estimates class labels of unlabeled training examples to learn a classifier for the training classes. To exploit unlabeled training examples effectively, we adopt as the objective function the composite likelihood, which integrates discriminative and generative learning and suits better with deep neural networks than the parameter coupling prior, the other popular integrated learning approach. In our proposed model, the discriminative and generative models are respectively Prototypical Networks, which have shown excellent performance in various kinds of few-shot learning, and Normalizing Flow a deep invertible model which returns the exact marginal likelihood unlike the other three major methods, i.e., VAE, GAN, and autoregressive model. Our main originality lies in our integration of these components at a latent space level, which is effective in preventing overfitting. Experiments using mini-ImageNet and VGG-Face datasets show that our method outperforms selftraining based Prototypical Networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge