Segmentation Strategies in Deep Learning for Prostate Cancer Diagnosis: A Comparative Study of Mamba, SAM, and YOLO


Sep 24, 2024

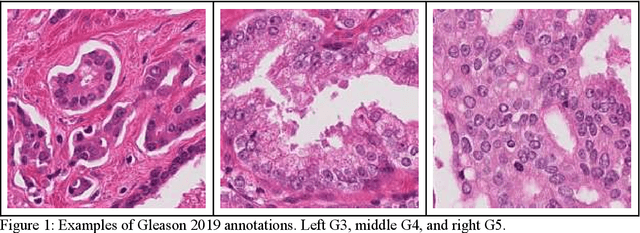

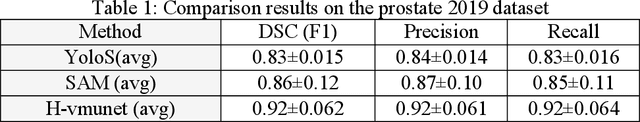

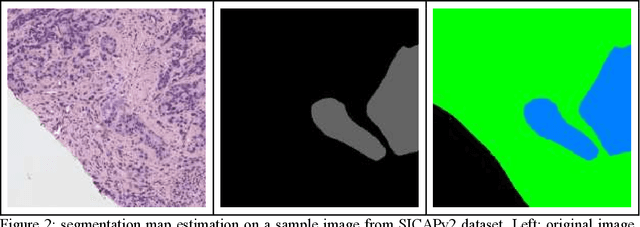

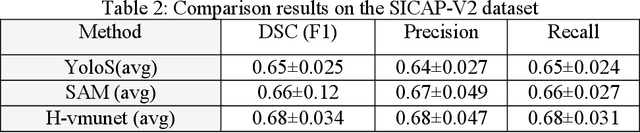

Accurate segmentation of prostate cancer histopathology images is crucial for diagnosis and treatment planning. This study compares three deep learning-based approaches (Mamba, SAM, and YOLO) for segmenting prostate cancer histopathology images. We evaluated these models on two comprehensive datasets, Gleason 2019 and SICAPv2, using Dice score, precision, and recall. Our results show that the High-order Vision Mamba UNet (H-vmunet) model outperforms the other two models, achieving the highest scores on all three metrics on both datasets. The H-vmunet architecture, which integrates high-order visual state spaces and 2D-selective-scan operations, enables efficient and sensitive lesion detection across different scales. Our study demonstrates the potential of H-vmunet for clinical applications and highlights the importance of robust validation and comparison of deep learning-based methods for medical image analysis. These findings contribute to the development of accurate and reliable computer-aided diagnosis systems for prostate cancer. The code is available at http://github.com/alibdz/prostate-segmentation.
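The three evaluation metrics are all derived from pixel-level true positives (TP), false positives (FP), and false negatives (FN): Dice = 2TP / (2TP + FP + FN), precision = TP / (TP + FP), and recall = TP / (TP + FN). The sketch below computes them for a single pair of binary masks. It is an illustration only, not the authors' evaluation code: the function names, the `eps` smoothing term, and the binary (lesion vs. background) setting are assumptions. The study's exact aggregation (for example, per-class averaging over Gleason grades, or per-image vs. dataset-level totals) is not specified here.

```python
from typing import Sequence

# Hypothetical mask type: a 2D binary mask where 1 = lesion pixel, 0 = background.
Mask = Sequence[Sequence[int]]


def confusion_counts(pred: Mask, target: Mask) -> tuple[int, int, int]:
    """Count true positives, false positives and false negatives pixel by pixel."""
    if len(pred) != len(target):
        raise ValueError("masks must have the same shape")
    tp = fp = fn = 0
    for pred_row, target_row in zip(pred, target):
        if len(pred_row) != len(target_row):
            raise ValueError("masks must have the same shape")
        for p, t in zip(pred_row, target_row):
            if p and t:
                tp += 1  # predicted lesion, truly lesion
            elif p:
                fp += 1  # predicted lesion, truly background
            elif t:
                fn += 1  # missed lesion pixel
    return tp, fp, fn


def segmentation_metrics(pred: Mask, target: Mask, eps: float = 1e-7) -> dict[str, float]:
    """Dice, precision and recall for one binary mask pair.

    eps is an assumed smoothing convention: it avoids division by zero and
    scores a pair of empty masks as a perfect match (1.0).
    """
    tp, fp, fn = confusion_counts(pred, target)
    dice = (2 * tp + eps) / (2 * tp + fp + fn + eps)
    precision = (tp + eps) / (tp + fp + eps)
    recall = (tp + eps) / (tp + fn + eps)
    return {"dice": dice, "precision": precision, "recall": recall}


if __name__ == "__main__":
    pred = [[1, 1, 0],
            [0, 1, 0]]
    target = [[1, 0, 0],
              [0, 1, 1]]
    m = segmentation_metrics(pred, target)
    # TP = 2, FP = 1, FN = 1, so all three metrics are 2/3 here.
    print({k: round(v, 3) for k, v in m.items()})
```

For binary masks, Dice equals the harmonic mean (F1) of precision and recall, which is why a model can score well on Dice only if it balances over-segmentation (low precision) against missed lesion pixels (low recall).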
