Recursive Neural Programs: Variational Learning of Image Grammars and Part-Whole Hierarchies

Paper and Code

Jun 26, 2022

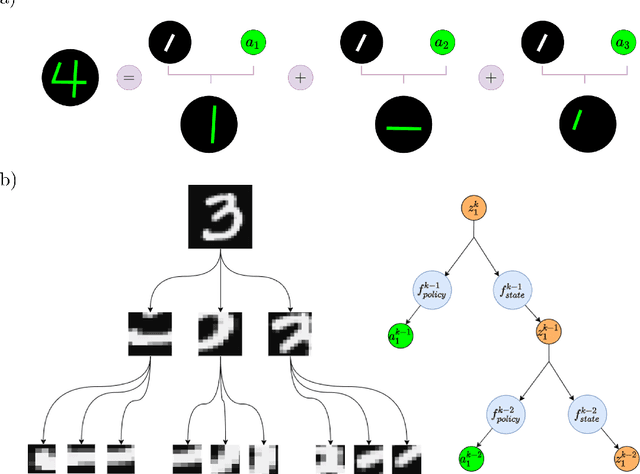

Human vision involves parsing and representing objects and scenes using structured representations based on part-whole hierarchies. Computer vision and machine learning researchers have recently sought to emulate this capability using capsule networks, reference frames and active predictive coding, but a generative model formulation has been lacking. We introduce Recursive Neural Programs (RNPs), to our knowledge the first neural generative model to address the part-whole hierarchy learning problem. RNPs model images as hierarchical trees of probabilistic sensory-motor programs that recursively reuse learned sensory-motor primitives to model an image within different reference frames, forming recursive image grammars. We express RNPs as structured variational autoencoders (sVAEs) for inference and sampling, and demonstrate the model's expressive power through parts-based parsing, sampling and one-shot transfer learning on the MNIST, Omniglot and Fashion-MNIST datasets. Our results show that RNPs provide an intuitive and explainable way of composing objects and scenes, allowing rich compositionality and the interpretation of objects in terms of part-whole hierarchies.
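For readers unfamiliar with the variational autoencoder framing mentioned in the abstract, the generic evidence lower bound (ELBO) that any VAE maximizes is sketched below. This is the standard textbook form, not the paper's specific objective. The symbols are assumptions introduced here for illustration: $x$ is an image, $z$ stands for all latent variables, $p_\theta$ is the generative model and $q_\phi$ is the approximate posterior.

$$
\log p_\theta(x) \;\ge\; \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big] \;-\; \mathrm{KL}\big(q_\phi(z \mid x)\,\|\,p(z)\big)
$$

In a structured VAE the latent $z$ would presumably factor across the levels of the part-whole tree, but the abstract does not state that factorization, so it is left unspecified here.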
