Quantifying multivariate redundancy with maximum entropy decompositions of mutual information

Apr 03, 2018

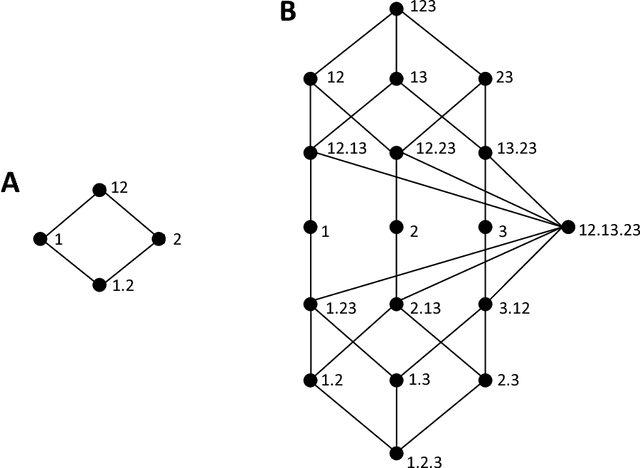

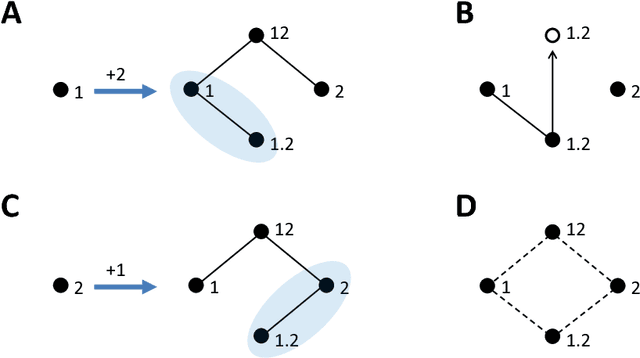

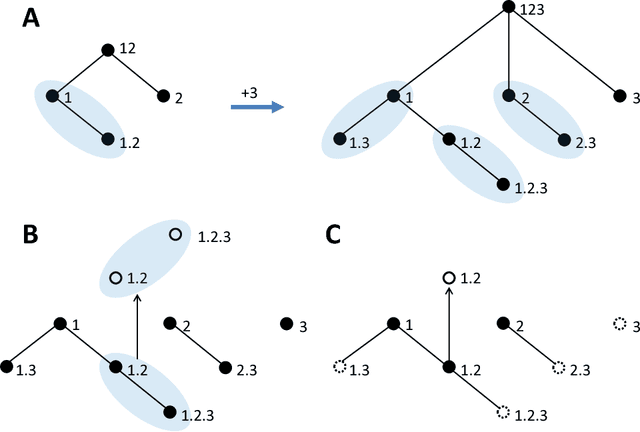

Williams and Beer (2010) proposed a nonnegative mutual information decomposition based on the construction of redundancy lattices. It separates the information that a set of variables contains about a target variable into nonnegative components, interpretable as the unique information of some variables not provided by others, as well as redundant and synergistic components. However, defining multivariate measures of redundancy that are nonnegative and conform to certain axioms capturing conceptually desirable properties of redundancy has proven elusive. Here we present a procedure to determine nonnegative multivariate redundancy measures within the maximum entropy framework. In particular, we generalize existing bivariate maximum entropy measures of redundancy and unique information, defining measures of the redundant information that a group of variables has about a target, and of the unique redundant information that a group of variables has about a target that is not redundant with information from another group. The approach has two key ingredients. First, the identification of a type of constraint on entropy maximization that allows isolating components of redundancy and unique redundancy by mirroring them to synergy components. Second, the construction of rooted tree-based decompositions of the mutual information, which conform to the axioms of the redundancy lattice through the local implementation, at each tree node, of binary unfoldings of the information using hierarchically related maximum entropy constraints. Altogether, the proposed measures quantify the different multivariate redundancy contributions of a nonnegative mutual information decomposition consistent with the redundancy lattice.
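To make the notions of synergy and redundancy concrete, the following minimal sketch computes the ordinary mutual information between a target and each of two sources, individually and jointly, for two textbook toy systems. It does not implement the maximum entropy measures proposed in the paper; the function names `mutual_information` and `source_target_informations` are illustrative assumptions, and outcomes are assumed equiprobable.

```python
from collections import defaultdict
from math import log2


def mutual_information(joint):
    """Return I(A;B) in bits from a dict {(a, b): probability}."""
    pa = defaultdict(float)
    pb = defaultdict(float)
    for (a, b), p in joint.items():
        pa[a] += p
        pb[b] += p
    return sum(
        p * log2(p / (pa[a] * pb[b]))
        for (a, b), p in joint.items()
        if p > 0
    )


def source_target_informations(samples):
    """Return (I(S;X1), I(S;X2), I(S;X1,X2)) in bits.

    `samples` is a list of (x1, x2, s) outcomes, assumed equiprobable
    (an assumption made only for this illustration).
    """
    p = 1.0 / len(samples)
    j1, j2, j12 = defaultdict(float), defaultdict(float), defaultdict(float)
    for x1, x2, s in samples:
        j1[(x1, s)] += p          # joint distribution of X1 and S
        j2[(x2, s)] += p          # joint distribution of X2 and S
        j12[((x1, x2), s)] += p   # joint distribution of (X1, X2) and S
    return (
        mutual_information(j1),
        mutual_information(j2),
        mutual_information(j12),
    )


# Synergy: S = X1 XOR X2. Neither source alone is informative about S.
xor_samples = [(a, b, a ^ b) for a in (0, 1) for b in (0, 1)]
xor_info = source_target_informations(xor_samples)

# Redundancy: X1 = X2 = S. Each source alone carries all the information.
copy_samples = [(a, a, a) for a in (0, 1)]
copy_info = source_target_informations(copy_samples)

print("XOR :", xor_info)
print("COPY:", copy_info)
```

In the XOR system the joint information exceeds the sum of the individual terms, which a decomposition would attribute to synergy. In the copy system both sources carry the same bit, which a decomposition would attribute to redundancy. Classical mutual information alone cannot separate these contributions in general, which is the gap that redundancy measures such as those described above aim to fill.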
