Online and Scalable Model Selection with Multi-Armed Bandits

Paper and Code

Jan 25, 2021

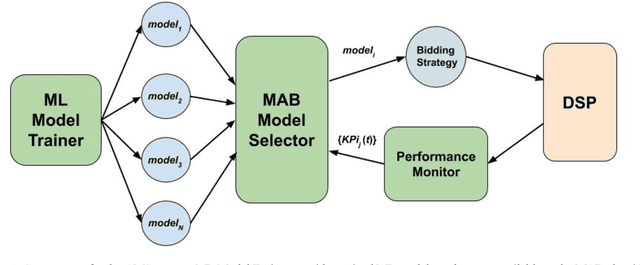

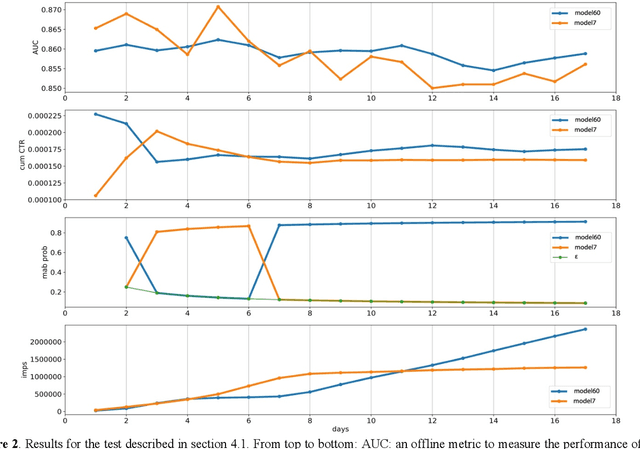

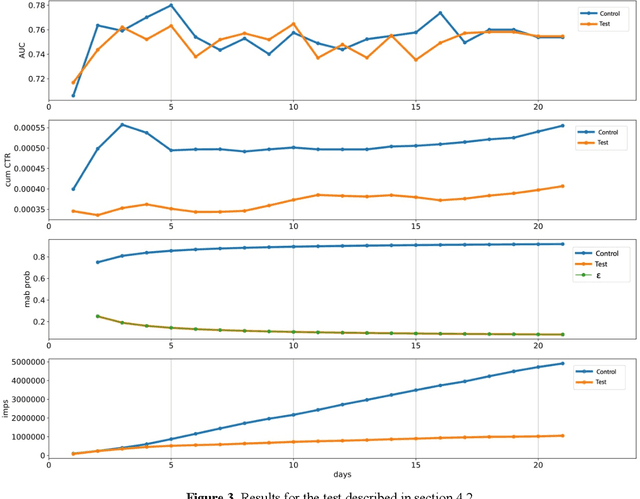

Many online applications running on live traffic are powered by machine learning models, for which training, validation, and hyper-parameter tuning are conducted on historical data. However, it is common for models demonstrating strong performance in offline analysis to yield poorer performance when deployed online. This problem is a consequence of the difficulty of training on historical data in non-stationary environments. Moreover, the machine learning metrics used for model selection may not sufficiently correlate with real-world business metrics used to determine the success of the applications being tested. These problems are particularly prominent in the Real-Time Bidding (RTB) domain, in which ML models power bidding strategies, and a change in models will likely affect performance of the advertising campaigns. In this work, we present Automatic Model Selector (AMS), a system for scalable online selection of RTB bidding strategies based on real-world performance metrics. AMS employs Multi-Armed Bandits (MAB) to near-simultaneously run and evaluate multiple models against live traffic, allocating the most traffic to the best-performing models while decreasing traffic to those with poorer online performance, thereby minimizing the impact of inferior models on overall campaign performance. The reliance on offline data is avoided, instead making model selections on a case-by-case basis according to actionable business goals. AMS allows new models to be safely introduced into live campaigns as soon as they are developed, minimizing the risk to overall performance. In live-traffic tests on multiple ad campaigns, the AMS system proved highly effective at improving ad campaign performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge