Normalisation of Weights and Firing Rates in Spiking Neural Networks with Spike-Timing-Dependent Plasticity


Sep 30, 2019

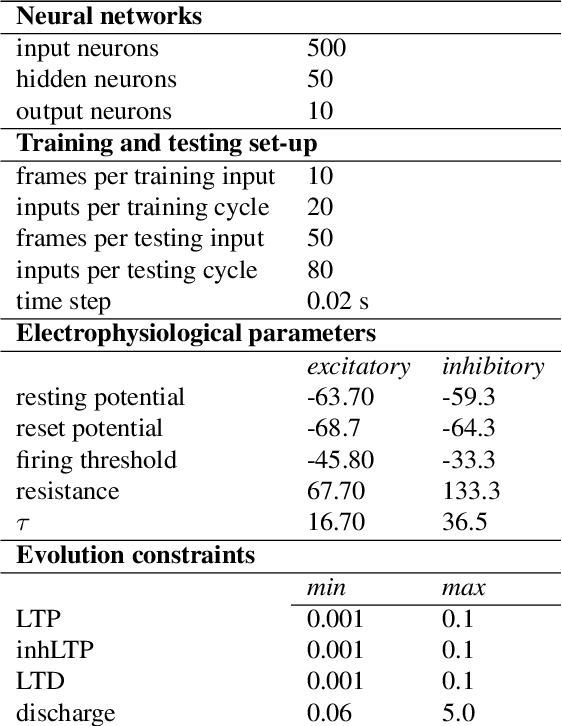

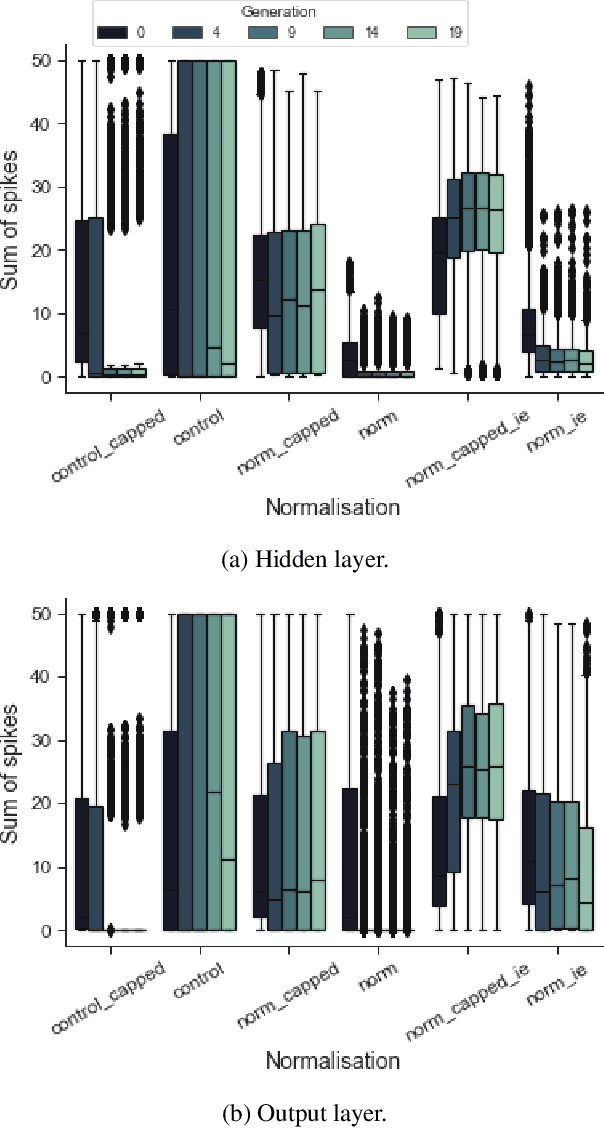

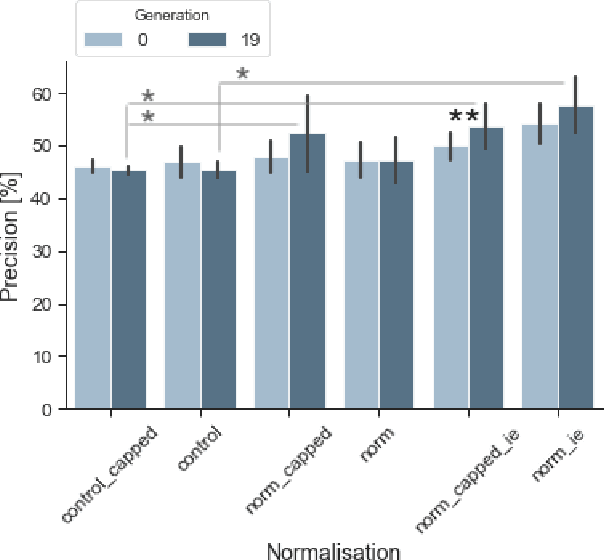

Maintaining the ability to fire sparsely is crucial for information encoding in neural networks. Additionally, spiking homeostasis is vital for spiking neural networks with changing numbers of weights and neurons. We discuss a range of network stabilisation approaches inspired by homeostatic synaptic plasticity mechanisms reported in the brain. These include weight scaling and weight change as a function of the network's spiking activity. We tested normalisation of the sum of weights, applied either across all neurons or separately by neuron type. We examined how this approach affects firing rate and performance on clustering of time-series data in the form of moving geometric shapes. We found that neuron type-specific normalisation is a promising approach for preventing weight drift in spiking neural networks, thus enabling longer training cycles. It can also be adapted for networks with architectural plasticity.
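As a rough illustration of what normalising the sum of weights by neuron type could look like, the sketch below rescales each neuron's weights so that they sum to a target chosen for that neuron's type. It is not the authors' implementation: the function names, the choice of incoming (rather than outgoing) weights, the type labels `"exc"` and `"inh"`, and the target values are all assumptions made for illustration.

```python
def normalise_incoming(weights, target_sum):
    """Scale non-negative weights multiplicatively so they sum to target_sum.

    Hypothetical helper; a neuron whose weights are all zero is left unchanged.
    """
    total = sum(weights)
    if total == 0:
        return list(weights)
    scale = target_sum / total
    return [w * scale for w in weights]


def normalise_by_type(weights, neuron_types, targets):
    """Apply a type-specific target sum to each neuron.

    weights[i]      -- incoming weights of neuron i (assumed layout)
    neuron_types[i] -- type label of neuron i (assumed labels)
    targets         -- mapping from type label to target weight sum (assumed values)
    """
    return [
        normalise_incoming(w, targets[t])
        for w, t in zip(weights, neuron_types)
    ]


if __name__ == "__main__":
    weights = [[1.0, 3.0], [2.0, 2.0], [0.0, 0.0]]
    neuron_types = ["exc", "inh", "exc"]
    targets = {"exc": 2.0, "inh": 1.0}
    for row in normalise_by_type(weights, neuron_types, targets):
        print(row, sum(row))
```

Normalising across all neurons, the other variant mentioned in the abstract, corresponds here to giving every type the same target. Multiplicative scaling keeps the relative strengths learned by STDP while bounding the total, which is one plausible way to limit weight drift over long training runs.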
