Non-negative matrix factorization based on generalized dual divergence

May 16, 2019

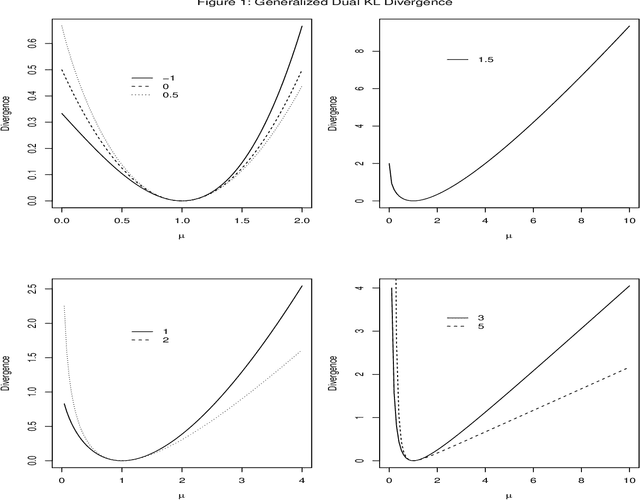

A theoretical framework for non-negative matrix factorization based on generalized dual Kullback-Leibler divergence, which includes members of the exponential family of models, is proposed. A family of algorithms is developed using this framework, and their convergence is proven using the Expectation-Maximization algorithm. The proposed approach generalizes some existing methods for different noise structures and contrasts with the recently proposed quasi-likelihood approach, thus providing a useful alternative for non-negative matrix factorization. A measure to evaluate the goodness-of-fit of the resulting factorization is described. This framework can be adapted to include penalty, kernel and discriminant functions, as well as tensors.
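For readers unfamiliar with divergence-based factorization, the sketch below may help fix ideas. It is not the algorithm proposed in the paper: it implements the classical multiplicative updates for the ordinary generalized Kullback-Leibler divergence D(V || WH), a well-known existing method for this kind of objective, and not the dual divergence studied here. The function names (`nmf_kl`, `kl_divergence`, `matmul`), the random initialization, and the small constant `eps` are illustrative assumptions. Plain Python lists are used to keep the example dependency-free.

```python
import math
import random


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def kl_divergence(V, WH, eps=1e-12):
    """Generalized KL divergence: sum of v*log(v/wh) - v + wh over all entries."""
    total = 0.0
    for v_row, wh_row in zip(V, WH):
        for v, wh in zip(v_row, wh_row):
            if v > 0:  # the term v*log(v/wh) is taken as 0 when v == 0
                total += v * math.log(v / (wh + eps))
            total += wh - v
    return total


def ratio(V, WH, eps):
    """Elementwise ratio V / (WH + eps)."""
    return [[v / (wh + eps) for v, wh in zip(v_row, wh_row)]
            for v_row, wh_row in zip(V, WH)]


def nmf_kl(V, rank, n_iter=200, seed=0, eps=1e-12):
    """Factor a non-negative matrix V (n x m) as W (n x rank) times H (rank x m).

    Uses the classical multiplicative updates for the generalized KL
    divergence. Returns W, H and the divergence after each iteration.
    """
    rng = random.Random(seed)
    n, m = len(V), len(V[0])
    # Strictly positive random start (an assumption of this sketch).
    W = [[rng.random() + 0.1 for _ in range(rank)] for _ in range(n)]
    H = [[rng.random() + 0.1 for _ in range(m)] for _ in range(rank)]
    history = []
    for _ in range(n_iter):
        # H[k][j] *= sum_i W[i][k] * R[i][j] / sum_i W[i][k]
        R = ratio(V, matmul(W, H), eps)
        w_col = [sum(W[i][k] for i in range(n)) for k in range(rank)]
        H = [[H[k][j] * sum(W[i][k] * R[i][j] for i in range(n)) / (w_col[k] + eps)
              for j in range(m)] for k in range(rank)]
        # W[i][k] *= sum_j H[k][j] * R[i][j] / sum_j H[k][j]
        R = ratio(V, matmul(W, H), eps)
        h_row = [sum(H[k]) for k in range(rank)]
        W = [[W[i][k] * sum(H[k][j] * R[i][j] for j in range(m)) / (h_row[k] + eps)
              for k in range(rank)] for i in range(n)]
        history.append(kl_divergence(V, matmul(W, H), eps))
    return W, H, history


if __name__ == "__main__":
    V = [[5.0, 3.0, 0.0, 1.0],
         [4.0, 0.0, 0.0, 1.0],
         [1.0, 1.0, 0.0, 5.0],
         [0.0, 1.0, 5.0, 4.0]]
    W, H, history = nmf_kl(V, rank=2, n_iter=100)
    print("divergence after first and last iteration:", history[0], history[-1])
```

Because both updates multiply non-negative entries by non-negative factors, W and H stay non-negative throughout, and the recorded divergence can be tracked as a simple fit diagnostic. This is separate from the goodness-of-fit measure the paper describes.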
